How to Lead an AI “Team Member”: What Leadership Skill Boards Will Look For

Imagine your team just got a new coworker.

They work all the time. They never take time off. They can turn around research in seconds and drafts in minutes. And here’s the catch: they will sometimes make up facts with complete confidence, unless you design the work so they can’t.

This isn’t a thought experiment. This is what any executive who is adding large language models and autonomous agents to knowledge work has to deal with.

And the sad truth is that most leadership training hasn’t prepared managers for it.

Adding AI agents to the team doesn’t make leadership easier. It makes leadership more important.

The Gap in Leadership Training

Management training has always lagged behind practice. We taught leaders how to work in hierarchies while businesses switched to matrices. We taught in-person collaboration while work moved remote. We are repeating the pattern with AI.

A lot of businesses have bought AI tools. Far fewer have invested in teaching leaders how to run AI-augmented workflows, where AI is not just a tool but an active participant in analysis, drafting, and recommendations.

The outcome is predictable: AI-enhanced teams that fail to meet expectations, not because the technology is inadequate but because managerial rigor is missing.

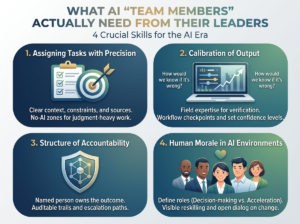

What AI “Team Members” Really Need from Their Leaders

Managing an AI system is not the same as managing a person. But it is still management: defining work, setting standards, checking quality, and making sure people are accountable.

Here are four skills that boards should expect leaders to develop quickly.

1) Assigning tasks with precision

The quality of AI output is determined more by how the task is defined than by how good the model is. Unclear inputs lead to unclear outputs. Requests that aren’t well-defined lead to more confident mistakes.

A leader’s advantage lies in turning an organizational need into clear instructions that include the context, constraints, assumptions, sources, and required output format. Good leaders also recognize when a task is not suitable for AI and a person must take charge.

Actions of leaders

- Standardize task briefs (goal, audience, limits, acceptable sources, and quality bar).

- Make assumptions explicit (“what needs to be true for this to work?”).

- Set “no-AI zones” for work that requires a lot of judgment or has serious consequences unless there are strict controls in place.
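For teams that capture briefs in software, the standardized brief described above could be sketched as a small data structure. This is only an illustration under stated assumptions: the `TaskBrief` class, its field names, and the `ready_for_ai` check are hypothetical, chosen to mirror the bullet points rather than any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """Hypothetical task brief; the fields mirror the checklist above."""
    goal: str
    audience: str
    limits: list[str]
    acceptable_sources: list[str]
    quality_bar: str
    # Assumptions stated up front: "what needs to be true for this to work?"
    assumptions: list[str] = field(default_factory=list)
    # True when the work is reserved for people (a "no-AI zone").
    no_ai_zone: bool = False

    def missing_fields(self) -> list[str]:
        """Return the names of required fields that were left empty."""
        required = {
            "goal": self.goal,
            "audience": self.audience,
            "limits": self.limits,
            "acceptable_sources": self.acceptable_sources,
            "quality_bar": self.quality_bar,
        }
        return [name for name, value in required.items() if not value]

    def ready_for_ai(self) -> bool:
        """A brief goes to AI only if it is complete and not a no-AI zone."""
        return not self.no_ai_zone and not self.missing_fields()
```

A brief with an empty goal, for example, would be flagged by `missing_fields()` before any prompt is written, which is the point of standardizing the brief in the first place.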

2) Calibration of output

Even when it is wrong, AI can be convincing. Leaders need to change how they think about evaluations. Instead of just asking “Is this right?” they should also ask “How would we know if it’s wrong, and what would it cost if we missed it?”

This requires fluency in the field. You can’t calibrate AI output in a domain you don’t know well, and you can’t safely scale AI without repeatable verification habits.

Actions of leaders

- Add steps for verification (triangulation, source checking, and peer review) to workflows.

- Set confidence levels based on the use case (drafting, decision support, or external-facing).

- Keep an eye on where mistakes happen and change the guardrails as needed.

3) Structure of accountability

When AI output hurts a customer, a partner, or a decision-making process, the model is not to blame. The business owns it, and in the end, a named person owns it.

That only works if you build accountability in: assigned ownership, audit trails, escalation paths, and review checkpoints at every important decision point.

Actions of leaders

- Require human sign-off at defined decision points.

- Keep auditable records of prompts, sources, outputs, edits, and approvals.

- Make escalation plans for times of uncertainty, conflict, and big problems.
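As one way to picture the record-keeping and sign-off described above, here is a minimal sketch. The `AuditRecord` class, its fields, and the `can_release` gate are hypothetical names used for illustration; a real system would also need durable storage and access controls, which are out of scope here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditRecord:
    """Hypothetical record of one AI-assisted piece of work."""
    prompt: str
    sources: list[str]
    output: str
    edits: list[str] = field(default_factory=list)
    approved_by: Optional[str] = None
    approved_at: Optional[datetime] = None

    def sign_off(self, owner: str) -> None:
        """Record the named person who takes ownership of the output."""
        if not owner.strip():
            raise ValueError("sign-off requires a named person")
        self.approved_by = owner
        self.approved_at = datetime.now(timezone.utc)


def can_release(record: AuditRecord) -> bool:
    """Assumed policy: nothing leaves the team without a human sign-off."""
    return record.approved_by is not None
```

The design choice worth noting is that approval is attached to a named person and a timestamp, not to a team or a system, which matches the principle that a named person ultimately owns the output.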

4) Morale of people in AI environments

The most overlooked challenge is human: when AI comes into the workplace, professionals may feel threatened, undervalued, or unsure of what will happen next. If leaders don’t pay attention to this, people will stop caring, then leave, and trust will slowly fade away.

AI can’t take the place of morale. And it can’t fix damage to culture.

Actions of leaders

- Clearly define roles: people are in charge of making decisions, building relationships, and being accountable; AI speeds up the process.

- Put money into role-based reskilling that is linked to real changes in the way things work.

- Make the change something people can talk about openly. Leaders should not act as if the anxiety isn’t there.

A Different Kind of Performance Review

AI systems need to have their performance reviewed on a regular basis, not just once a year.

The Quarterly AI Performance Review

For each AI system that is part of workflows, look at:

- Task Fit: Are we using it for the right tasks?

- Accuracy Rate: What is the rate of mistakes, and where do they happen most often?

- Dependency Risk: Are people losing the skills for work that AI now does?

- Accountability Gaps: Where can AI output go without being properly checked?

- Trust Calibration: Are teams over-trusting the output, under-using the controls, or skipping them altogether?

This isn’t red tape. It is how you run a business in which both value and risk can grow at machine speed.
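The accuracy question in the review above could be answered with a very small tally, assuming the team logs each human check of an AI output. The sketch below is illustrative only: the `error_summary` function and the `(task_type, was_wrong)` log format are assumptions, not an established standard.

```python
from collections import Counter


def error_summary(reviews):
    """Summarize logged human checks of AI output.

    `reviews` is an assumed log format: a list of (task_type, was_wrong)
    pairs, one per checked output. Returns the overall error rate and the
    task types ranked by number of errors, most frequent first.
    """
    total = len(reviews)
    errors = [task_type for task_type, was_wrong in reviews if was_wrong]
    rate = len(errors) / total if total else 0.0
    return rate, Counter(errors).most_common()
```

Even a tally this simple answers both halves of the question: how often the system is wrong, and where the mistakes cluster.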

The Hardest Part: Staying in Charge

Outsourcing judgment is a subtle failure mode that lowers the quality of leadership over time.

It begins innocently: a leader asks AI for a suggestion and takes it without question. Over time, leaders stop questioning outputs. Their own judgment gets worse. Instead of being responsible decision-makers, they become a pass-through for AI content.

The best leaders see AI as a smart, hard-working junior analyst who is always in need of review, context, and human ownership.

You have already given up leadership if you can’t explain why the AI suggestion is right or wrong.

Important Points

- AI is now a useful “team member” in knowledge work. Treat it like one, with management discipline.

- Leaders need to develop four skills: precise tasking, output calibration, accountability architecture, and sustaining team morale.

- Every three months, do an AI performance audit to check for task fit, accuracy, dependency risk, accountability gaps, and trust calibration.

- The biggest risk isn’t AI making mistakes; it’s mistakes that aren’t found that affect decisions on a large scale.

- The most dangerous failure mode is judgment atrophy: letting AI “hold the pen” on important decisions.

Prompt for the board: Where in our operating model is AI already making decisions, and how can we show that the chain of human accountability is still in place?
