Spec-Driven Development: The Missing Operating Discipline in AI-Enhanced Engineering

The problem isn’t that AI writes bad code. The problem is that we hand it vague business language and call it requirements.

The same failure pattern is showing up across organizations.

Teams copy a user story into an AI coding assistant and tell it to “build this.” The AI then gives them something that compiles, passes tests, and still ships risk. Not because the model is bad at its job, but because it had to make decisions that leaders never made clear.

Six weeks later, those “silent decisions” surface as customer problems, regulatory issues, financial losses, or operational incidents. By then it’s hard to see what went wrong: the system did exactly what it was asked to do, without ever being told precisely what that was.

This is not a tooling problem. It’s a governance and interface problem.

You’re Not Writing Prompts. You’re Writing Contracts.

When you hand work to experienced engineers, they don’t just execute; they ask questions. They want to know what “archive” means. They think through what “cancel” means for billing, data retention, audit trails, and customer rights. They raise edge cases before any code exists.

AI doesn’t do any of that. It has no institutional memory, no implicit understanding of your risk posture, and no instinct for what the Board would find unacceptable. It only has your text and a tendency to fill in the blanks with defaults.

So when your input says, “Let users archive their account,” the AI has to pick:

- Is it permanent or can it be undone?

- Does it take away access right away or at the end of the term?

- Does it keep the data or delete it?

- Does it stop billing? Does it need approval?

- What does compliance entail—retention, auditability, legal hold?

Every one of those interpretations is plausible. The model picks one. Quietly. Confidently. And your company inherits it the moment the change merges.
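One way to take those choices back from the model is to write them down as data. The sketch below is a minimal, hypothetical illustration: `ArchivePolicy`, `AccessCutoff`, and `accessEndsAt` are invented names, and the fields mirror several of the questions above (the compliance questions are left out for brevity). The point is that each answer becomes an explicit input the code must handle, not a default the model picked.

```typescript
// Hypothetical types for illustration; the fields mirror the open questions above.
type AccessCutoff = "immediate" | "end_of_term";

interface ArchivePolicy {
  reversible: boolean;
  accessCutoff: AccessCutoff;
  dataHandling: "retain" | "delete";
  stopsBilling: boolean;
  requiresApproval: boolean;
}

// Computes when access ends, driven entirely by the stated policy.
function accessEndsAt(policy: ArchivePolicy, archivedAt: Date, termEnd: Date): Date {
  const cutoff = policy.accessCutoff;
  switch (cutoff) {
    case "immediate":
      return archivedAt;
    case "end_of_term":
      return termEnd;
    default: {
      // Compile-time check: a newly added cutoff option must be handled explicitly.
      const unhandled: never = cutoff;
      throw new Error("Unhandled access cutoff: " + String(unhandled));
    }
  }
}
```

If someone later adds a third cutoff option, the `never` assignment stops compiling until a person decides what that option means.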

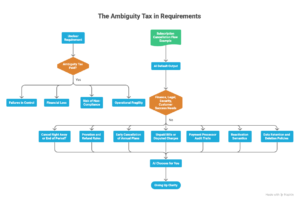

The Ambiguity Tax: What It Is and Why Boards Should Care

Every under-specified requirement carries an ambiguity tax.

That tax comes due later as:

- Control failures (unapproved product behavior becomes “the system”)

- Financial loss (mishandled proration, refunds, credits, and renewals)

- Compliance risk (unclear retention, consent, and audit logging)

- Operational fragility (edge cases turn into incidents instead of backlog items)

“Build a subscription cancellation flow” is a classic example.

A typical AI output cancels a record, changes a status, and shows a confirmation screen. It is “done.” It is also incomplete in ways that matter to Finance, Legal, Security, and Customer Success:

- Cancel immediately or at the end of the billing period?

- Proration and refund rules

- Annual plans canceled early

- Outstanding invoices or disputed charges

- Audit trail requirements for payment processors

- Reactivation semantics: do you need the same subscription ID or a new contract?

- Retention windows and deletion policies for customer data

If you don’t say what you want, the AI will choose for you. That’s not acceleration; that’s giving up control.

The Format Is the Root Cause

User stories were designed to help people collaborate, not to be executed by machines.

“As a user, I want X so that Y” deliberately compresses context. It assumes shared knowledge, professional judgment, and the chance to ask follow-up questions during sprint planning.

That compression works for people. It doesn’t work as an interface for AI.

When you give a model a user story, you’re giving it a headline and expecting it to put together the full operating policy that goes with it.

AI doesn’t need compression. Before it acts, it needs boundaries, constraints, definitions, and failure modes spelled out in plain language.

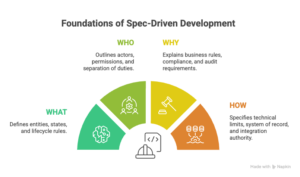

The Change: From Story to Determinism

Moving to spec-driven development doesn’t mean heavy documentation. It’s a deliberate way to remove ambiguity before it turns into behavior.

A lightweight spec with four short sections covers most enterprise-grade features:

WHAT: entities, states, and lifecycle rules

WHO: actors, permissions, and the separation of duties

WHY: business rules, compliance rules, and audit requirements

HOW: technical constraints, system of record, and integration authority

This isn’t “process.” This is control.

Give the AI these four dimensions and it stops guessing and starts executing. The code becomes a translation of explicit policy, not a collection of plausible defaults.

What This Looks Like in Real Life (One Page, Not Twenty)

Feature: Canceling a Subscription

WHAT

- Subscription states: active, canceled, expired

- Cancellation is irreversible; a canceled subscription cannot be reactivated.

- Data is retained for 90 days after cancellation, then deleted (unless a legal hold applies)
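As a rough sketch of how directly the WHAT section maps to code, the rules above fit in a transition table and one date calculation. The names here (`ALLOWED_TRANSITIONS`, `canTransition`, `deletionDueAt`) are hypothetical. Two things are assumptions the spec does not state: that an active subscription may move to either canceled or expired, and that expired is also a terminal state.

```typescript
type SubscriptionState = "active" | "canceled" | "expired";

// Transition table. "canceled" has no outgoing transitions: cancellation is irreversible.
const ALLOWED_TRANSITIONS: Record<SubscriptionState, readonly SubscriptionState[]> = {
  active: ["canceled", "expired"], // assumption: both exits from "active" are allowed
  canceled: [],
  expired: [], // assumption: the spec does not say whether expired can change
};

function canTransition(from: SubscriptionState, to: SubscriptionState): boolean {
  return ALLOWED_TRANSITIONS[from].indexOf(to) !== -1;
}

const RETENTION_DAYS = 90;
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Returns when data becomes due for deletion, or null while a legal hold applies.
function deletionDueAt(canceledAt: Date, legalHold: boolean): Date | null {
  if (legalHold) {
    return null;
  }
  return new Date(canceledAt.getTime() + RETENTION_DAYS * MS_PER_DAY);
}
```

Note that the sketch had to pick answers for the two assumptions above. A stricter spec would settle them before any code is generated.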

WHO

- The account owner can cancel

- Team members cannot change subscription status; billing admins can view it.
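The WHO section reads naturally as a permission table. This is a minimal sketch with hypothetical role and action names. It assumes the account owner can also view status, and it denies team members viewing by default, since the spec above is silent on both points.

```typescript
type Role = "account_owner" | "billing_admin" | "team_member";
type Action = "cancel_subscription" | "view_subscription_status";

// Deny by default: an action is allowed only if it is listed for the role.
const PERMISSIONS: Record<Role, readonly Action[]> = {
  account_owner: ["cancel_subscription", "view_subscription_status"], // viewing is an assumption
  billing_admin: ["view_subscription_status"],
  team_member: [], // assumption: the spec does not grant team members any action
};

function isAllowed(role: Role, action: Action): boolean {
  return PERMISSIONS[role].indexOf(action) !== -1;
}
```

For example, `isAllowed("billing_admin", "cancel_subscription")` returns `false`, which is the separation of duties the spec asks for.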

WHY

- Cancellation is blocked while any invoice is unpaid.

- Every state change must be recorded in the audit log with a timestamp and the ID of the user who made the change.

- Cancellation must also notify the billing provider.
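The three WHY rules can be sketched as one guarded function. Everything named here (`cancelSubscription`, `CancelDeps`, `AuditEntry`) is hypothetical, the audit log is a plain in-memory array, and the billing notification is an injected callback standing in for a real provider call. The sketch also ignores the HOW constraints that follow; in a design where the billing provider is the system of record, the state change itself would come back from the provider and would not be set locally.

```typescript
type SubscriptionState = "active" | "canceled" | "expired";

interface Subscription {
  id: string;
  state: SubscriptionState;
  hasUnpaidInvoice: boolean;
}

interface AuditEntry {
  at: string; // ISO 8601 timestamp
  userId: string;
  subscriptionId: string;
  from: SubscriptionState;
  to: SubscriptionState;
}

// Hypothetical collaborators, injected so the rules can be tested in isolation.
interface CancelDeps {
  now: () => Date;
  auditLog: AuditEntry[];
  notifyBillingProvider: (subscriptionId: string) => void;
}

type CancelResult =
  | { ok: true; subscription: Subscription }
  | { ok: false; reason: "not_active" | "unpaid_invoice" };

function cancelSubscription(sub: Subscription, userId: string, deps: CancelDeps): CancelResult {
  // Assumption: only an active subscription can be canceled.
  if (sub.state !== "active") {
    return { ok: false, reason: "not_active" };
  }
  // Business rule: an unpaid invoice blocks cancellation.
  if (sub.hasUnpaidInvoice) {
    return { ok: false, reason: "unpaid_invoice" };
  }
  const canceled: Subscription = { ...sub, state: "canceled" };
  // Audit rule: record the timestamp and the acting user for every state change.
  deps.auditLog.push({
    at: deps.now().toISOString(),
    userId: userId,
    subscriptionId: sub.id,
    from: sub.state,
    to: canceled.state,
  });
  // Notification rule: tell the billing provider about the cancellation.
  deps.notifyBillingProvider(sub.id);
  return { ok: true, subscription: canceled };
}
```

A rejected cancellation writes nothing to the audit log because no state changed. Whether rejected attempts should be logged too is another decision the spec would need to make.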

HOW

- Node.js for the backend and Stripe for billing

- Stripe is the system of record for subscription state.

- The database mirrors Stripe via webhooks; application code never writes subscription state directly to the database.
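The “mirror, never write directly” rule can be sketched as a store with exactly one write path. This is an illustration under stated assumptions, not Stripe’s API: `MirroredEvent` is a hypothetical, simplified shape that a webhook handler might build after verifying and parsing a real event, and the in-memory object stands in for the database. Mapping the provider’s own status values onto the spec’s three states is a further decision that this sketch leaves out.

```typescript
type SubscriptionState = "active" | "canceled" | "expired";

// Hypothetical, simplified event; not the provider's actual payload shape.
interface MirroredEvent {
  subscriptionId: string;
  state: SubscriptionState;
  occurredAt: number; // event creation time, seconds since epoch
}

interface MirrorRow {
  state: SubscriptionState;
  updatedAt: number;
}

class SubscriptionMirror {
  // In-memory stand-in for the database table.
  private rows: Record<string, MirrorRow> = Object.create(null);

  // The only write path. Returns false when the event is stale and was ignored.
  applyWebhookEvent(event: MirroredEvent): boolean {
    const current: MirrorRow | undefined = this.rows[event.subscriptionId];
    // Deliveries can arrive out of order, so an older event never overwrites newer state.
    if (current !== undefined && current.updatedAt >= event.occurredAt) {
      return false;
    }
    this.rows[event.subscriptionId] = { state: event.state, updatedAt: event.occurredAt };
    return true;
  }

  // Read-only access for the rest of the application.
  stateOf(subscriptionId: string): SubscriptionState | undefined {
    const row: MirrorRow | undefined = this.rows[subscriptionId];
    return row === undefined ? undefined : row.state;
  }
}
```

Because there is no `setState` method, application code has no way to write subscription state except by replaying what the system of record reported.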

That’s four short sections. And it changes everything: the AI no longer has room to improvise. You changed “build a flow” to “implement a policy.”

The Hidden Benefit: It Forces Better Human Decisions

AI doesn’t just expose ambiguity in engineering; it exposes ambiguity in the organization.

Most vague prompts don’t come from laziness; they come from decisions that haven’t been made yet. Teams often haven’t agreed on the correct business behavior, so they hand the uncertainty to the model and hope the implementation “looks right.”

Writing the spec forces clarity early:

- Are refunds allowed? Under what conditions?

- Who owns the retention policy: Legal or Product?

- Which actions must be auditable?

- Which system is the system of record?

These decisions were going to be made anyway, either explicitly by leaders or implicitly by the model. Explicit beats implicit every time.

Anti-Patterns Boards Should Actively Discourage

- “Just build it and we’ll review it later.” It’s appealing because it feels fast. It fails because reviews rarely surface policy-level mistakes until they are forced to.

- “Let the model choose the edge cases.” It’s appealing because it reduces friction. It fails because edge cases are where risk, cost, and regulatory exposure are highest.

- “Tests passed, so it’s right.” It’s appealing because it feels objective. It fails because tests verify what you specified, not what you forgot to specify.

The Deeper Shift: AI Makes Requirements a First-Class Control Surface

AI is no longer just an autocomplete tool; it’s now a collaborator. Collaborators need context, not wishful prose.

Spec-driven development is becoming the operating discipline of AI-era engineering:

- It reduces downstream problems.

- It improves traceability and auditability.

- It keeps product intent aligned with actual system behavior.

- It speeds up implementation without letting probabilistic defaults make policy decisions.

The One Thing That Will Slow Progress Down

Model quality is not the biggest obstacle. The biggest obstacle is organizational reluctance to make hard decisions early, especially ones that cut across Product, Finance, Legal, and Risk.

Writing specs forces alignment, and alignment takes longer than coding. Many organizations will resist it and keep paying the “ambiguity tax” in the form of incidents, rework, and compliance risk.

What Leaders Should Do Now: A Final Thought

If you want AI-assisted engineering to give you a long-lasting edge instead of a short-lived speed boost, switch to spec-first execution.

What you should stop doing:

- Using user stories as the sole requirements for machine execution

- Rewarding speed of output over clarity of intent

- Assuming that code review can make up for a policy that isn’t there

What you should commit to over the next year:

- A standard one-page spec (WHAT/WHO/WHY/HOW) for any feature that touches money, identity, permissions, or the data lifecycle

- Clear ownership of business rules (not “engineering will decide”)

- Design for auditability: trace decisions from policy to spec to code to runtime behavior
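A first step toward treating the one-page spec as a checkable artifact might look like the sketch below. `FeatureSpec` and `missingSections` are hypothetical names, and the check is deliberately crude: it only confirms that each of the four sections contains at least one non-blank rule. It measures presence, not quality, so it complements human review and does not replace it.

```typescript
type Section = "what" | "who" | "why" | "how";

// Hypothetical structured form of the one-page spec.
interface FeatureSpec {
  feature: string;
  what: string[];
  who: string[];
  why: string[];
  how: string[];
}

// Returns the sections that contain no non-blank rules.
function missingSections(spec: FeatureSpec): Section[] {
  const sections: Section[] = ["what", "who", "why", "how"];
  return sections.filter((section) => {
    const rules = spec[section].filter((rule) => rule.trim() !== "");
    return rules.length === 0;
  });
}
```

A check like this could run before a spec is handed to an AI assistant, rejecting any feature whose `missingSections` result is not empty.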

AI will write what you tell it to. The question for leaders is whether your company is giving it a contract or just a hope.

In the age of AI, ambiguity is no longer a planning problem. It’s a production risk. Make “spec quality” a measurable control in your SDLC.
