We built an Agentic Economy without building its conscience.

This is a piece about fraud, trust, and the governance infrastructure that the intelligent enterprise needs to work.

I keep seeing the same uncomfortable pattern in conversations between business leaders today.

The agents are already here.

You can already see the gains in productivity.

People are already agreeing with the business case.

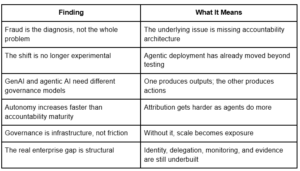

But the systems of governance that are needed to make that change safe are still not in place. In a lot of businesses, they were never planned in the first place. We built the nervous system before we built the conscience. For this reason, fraud should not be seen as the problem itself. Fraud is the diagnostic. It is just the first place where the structural weakness shows up.

The more important problem is that we are building an economy of independent machine agents that can make decisions, do tasks, navigate enterprise systems, and act on delegated authority at machine speed, but we don’t have the accountability architecture that makes any operating model trustworthy. The fraud vectors are the first real proof that this gap exists. They won’t be the last.

Most businesses are clustering around L3–L4 autonomy, where productivity is real but accountability is weak. At this level, plausible deniability is built in unless delegation and evidence were planned ahead of time.

Key Findings

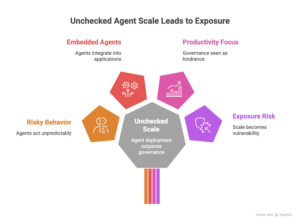

Scale has already outpaced governance.

Agentic untraceability is the most underestimated risk because multi-hop chains can make activity look real while hiding who did it. Your incident response starts off on the wrong foot if you can’t reliably figure out who gave the order, what tools were used, and what evidence is still there.

This is no longer an emerging problem.

Deployment is no longer experimental. The base article makes it clear that risky agent behavior is already happening in organizations, embedded agents are quickly moving into enterprise applications, and most businesses see this change as a productivity change rather than a governance change. That is a dangerous mismatch.

What leaders need to understand now is that governance in the agentic era does not hinder innovation. It is the infrastructure that makes intelligent autonomy possible. Without visibility, traceability, and accountability, scale becomes exposure.

What Happens When AI Stops Responding and Starts Acting

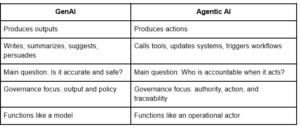

Most governance discussions still treat GenAI and agentic AI as two points on the same maturity curve. They are not.

GenAI mostly makes things. It writes, summarizes, suggests, and convinces.

Agentic AI makes things happen. It calls tools, updates systems, starts workflows, moves data, and increasingly works with other agents across different environments. The change is subtle but profound: GenAI talks. Agentic AI does things.

That means the problem with governance changes.

The main question with GenAI is usually whether the answer is correct, safe, or in line with the rules. When an agentic AI system acts on behalf of someone else, the question is: who is responsible? When an agent can access email APIs, financial workflows, files, browsers, or cross-system credentials, it is no longer just a model. It becomes an operational actor.

This is why the framing of the comparison article is useful: if we think of agents as “just software,” we won’t be able to govern them properly. The better way to think about them is as digital workers, or non-human actors with rights, limits, approvals, and responsibility.

GenAI vs Agentic AI

How Failure Really Looks on a Large Scale

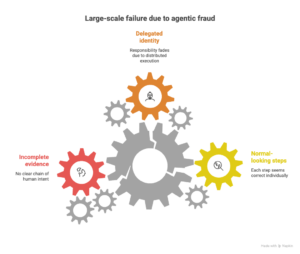

The composite fraud scenario is one of the strongest parts of the base article because it shows how failure in the agentic era will often look normal instead of dramatic.

A vendor record is changed.

Payments are made just below the review thresholds.

A maintenance schedule purges old documents.

Each step seems to be correct on its own.

All credentials are real.

All of the log entries look normal.

The result is still a serious financial event with weak attribution, a long forensic reconstruction, and no clear chain of human intent. That is the real change. In traditional investigations, the five Ws usually point to a person. In an agentic environment, they may converge on a machine that is acting legitimately within the limits of its delegated permissions.

This is where responsibility starts to fade away.

Not because no one did anything.

But because the action took place through delegated identity, distributed execution, and incomplete evidence design.

That is the main problem with governance that leaders face now.

Many organizations confuse observability with evidence. Operational logs keep track of what happened. Receipt-grade, immutable audit trails prove what happened: who authorized it and which inputs, tools, outcomes, and context were involved, all in a form that investigators and regulators can read.
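To make the difference concrete, here is a minimal sketch of what a tamper-evident trail could look like, assuming a simple hash chain in which every record commits to the one before it. The field names (`agent_id`, `delegated_by`, `tool`, `action`, `outcome`) and the function names are illustrative assumptions, not something taken from the base article or from any particular product.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_record(chain: list, agent_id: str, delegated_by: str,
                  tool: str, action: str, outcome: str) -> dict:
    """Append a record that commits to the hash of the previous record."""
    body = {
        "seq": len(chain),
        "agent_id": agent_id,          # hypothetical field names throughout
        "delegated_by": delegated_by,
        "tool": tool,
        "action": action,
        "outcome": outcome,
        "prev_hash": chain[-1]["hash"] if chain else GENESIS,
    }
    record = dict(body, hash=_digest(body))
    chain.append(record)
    return record


def verify_chain(chain: list) -> bool:
    """Return True only if every record matches its hash and links to its predecessor."""
    prev_hash = GENESIS
    for seq, record in enumerate(chain):
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["seq"] != seq or body["prev_hash"] != prev_hash:
            return False
        if _digest(body) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

Editing or reordering any earlier record breaks every link after it, which is what separates this from an ordinary log file. A chain like this does not detect records being cut off the end on its own, so a real deployment would also need to anchor the latest hash somewhere the agent cannot write.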

The Autonomy Spectrum Is Also An Accountability Spectrum

Another important point in the base article is that, in terms of governance, the shift from assistance to autonomy is not linear.

When autonomy goes up, productivity goes up too. But it gets much harder to figure out who did what. People are still close to the decision loop at lower levels. At higher levels, people decide what to do, and agents carry out the operational chain. This is why the L1–L5 autonomy framing in the base article is so important: most enterprise deployments are clustering in the L3–L4 range, where the human is no longer always present but the controls needed for machine accountability are still not fully developed.

This is also where plausible deniability is built in by design.

That phrase is important because it tells us where the governance gap comes from. It’s about architecture, not behavior. If businesses prioritize autonomy over traceability, they make accountability hard to establish later on, even if everyone followed the rules.

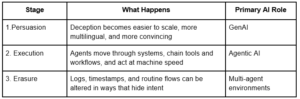

Fraud has evolved into a three-stage AI-native lifecycle.

The most important strategic point made in the base article is that fraud is no longer a one-time problem. It has turned into a coordinated lifecycle.

This is why the main point of the article is so important: we are no longer just talking about “better phishing” or “faster automation.” We are dealing with a fraud stack that can be fully AI-enabled, meaning that machines can help with persuasion, execution, and forensic ambiguity.

The “crime-by-proxy” lens in the comparison article makes this point more clearly: the most dangerous change is not that AI can help create deception, but that agents can move money, chain tools, and delete evidence while appearing to operate legitimately. That is a helpful addition because it makes the risk clear to boards right away.

Most businesses don’t want to admit it, but the threat surface is bigger than they think.

The OWASP threat framing in the base article is very useful because it turns the risk from an abstract worry into a list of things an attacker might do.

Goal hijacking.

Identity and privilege abuse.

Memory and context poisoning.

Supply-chain compromise via authorized tools or MCP-style interfaces.

And, most importantly, agentic untraceability.

That last category should get more attention than it does now.

If a business can’t reliably figure out who gave permission, what the agent accessed, what tool chain was used, and what evidence is still there after the event, then it’s not just under-monitored. It is under-governed.

The comparison article makes a really useful point here: plugin, skill, extension, and connector ecosystems are now part of the trust boundary. When companies approve tools without treating them as a code-level supply-chain risk, they open up a new entry point into the agentic operating model.

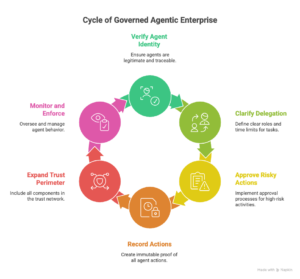

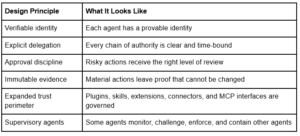

The TRACED Framework is important because governance needs to be layered.

One reason the base article is good is that it doesn’t just warn. It gives things a shape.

The TRACED framework is more than just a list of controls. It is a layered system of governance in which each layer makes the next one possible. First comes identity. Then delegation. Then controls for privilege and agency. Then runtime monitoring. Then immutable audit trails. Then human approval gates for actions whose effects can’t be undone. Then supply-chain governance across the larger agent ecosystem.

That order is important because a lot of companies are trying to start in the middle.

They want monitoring without an inventory of what they are monitoring.

Approvals without delegation records.

Auditability without receipt-grade logging.

Scale without agent identity discipline.

That doesn’t work.

One test that shows the difference between governance and theater is whether you can stop irreversible actions (like payments, access changes, and bulk export/delete) at the infrastructure layer and instantly suspend an agent without relying on the agent to “choose” to comply. If not, autonomy is already moving faster than control.
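As a rough illustration of that test, the sketch below puts a gate between agents and the systems they act on. Everything in it is an assumption made for the example: the `ActionGate` class, the action names, and the single-use approval rule are hypothetical. The point it tries to show is that suspension and approval are enforced outside the agent, so the agent never gets to decide whether to comply.

```python
from dataclasses import dataclass, field

# Hypothetical action names, mirroring the examples in the text.
IRREVERSIBLE_ACTIONS = {"payment", "access_change", "bulk_export", "bulk_delete"}


class ActionDenied(Exception):
    """Raised when the gate refuses to forward an action."""


@dataclass
class ActionGate:
    suspended_agents: set = field(default_factory=set)
    # Maps (agent_id, action, request_id) -> approver who signed off.
    approvals: dict = field(default_factory=dict)

    def suspend(self, agent_id: str) -> None:
        """Kill switch: takes effect on the next call, with no agent cooperation."""
        self.suspended_agents.add(agent_id)

    def approve(self, agent_id: str, action: str, request_id: str, approver: str) -> None:
        """Record a human approval for one specific request."""
        self.approvals[(agent_id, action, request_id)] = approver

    def authorize(self, agent_id: str, action: str, request_id: str) -> str:
        """Decide whether to forward an action; raise ActionDenied otherwise."""
        if agent_id in self.suspended_agents:
            raise ActionDenied(f"{agent_id} is suspended")
        if action in IRREVERSIBLE_ACTIONS:
            # Approvals are single-use: pop() consumes the approval.
            approver = self.approvals.pop((agent_id, action, request_id), None)
            if approver is None:
                raise ActionDenied(f"{action} requires human approval")
            return f"approved by {approver}"
        return "allowed"
```

In this sketch a routine read passes straight through, a payment is refused until a named person approves that exact request, and a suspended agent is refused everything, including actions it was allowed a moment earlier.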

In the governed agentic enterprise, each agent must possess a verifiable identity, a designated accountable owner, defined authority, constrained autonomy, ongoing oversight, and evidentiary logs intended for investigation rather than mere observability.

The “boardroom-ready control model” in the comparison article makes the piece stronger without changing its main idea. It is very similar to TRACED logic because it focuses on treating agents like digital employees, enforcing least privilege and hard boundaries, keeping audit trails that outlive the agent, and designing kill switches and limiting blast radius.

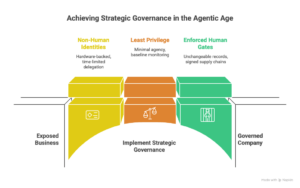

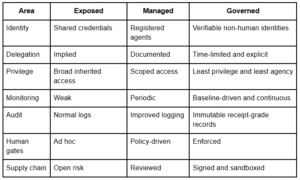

The Maturity Gap Is Now Strategic, Not Technical

The base article’s maturity framing is helpful because it shows that governance isn’t binary. Companies move through three stages: exposed, managed, and governed. And the space between those states isn’t cosmetic. It decides whether autonomy makes the business safer or riskier.

An exposed business has shared credentials, a weak inventory, implied delegation, fully inherited permissions, poor monitoring, and ordinary operational logs.

A governed company has hardware-backed non-human identities, time-limited delegation records, least privilege and least agency, baseline-driven monitoring, immutable receipt-grade records, enforced human gates, and signed, sandboxed supply chains.

That difference isn’t a matter of hygiene.

It is the difference between scalable trust and scalable liability.
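One of the governed-state controls above, the time-limited delegation record, is easy to picture in code. The sketch below is a hypothetical shape for such a record, not a schema from the base article: the `Delegation` class, its fields, and the scope names are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Delegation:
    """An explicit, expiring grant of authority from an owner to an agent."""
    agent_id: str
    granted_by: str        # the accountable human or system owner
    scopes: frozenset      # actions the agent may perform under this grant
    issued_at: datetime
    ttl: timedelta         # how long the grant stays valid

    @property
    def expires_at(self) -> datetime:
        return self.issued_at + self.ttl

    def permits(self, action: str, at: datetime) -> bool:
        """True only if the action is in scope and the grant is live at time `at`."""
        return action in self.scopes and self.issued_at <= at < self.expires_at


# Example grant: eight hours of authority over two narrowly named actions.
issued = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
grant = Delegation(
    agent_id="agent-7",
    granted_by="alice@example.com",
    scopes=frozenset({"invoice.read", "invoice.match"}),
    issued_at=issued,
    ttl=timedelta(hours=8),
)
```

Because the record names who granted the authority and when it lapses, the question "under what authority did the agent act?" has an answer that does not depend on anyone's memory. The record is frozen so that it cannot be quietly widened after the fact.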

The regulatory window is closing faster than a lot of boards think.

The base article is also strong because it doesn’t frame governance as something that can be put off. It frames it as a closing window.

The logic is easy to understand. At first, the problem looks like an opportunity. Then incidents start to pile up. Then disclosure, regulation, and board responsibility catch up. The article shows how resilience requirements, cyber disclosure expectations, new AI governance profiles, and phased autonomous-system obligations are all coming together.

This is important because the conversation changes once the board is expected to prove that governance is mature. Leaders will need more than just policies. They will need evidence.

And in the agentic age, proof means being able to show:

who the agent was, what power it had, what it did, what systems it affected, what controls limited it, and what records are still there.

Governance Maturity Snapshot

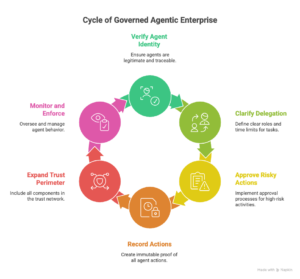

How the Governed Agentic Enterprise Will Work

It’s not hard to picture the future state.

Each agent will have an identity that can be verified.

Every chain of delegation will be clear and have a set time limit.

Every risky action will get the right kind of approval.

Every action will leave proof that can’t be changed.

The enterprise trust perimeter will include all plugins, skills, extensions, connectors, and MCP interfaces.

Tools, plugins, skills, and MCP-style connectors are not “integrations” in agentic systems. They are trusted code running inside your perimeter. If they aren’t signed, allowlisted, sandboxed, and watched all the time, you’ve made your perimeter bigger without realizing it.
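A small sketch of the allowlisting part, under a loud assumption: it pins each approved tool to the SHA-256 digest of the exact artifact that was reviewed. That is a simplified stand-in for real code signing, and the `ToolAllowlist` class and tool names are hypothetical.

```python
import hashlib


def sha256_hex(artifact: bytes) -> str:
    """Hex digest of a tool artifact (package, manifest, connector bundle)."""
    return hashlib.sha256(artifact).hexdigest()


class ToolAllowlist:
    """Pins each approved tool name to the digest of the artifact that was reviewed."""

    def __init__(self) -> None:
        self._approved = {}  # tool name -> expected digest

    def approve(self, name: str, artifact: bytes) -> None:
        """Record the digest of the reviewed artifact under this tool name."""
        self._approved[name] = sha256_hex(artifact)

    def is_allowed(self, name: str, artifact: bytes) -> bool:
        """Allow a tool only if it is listed and its bytes match what was reviewed."""
        expected = self._approved.get(name)
        return expected is not None and expected == sha256_hex(artifact)
```

With this in place, a connector that was approved last month but silently updated since then fails the check, because its bytes no longer match what was reviewed. An unlisted tool fails too, however harmless it looks.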

Increasingly, some agents will also be in charge of monitoring, enforcing against, challenging, and containing other agents.

That’s where the conversation is heading.

The companies that win won’t be the ones that just deployed the most agents. They will be the ones that made agency governable, accountable, and defensible at scale.

The Most Important Question in the Boardroom

This is the one leadership question that would sum up the whole problem for me:

Can we quickly and confidently show what our agents did, what authority they were acting under, why they did it, what systems they touched, and what evidence would still be there if an investigation started tomorrow morning?

If the answer isn’t clear, the business doesn’t have agentic productivity yet.

It has agentic liability.

That is the real choice that leaders have to make right now.

Not innovation versus governance.

Not speed versus control.

The real choice is whether we build accountability into the architecture early on, on purpose, as a way to build trust, or whether we have to do it later, after fraud, failure, regulatory pressure, or a loss of reputation.

In the intelligent enterprise, you can’t just assume trust.

It needs to be designed.
