PART 2/2 — Banking & Insurance Strategic Trends (2026–2028)

Continuation from Part 1: the consequences + the rebuild

Part 1 ended at the point where the “New Buyer” becomes architecture: transactions increasingly originate via agents and platforms, and embedded finance hardens into regulated distribution.

Part 2 is what happens next: deposit displacement, the new insurance product stack, prevention as a fee economy, fraud/scams at industrial scale, cryptographic trust and provenance, machine identity governance—and the invisible infrastructure required to run BFSI as a governed execution engine.

META FORCE IV — THE NEW BUYER (2026–2028)

Trend #19: BNPL, Digital Wallets & Deposit Displacement

The customer is not “leaving the bank.” The customer is routing around it. In 2026–2028, the most damaging shift isn’t that consumers like wallets or BNPL—it’s that these tools capture the transaction moment and progressively displace deposits, interchange, and relationship primacy. The bank still holds accounts, but the default spend interface becomes a wallet, a platform checkout, or an installment plan—often with limited visibility into true consumer leverage.

The shift is from bank-led payment + credit rails to platform-led spend + parallel credit, with banks increasingly relegated to background funding.

Three forces converge here:

- Digital wallets are becoming the dominant consumer interface. Forecasts and industry reporting show wallets taking an ever-larger share of commerce; e.g., FIS Global Payments data summarized in 2026 analyses points to mobile wallets comprising ~54% of global e-commerce in 2023, expected to rise toward ~61% by 2026. (cashfree.com) This is how deposit displacement starts: not with account closures, but with where transactions originate.

- BNPL keeps scaling, and it creates a parallel credit picture. The Richmond Fed estimates BNPL reached ~$70B in U.S. transactions in 2025; while still small relative to total card spend, it’s large enough to distort credit visibility and consumer behavior at the margin. (Richmond Fed Economic Brief, 2026) Reuters highlights the transparency gap: many BNPL loans still aren’t reported to credit bureaus, raising concerns about oversight and consumer protection. (Reuters, Sep 10 2025)

- Younger cohorts are training themselves on installments and wallets as defaults. J.D. Power survey reporting shows 44% of Gen Z used BNPL in 2024 (with 50% using credit cards), confirming BNPL is not niche for younger spenders; it’s a normal behavior pattern. (Payments Dive, Feb 27 2025) Morgan Stanley’s fintech research warns BNPL can become a “masking force” that hides true consumer credit quality if reporting and scoring don’t catch up. (Morgan Stanley, Jun 9 2025)

Business impact

- Cost & efficiency: servicing and dispute volumes rise when transactions originate outside bank-controlled UX; resolution becomes multi-party.

- Speed & adaptability: product iteration must move at platform cadence—wallet integrations, installment offers, and dynamic risk controls.

- Risk & resilience: credit invisibility grows; underwriting and collections models become less predictive without shared BNPL visibility; scams exploit faster checkout flows. (Morgan Stanley, Reuters)

- Revenue & differentiation: interchange and revolving interest economics come under pressure; loyalty shifts to whoever owns the “spend moment” (wallet/platform).

CXO CTAs

- Treat wallets as a distribution layer, not a competitor: win by being the best-funded, best-risk-managed option inside the wallet (tokenized credentials, seamless auth, instant provisioning).

- Build “installments as a native capability”: don’t let BNPL sit outside the bank; integrate installment offers into card and account journeys with clearer disclosures and safer affordability logic.

- Close the credit-visibility gap: participate in bureau/consortium reporting efforts and incorporate alternative signals so BNPL doesn’t become a blind spot in credit risk. (Morgan Stanley, Richmond Fed)

- Redesign deposit strategy around “primary spend interface”: measure which customers route spend through wallets/platforms and build retention mechanics (benefits, pay-day liquidity tools, context-aware offers).

- Instrument “deposit displacement” as a board metric: wallet-originated spend share, lost interchange, revolver conversion rate, and relationship dilution.
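To make the board metric concrete, here is a minimal Python sketch of two of the measures above: wallet-originated spend share and an estimate of lost interchange. The `Txn` fields, the channel labels, and the flat `assumed_interchange_rate` are hypothetical placeholders, not industry figures; real interchange varies by network, region, and card type.

```python
from dataclasses import dataclass

# Hypothetical transaction record; field names are illustrative only.
@dataclass
class Txn:
    amount: float          # spend amount in account currency
    channel: str           # e.g. "wallet", "card_present", "bnpl"
    bank_credential: bool  # True if the bank's own card/account funded the spend

def displacement_metrics(txns: list, assumed_interchange_rate: float) -> dict:
    """Illustrative deposit-displacement indicators.

    assumed_interchange_rate is a placeholder; substitute your own blended rate.
    """
    total = sum(t.amount for t in txns)
    if total == 0:
        return {"wallet_spend_share": 0.0, "non_bank_funded_share": 0.0,
                "est_lost_interchange": 0.0}
    wallet_spend = sum(t.amount for t in txns if t.channel == "wallet")
    # Spend routed through credentials the bank does not issue (displaced spend)
    non_bank_spend = sum(t.amount for t in txns if not t.bank_credential)
    return {
        "wallet_spend_share": wallet_spend / total,
        "non_bank_funded_share": non_bank_spend / total,
        # Interchange the bank would have earned had it funded that spend
        "est_lost_interchange": non_bank_spend * assumed_interchange_rate,
    }

sample = [
    Txn(100.0, "wallet", True),        # wallet, funded by the bank's card
    Txn(50.0, "wallet", False),        # wallet, funded by a non-bank balance
    Txn(50.0, "bnpl", False),          # third-party installment plan
    Txn(200.0, "card_present", True),  # bank card used directly
]
print(displacement_metrics(sample, assumed_interchange_rate=0.015))
```

In this sample most wallet spend is still funded by the bank's own card, so wallet share (0.375) overstates displacement compared with the non-bank-funded share (0.25); tracking both keeps "the wallet" and "the lost relationship" from being conflated.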

META FORCE IV — THE NEW BUYER (2026–2028)

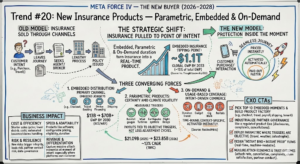

Trend #20: New Insurance Products — Parametric, Embedded & On-Demand

Insurance is being pulled to the point of intent. In the old model, customers left the moment (purchase, travel booking, device checkout, shipment, weather event) to go buy coverage. In the new model, protection shows up inside the moment—priced instantly, activated automatically, and paid out faster when triggers are objective. That’s why embedded insurance is approaching a tipping point: forecasts now put it at ~$1.1T in global GWP by 2033 (~15% of total GWP). (Insurance Thought Leadership summary of Embedded Insurance Observatory)

The shift is from policies sold through channels to coverage embedded into journeys—with parametric triggers and on-demand duration turning insurance into a real-time product.

Three forces converge here:

- Embedded distribution is becoming a primary channel, not an add-on. Global commentary (citing BCG) projects embedded insurance growing from ~$13B to $70B+ GWP by 2030: a channel rewrite, not incremental growth. (World Economic Forum) The 2033 “$1.1T / 15%” forecast comes from a different source, so the figures are not directly comparable, but it reinforces that embedded is moving from edge cases to mainstream. (Insurance Thought Leadership)

- Parametric products are scaling because climate volatility demands certainty. Parametric insurance is growing as buyers value speed and predictability: payouts are tied to measurable triggers (rainfall, wind speed, earthquake intensity, flight delay), not loss adjustment cycles. Market estimates show parametric expanding rapidly (e.g., from $21.09B in 2025 to $23.85B in 2026, ~13% growth in a single year, in one widely cited forecast). (The Business Research Company, Parametric Insurance 2026)

- On-demand and usage-based coverage fits “intent-driven” commerce. On-demand insurance remains smaller than embedded, but it matters because it normalizes the idea that coverage is time-bound, context-specific, and activated instantly (micro-duration travel, gadget, gig work, rentals). (ResearchAndMarkets, On Demand Insurance)
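A parametric payout is a deterministic function of an observed index, which is what makes it fast and also what creates basis risk. The sketch below assumes a hypothetical tiered wind-speed trigger (the thresholds and payout percentages are invented for illustration, not a market standard) and measures basis risk as the gap between payout and actual loss.

```python
from bisect import bisect_right

# Hypothetical tiered trigger: (minimum sustained wind speed in km/h, payout as share of limit).
WIND_TIERS = [(120, 0.25), (150, 0.50), (180, 1.00)]

def parametric_payout(observed_kmh: float, limit: float) -> float:
    """Payout implied by the observed index alone; no loss adjustment involved."""
    thresholds = [t for t, _ in WIND_TIERS]
    # Number of thresholds at or below the observation (a reading equal to a threshold triggers it)
    idx = bisect_right(thresholds, observed_kmh)
    if idx == 0:
        return 0.0  # trigger not met
    return WIND_TIERS[idx - 1][1] * limit

def basis_risk(payout: float, actual_loss: float) -> float:
    """Positive: payout exceeds loss; negative: loss the policyholder still bears."""
    return payout - actual_loss

payout = parametric_payout(155.0, limit=1_000_000)
print(payout, basis_risk(payout, actual_loss=620_000.0))
```

Here a 155 km/h reading pays half the limit (500,000) with no claims adjustment, while an assessed loss of 620,000 leaves a 120,000 shortfall. That gap is the basis risk a parametric product has to explain before the event, not after it.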

Business impact

- Cost & efficiency: shorter sales cycles; lower distribution costs; automated issuance; and lower claims handling where parametric triggers enable faster settlement.

- Speed & adaptability: products can be launched and iterated like software—pricing, eligibility, bundling, and coverage duration become configurable.

- Risk & resilience: new risks emerge—trigger integrity, basis risk (parametric payout vs actual loss), partner conduct risk in embedded journeys, and data/consent governance.

- Revenue & differentiation: insurers (and bancassurers) that win distribution at the moment of intent will capture massive attach economics—especially where platforms own the customer journey.

CXO CTAs

- Pick your “top 10 embedded moments” (checkout, travel, SME payroll, shipping, device financing, tenant onboarding) and build a repeatable embedded product factory around them. (Embedded scale context: WEF, Insurance Thought Leadership)

- Industrialize partner governance like a regulated product line: disclosures, complaints, cancellations, and claims support must be controllable even when the UX sits with a platform.

- Deploy parametric where triggers are objective and measurable: start with domains where basis risk can be explained and accepted (travel delay, weather-indexed agri/SME, catastrophe layers). (Market context: TBRC)

- Design “instant bind + instant proof” flows: policy issuance and coverage proof must be real-time and machine-readable for platforms and agents.

- Measure attach economics and trust outcomes: attach rate, cancellation rate, complaints per 10k binds, claim satisfaction, and partner conduct indicators—because embedded growth without trust becomes regulatory drag.

META FORCE IV — THE NEW BUYER (2026–2028)

Trend #21: Insurance Predict-and-Prevent Fee Economy

Insurance is being pulled from “payout after loss” into “paid prevention before loss.” In 2026–2028, the most strategic insurer shift isn’t a new product wrapper—it’s a business model rewrite: from underwriting risk to operating risk outcomes. The insurer that wins is the one that can credibly sell risk reduction as a subscription / managed service—with measurable impact and provable accountability—whether or not a policy is attached.

The shift is from premium-for-transfer to fee-for-prevention, with outcomes you can audit.

For decades, insurers monetized the moment of uncertainty: price the probability, collect premium, pay if the event happens. But continuous data (IoT, telematics, cyber telemetry), agentic operations, and platform distribution change the economics:

- If you can detect risk early, you can intervene cheaply.

- If you can intervene at scale, you can monetize prevention as a service.

- If you can prove intervention effectiveness, regulators and enterprise buyers will treat it as an operational control, not “nice-to-have advice.”

This creates a new category: Predict-and-Prevent, meaning risk services sold as recurring fees, embedded in ecosystems (OEMs, smart home, SME platforms, cyber stacks), and measured like operational performance.

Three forces converge here:

- Buyers are shifting spend from “coverage” to “control.” Enterprises and households are increasingly willing to pay for risk management that avoids disruption, not just indemnification after the fact. This is showing up across:

- Data + automation make “intervention at scale” operationally feasible. Prevention used to be advisory-heavy and labor-bound. Now it’s becoming an execution loop: sense → score → intervene → verify → document. When interventions can be executed by workflows (and agents) with evidentiary trails, prevention becomes repeatable and auditable, and therefore sellable.

- The industry can no longer afford pure loss-cost exposure in volatile risk domains. Nat-cat volatility, cyber frequency, medical inflation, and liability expansion make “transfer-only” economics fragile. Prevention becomes the only scalable lever that improves loss ratio stability, retention (because you’re actively helping), and partner attach (because prevention fits ecosystems). Critically, platform-originated insurance (Trend #20) wants prevention features because platforms want fewer disputes, fewer refunds, and fewer “customer harm” escalations.
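The sense → score → intervene → verify → document loop can be sketched as a small pipeline that leaves an evidence record at every step. Everything here is hypothetical: the water-leak scenario, the `flow_lpm` and `occupied` fields, the scoring rule, and the `close_valve` action stand in for whatever telemetry, model, and intervention a real program uses.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

@dataclass
class EvidenceLog:
    """Append-only list of (UTC timestamp, step, detail) entries for audit."""
    entries: list = field(default_factory=list)

    def record(self, step: str, detail: dict) -> None:
        self.entries.append((datetime.now(timezone.utc).isoformat(), step, detail))

def score(reading: dict) -> float:
    # Hypothetical rule: sustained water flow in an unoccupied home suggests a leak.
    return 0.9 if reading["flow_lpm"] > 5 and not reading["occupied"] else 0.1

def prevention_cycle(reading: dict, intervene: Callable[[], dict],
                     log: EvidenceLog, threshold: float = 0.8) -> str:
    """Run one sense -> score -> intervene -> verify -> document pass."""
    log.record("sense", reading)
    risk = score(reading)
    log.record("score", {"risk": risk, "threshold": threshold})
    if risk < threshold:
        log.record("document", {"outcome": "no_action"})
        return "no_action"
    # `intervene` stands in for a device or partner call (e.g. closing a smart valve)
    # and returns a fresh reading taken after the action.
    after = intervene()
    log.record("intervene", {"action": "close_valve"})
    verified = after["flow_lpm"] == 0
    log.record("verify", {"flow_lpm_after": after["flow_lpm"], "verified": verified})
    outcome = "mitigated" if verified else "escalate_to_human"
    log.record("document", {"outcome": outcome})
    return outcome

log = EvidenceLog()
result = prevention_cycle({"flow_lpm": 12.0, "occupied": False},
                          intervene=lambda: {"flow_lpm": 0.0, "occupied": False},
                          log=log)
print(result, [step for _, step, _ in log.entries])
```

Each run leaves a timestamped trail per step, which is the raw material for auditable outcome metrics such as time-to-detect and time-to-mitigate. A failed verification escalates to a human rather than silently closing the loop.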

Business impact

- Cost & efficiency: Lower loss cost through measurable risk reduction (fewer and less severe incidents). Lower claims handling costs via earlier detection, faster triage, and fewer “gray area” disputes.

- Speed & adaptability: Product iteration becomes service iteration; new prevention “modules” ship faster than new policy forms. You can deploy prevention into new segments (SMB, gig, micro-fleet, renters) without re-architecting the whole underwriting stack.

- Risk, trust & resilience: Selling “prevention” creates a new risk surface, because you become implicitly accountable for performance and transparency. The winners build outcome measurement + auditability into prevention services from day one (not after the first lawsuit or regulator inquiry).

- Revenue & differentiation: Recurring fee revenue that is less capital-intensive than pure underwriting growth. Expansion beyond policyholders into “risk services for non-clients” (the Gallagher signal: a material share of revenue from non-brokerage / non-traditional services).

CXO CTAs (2026–2028 actions)

- Decide your prevention thesis by line. Pick 2–3 domains where you can plausibly deliver measurable outcomes (home water/fire, auto safety, SME cyber, worker safety, health/wellness adherence). Don’t boil the ocean.

- Productize prevention as a priced SKU on day one. Free “risk advice” kills the market signal. Price it like managed services: tiered packages, SLAs, outcomes reporting.

- Build an outcome measurement spine. Define auditable metrics (incident frequency, severity, time-to-detect, time-to-mitigate, loss avoided) and standardize reporting. If you can’t prove impact, you can’t defend pricing.

- Separate prevention governance from underwriting governance, then connect them. Prevention needs an operating model (service delivery, partner ops, escalations, telemetry, evidence). Underwriting keeps its own governance model. Connect them through shared economics: loss ratio benefit + fee margin.

- Turn prevention into an ecosystem attach strategy. Embed prevention “features” into OEMs, smart-home platforms, payroll/HR stacks, and SME SaaS, so distribution becomes continuous, not campaign-based.

Trend-specific leadership prompt

If prevention becomes a subscription business, who owns the P&L—and who is accountable when your “risk reduction” claim is challenged in audit, court, or the regulator’s office?

Sub-trends

21a. Fee-based risk management expansion (the new recurring revenue pool; Deloitte growth signal).

21b. “Promise to prevent” operating model: proactive loss mitigation via IoT, smart home, predictive analytics, and cyber telemetry; interventions, not advice.

21c. Non-client fee revenue: sell risk services beyond policyholders (Gallagher-style model), turning risk expertise into a platform revenue line.

META FORCE IV — THE NEW BUYER (2026–2028)

Trend #22: IoT, Telematics & Connected Insurance Ecosystems

Insurance is becoming a continuous relationship, not an annual renewal. In 2026–2028, IoT and telematics move from “data enrichment” to product architecture: continuous signals reshape underwriting, servicing, claims, and prevention. The winners won’t just use device data—they’ll orchestrate connected ecosystems (OEMs, smart home, health platforms, SMB SaaS) where insurance is embedded, prevention is automated, and pricing updates with exposure in near real time.

The shift is from periodic actuarial cycles to continuous underwriting, inside ecosystems that can act.

Traditional insurance decides at moments: quote, bind, renew, claim. Connected telemetry breaks that cadence. Risk becomes observable continuously (driving behavior, property conditions, equipment status, health signals, cyber posture), which enables a new operating loop: sense → score → intervene → adjust price/terms → verify → document. This is where IoT/telematics stops being “analytics” and becomes execution infrastructure. The product is no longer just a policy; it’s a governed, always-on control loop that can reduce loss cost and improve retention if it is trusted, consented, and operationally scalable.
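The "adjust price/terms" step is where connected insurance meets consent and explainability. A minimal sketch, assuming a hypothetical telematics score between 0 and 1, a set of consented purposes per customer, and an invented 10% cap on any single mid-term change: the adjustment applies only with pricing consent, stays bounded, and carries a plain-language reason the customer can contest.

```python
from dataclasses import dataclass

@dataclass
class Adjustment:
    new_premium: float
    applied: bool
    reason: str  # plain-language explanation the customer can contest

def midterm_adjustment(base_premium: float, risk_score: float, consents: set,
                       max_change: float = 0.10) -> Adjustment:
    """Bounded, consent-gated mid-term premium change.

    risk_score: hypothetical telematics score, 0.0 (lowest risk) to 1.0 (highest).
    consents: purposes the customer agreed to, e.g. {"pricing", "coaching"}.
    max_change: illustrative cap on any single mid-term change (10%).
    """
    # Purpose limitation: telemetry may inform price only with explicit pricing consent.
    if "pricing" not in consents:
        return Adjustment(base_premium, False, "No pricing consent; premium unchanged.")
    # Map the score linearly around a neutral 0.5, then clamp to the cap.
    raw_change = (risk_score - 0.5) * 2 * max_change
    change = max(-max_change, min(max_change, raw_change))
    new_premium = round(base_premium * (1 + change), 2)
    if change > 0:
        direction = "increase"
    elif change < 0:
        direction = "decrease"
    else:
        direction = "change"
    reason = f"Telematics score {risk_score:.2f} produced a {abs(change):.0%} {direction}."
    return Adjustment(new_premium, True, reason)

print(midterm_adjustment(1000.0, 0.25, {"pricing"}))
print(midterm_adjustment(1000.0, 0.25, {"coaching"}))
```

The clamp is a design choice: even a faulty or spoofed score cannot move the premium by more than the cap, which limits customer harm while disputes are resolved.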

Three forces converge here:

- The ecosystem now originates the risk signal, and increasingly the customer. OEMs, smart-home platforms, device manufacturers, health ecosystems, and SMB platforms are becoming the default layer where telemetry lives and customer engagement happens. Insurance distribution and service increasingly follow those ecosystems rather than traditional agent/channel pathways. (Ecosystem / embedded trajectory context: WEF platform & insurance discussions; broader digital ecosystems in financial services.) https://www.weforum.org/agenda/archive/insurance/ https://www.weforum.org/agenda/archive/financial-and-monetary-systems/

- Telematics economics are shifting from “discount programs” to loss-cost control. Usage-based insurance (UBI) started as a pricing/marketing lever. In 2026–2028 it becomes a claims and prevention lever: coaching, alerts, automated interventions, and ecosystem-integrated services (repairs, roadside, smart-home mitigation). This ties directly to Trend #21 (Predict-and-Prevent): connected telemetry makes prevention operationally feasible. (Insurance tech transformation context: Capgemini insurance research and industry reports.) https://www.capgemini.com/insights/research-library/world-insurance-report/ https://www.capgemini.com/industries/financial-services/insurance/

- Consent, privacy, and “data truth” become product constraints, not compliance footnotes. Connected insurance fails when customers don’t trust data usage or can’t understand price/decision changes. Regulators and consumer advocates increasingly focus on transparency, fairness, and explainability in data-driven products. The winners treat consent, purpose limitation, and dispute handling as core product capabilities, especially as AI agents start mediating customer interactions and disputes. (Privacy-by-design and consent governance baseline patterns.) https://ico.org.uk/for-organisations/ https://gdpr.eu/what-is-gdpr/

Business impact

- Cost & efficiency: Loss-cost reduction becomes structural, not episodic. Early-warning and intervention reduce frequency/severity (leaks, fire risk, unsafe driving, equipment failure). Claims triage accelerates with telemetry-backed evidence, shrinking investigation cycles and disputes.

- Speed & adaptability: Pricing and underwriting move at the speed of exposure change. Continuous underwriting enables mid-term adjustments, dynamic endorsements, and real-time risk segmentation. New product shapes emerge: micro-duration coverage, usage bundles, ecosystem-triggered activation.

- Risk & resilience: A new risk surface appears: data integrity, model fairness, and dispute economics. Sensor spoofing, missing data, vendor dependency, and biased proxies can create customer harm and regulatory exposure. Strong provenance, audit trails, and explainable decisioning become mandatory as products become more automated.

- Revenue & differentiation: The insurer becomes an ecosystem partner—or gets disintermediated. Ecosystem placement drives “always-on” distribution and attach economics. Prevention services + connected experiences increase retention and justify fee-based value (ties to Trend #21).

CXO CTAs

- Pick your ecosystem battlegrounds (and be explicit about the role). Choose 2–3 ecosystems where you can win (auto OEM/fleet, smart home/property, health/wellness, SMB platforms). Decide whether you are manufacturer, distributor, or orchestrator (ties to Trend #11 platform boundary wars).

- Build a “Connected Product Factory,” not one-off pilots. Standardize device onboarding, telemetry ingestion, consent capture, pricing hooks, intervention workflows, and dispute evidence, so each new partner doesn’t become bespoke engineering.

- Treat consent + transparency as conversion infrastructure. Make it simple for customers to understand what data is collected, why, how it affects price, and how to contest decisions. Design “dispute-ready” evidence flows from day one.

- Operationalize intervention, not just scoring. Risk scores without action don’t change economics. Build intervention playbooks (alerts, partner dispatch, preventive services) with SLAs and measurable outcomes (loss-avoided proxies).

- Design for partner dependency risk and data truth. Telemetry supply chains create concentration and integrity risk. Contract for audit rights, uptime, data quality SLAs, and portability. Implement provenance and anomaly detection to defend against spoofing and manipulation.
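For provenance and anomaly detection on telemetry, one common pattern is for the device to sign each reading and for the insurer to reject readings that fail the signature check or basic physical plausibility. This sketch uses Python's standard `hmac` module with a shared per-device key purely for illustration (production devices more often hold asymmetric keys in secure hardware); the 250 km/h ceiling and the `ts`/`speed_kmh` fields are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Optional

MAX_PLAUSIBLE_KMH = 250  # hypothetical plausibility ceiling for a private car

def sign_reading(reading: dict, device_key: bytes) -> str:
    """Device side: HMAC-SHA256 over canonical JSON (sorted keys) of the reading."""
    payload = json.dumps(reading, sort_keys=True).encode()
    return hmac.new(device_key, payload, hashlib.sha256).hexdigest()

def check_reading(reading: dict, signature: str, device_key: bytes,
                  prev: Optional[dict] = None) -> list:
    """Insurer side: return integrity problems; an empty list means accept."""
    problems = []
    # Constant-time comparison avoids leaking how much of the signature matched
    if not hmac.compare_digest(sign_reading(reading, device_key), signature):
        problems.append("signature_mismatch")       # altered, or not from this device
    if reading["speed_kmh"] > MAX_PLAUSIBLE_KMH:
        problems.append("implausible_speed")        # physically unlikely value
    if prev is not None and reading["ts"] <= prev["ts"]:
        problems.append("non_monotonic_timestamp")  # possible replay or reordering
    return problems

key = b"device-123-secret"  # hypothetical per-device key
r1 = {"ts": 100, "speed_kmh": 80}
r2 = {"ts": 90, "speed_kmh": 300}
print(check_reading(r1, sign_reading(r1, key), key))           # -> []
print(check_reading(r2, sign_reading(r2, key), key, prev=r1))  # -> ['implausible_speed', 'non_monotonic_timestamp']
```

Returning a list of named problems rather than a single boolean gives the dispute file a reason code for every rejected reading.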

Trend-specific CTA

If your future underwriting depends on ecosystem telemetry, who owns “data truth” in a dispute—and can you prove it fast enough to avoid customer harm, regulator escalation, and loss creep?

META FORCE V — RESILIENCE UNDER SIEGE (2026–2028)

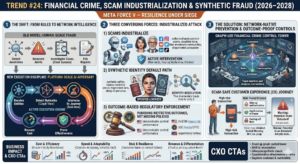

Trend #24: Financial Crime, Scam Industrialization & Synthetic Fraud

Fraud is no longer a “loss line.” It’s an industrial adversary operating at platform scale. In 2026–2028, financial crime shifts from episodic bad actors to organized, AI-assisted production systems: scam supply chains, mule networks, synthetic identities, deepfake-enabled impersonation, and bot-driven account opening. Regulators are also tightening the lens: they are increasingly punishing ineffective outcomes, not just missing policies. The winners won’t be the firms with the most controls—they’ll be the ones that run real-time, graph-driven prevention with provable effectiveness.

The shift is from rules and case queues to network intelligence and outcome-proof controls.

Traditional financial crime programs were designed for human-scale fraud: detect anomalies, generate alerts, investigate cases, file reports. That model collapses when adversaries operate like software: spinning up identities, rotating devices, renting mule accounts, and tuning their tactics faster than your model validation cycles. In this era, financial crime becomes an execution discipline: resolve entities → detect networks → intervene in-journey → recover fast → prove effectiveness. The control objective also changes: not “more alerts,” but less customer harm (authorized push-payment scams, impersonation, account takeover) and fewer control failures under supervisory review.
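The "resolve entities → detect networks" step can be illustrated with a union-find over shared identifiers: accounts that share a device, phone number, or similar link collapse into one cluster, and unusually large clusters become candidates for mule-network review. This is a deliberately simplified sketch; the identifier prefixes and the `min_accounts` threshold are hypothetical, and production entity resolution adds fuzzy matching, link weighting, and time windows.

```python
from collections import defaultdict

class UnionFind:
    """Disjoint-set structure; nodes are created on first use."""
    def __init__(self) -> None:
        self.parent = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        self.parent[self.find(a)] = self.find(b)

def suspicious_clusters(links: list, min_accounts: int = 3) -> list:
    """links are (account_id, identifier) pairs, e.g. ("acct:1", "device:A").

    Prefixes keep accounts and identifiers in separate namespaces.
    """
    uf = UnionFind()
    for account, identifier in links:
        uf.union(account, identifier)
    clusters = defaultdict(set)
    for account, _ in links:
        clusters[uf.find(account)].add(account)
    return [accts for accts in clusters.values() if len(accts) >= min_accounts]

links = [
    ("acct:1", "device:A"), ("acct:2", "device:A"),  # two accounts on one device
    ("acct:2", "phone:9"), ("acct:3", "phone:9"),    # acct:2 bridges to acct:3 via a phone
    ("acct:4", "device:B"),                          # unrelated account
]
print(suspicious_clusters(links))
```

Note that acct:1 and acct:3 share nothing directly; they only connect through acct:2. That transitive link is exactly what per-account rules miss and network detection catches.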

Three forces converge here:

- Scams industrialize, and customer harm becomes the primary battlefield. Fraud is increasingly “social engineering + payments” rather than just stolen credentials. Criminals run call centers, deepfake voice, fake investment communities, and AI-generated persuasion at scale. The hardest challenge is that many scams are authorized transactions (customers initiate them), so the bank must shift from passive processing to active intervention (risk holds, step-up verification, trusted comms, friction by risk tier).

- Synthetic identity becomes the default attack path. AI makes fake personas cheap: realistic documents, synthetic selfies, spoofed liveness attempts, and layered identities that look legitimate across fragmented data sources. If you can’t resolve identity across accounts, devices, merchants, and counterparties, you can’t see the network, and you can’t stop it.

- Regulators are enforcing effectiveness, not intent (outcome-based AML and surveillance). Major enforcement actions increasingly emphasize program effectiveness: governance, monitoring, escalation, and demonstrable control performance, not just “a policy exists.” U.S. enforcement and supervisory bodies continue to set expectations through public actions and guidance across AML/CFT, sanctions, and market surveillance, especially where monitoring failed at scale. Reference points: U.S. DOJ (enforcement posture and public casework): https://www.justice.gov/; FinCEN (AML/CFT priorities, advisories, enforcement announcements): https://www.fincen.gov/; SEC enforcement (market surveillance / compliance failures): https://www.sec.gov/enforce; CFTC enforcement (market integrity and surveillance failures): https://www.cftc.gov/LawRegulation/Enforcement/index.htm

Business impact

- Cost & efficiency: Fraud becomes a controllable COGS line—if detection is network-native. Graph + entity resolution reduces duplicate investigations and improves hit rates. Early interdiction (before funds exit) reduces loss severity and recovery cost.

- Speed & adaptability: Controls must operate in-journey, not after the fact. Interventions need to occur at the point of initiation (payment, change-of-details, new payee, high-risk login), not in downstream reconciliation. Playbooks must update weekly, not quarterly—adversaries iterate continuously.

- Risk & resilience: Customer harm, model risk, and regulatory exposure converge. Over-blocking creates conduct risk; under-blocking creates fraud loss and supervisory risk. Evidence quality matters: you must prove why you intervened (or didn’t), especially for authorized transactions and disputes.

- Revenue & differentiation: Trust becomes an acquisition and retention lever. Safer payments and safer onboarding reduce churn and increase platform/partner confidence. Institutions that can prove fraud prevention outcomes win embedded finance and real-time rail participation.

CXO CTAs

- Stand up a graph-led financial crime control tower. Unify entity resolution across customers, devices, accounts, merchants, counterparties, and mule networks. Move from “alert lists” to network detection and disruption.

- Shift from detection to intervention (scam-safe customer experience). Implement risk-tiered friction: step-up authentication, confirmation of payee, cooling-off holds for high-risk cohorts, trusted communications, and guided warnings designed to stop social engineering, not just satisfy UX.

- Treat synthetic identity as a lifecycle problem, not an onboarding check. Continuously re-verify identity through behavior, device integrity, account link analysis, and network signals. Make non-human and agent activity visible (ties directly to Trend #27).

- Operationalize effectiveness metrics as board-level controls. Track authorized scam loss rate, mule account time-to-detect, recovery rate, false-positive harm, intervention success rate, and time-to-contain emerging scam types.

- Engineer evidence and auditability into every intervention. For any hold/decline/release decision, capture the “why” in machine-readable form (signals, thresholds, policy applied, outcome), so you can defend actions to customers and supervisors.
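Risk-tiered friction and "capture the why in machine-readable form" fit together: the same function that picks the intervention can emit the evidence record. A minimal sketch, assuming a hypothetical upstream risk score in [0, 1], invented tier thresholds, and a made-up versioned policy identifier; none of these come from a real scheme or regulator.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical tiers: (minimum score, action), checked from highest to lowest.
TIERS = [
    (0.90, "hold_and_call_back"),  # cooling-off hold plus trusted outbound contact
    (0.70, "step_up_and_warn"),    # step-up authentication plus scam-specific warning
    (0.40, "confirm_payee"),       # confirmation-of-payee style check
    (0.00, "allow"),
]
POLICY_ID = "scam-friction-v3"  # hypothetical versioned policy identifier

@dataclass
class DecisionRecord:
    payment_id: str
    score: float
    signals: dict          # the inputs that drove the score
    threshold_hit: float   # the tier boundary that was crossed
    action: str
    policy_id: str
    outcome: str = "pending"  # updated later, e.g. "customer_cancelled", "released"

def decide(payment_id: str, score: float, signals: dict) -> DecisionRecord:
    for threshold, action in TIERS:
        if score >= threshold:
            return DecisionRecord(payment_id, score, signals, threshold, action, POLICY_ID)
    raise ValueError("score must be >= 0")

record = decide("pay-001", 0.82, {"new_payee": True, "remote_access_tool": True})
print(json.dumps(asdict(record), sort_keys=True))
```

Versioning the policy identifier means a supervisor can later ask not only "why was this payment held?" but "which version of the rules was live when it was held?"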

Trend-specific CTA

If scams are now the dominant fraud vector, can you intervene in real time, without breaking customer trust, and prove to regulators that your program is effective, not just compliant?

META FORCE V — RESILIENCE UNDER SIEGE (2026–2028)

Trend #25: Quantum Encryption & Digital Provenance

Trust is shifting from “we verified it” to “we can prove it”—cryptographically. In 2026–2028, BFSI faces a dual forcing function: post-quantum cryptography (PQC) migration accelerates because “harvest now, decrypt later” turns today’s captured traffic into tomorrow’s breach, while generative AI destroys evidentiary confidence in documents, images, voice, and video. The winners won’t be the institutions that publish roadmaps—they’ll be the ones that industrialize two capabilities: crypto-agility at scale and provenance as a first-class evidence layer across onboarding, disputes, claims, and regulated communications.

The shift is from static encryption programs to crypto-agile platforms, and from “KYC checks” to verifiable evidence chains.

Historically, encryption upgrades were periodic infrastructure projects, and “authenticity” was handled through process (manual review, human judgment, watermarking, call-backs). That model fails in a world where:

- Quantum risk threatens long-lived confidentiality (payments messaging, client data, trading/treasury records, legal archives).

- Synthetic media can manufacture believable evidence (voice authorizations, identity artifacts, claim photos, dispute screenshots, “executive” instructions).

This creates a new control requirement: prove authenticity and integrity end-to-end, not just at onboarding. PQC and provenance are converging into one executive mandate: trust becomes cryptographic, continuous, and auditable.

Three forces converge here:

- “Harvest now, decrypt later” turns PQC into a near-term risk, not a science project. Adversaries can capture encrypted traffic and store it until decryption becomes feasible. That matters in BFSI because many data types have long confidentiality lifetimes (customer PII, corporate treasury flows, trade finance docs, legal records, surveillance archives). NIST’s PQC program, which finalized its first standards (FIPS 203, 204, and 205) in August 2024, is the baseline signal that PQC is moving into implementable standards. https://csrc.nist.gov/projects/post-quantum-cryptography https://www.nist.gov/pqcrypto

- Governments are pushing PQC planning into procurement reality. Regulators and national cyber agencies are increasingly publishing guidance and migration planning expectations, shifting PQC from “R&D” to “program execution.” CISA’s PQC guidance and resources are designed to help organizations plan migration and manage crypto-agility. https://www.cisa.gov/quantum https://www.cisa.gov/resources-tools/resources/post-quantum-cryptography-migration

- Digital provenance becomes mandatory when evidence itself is attackable. GenAI makes it cheap to fabricate “proof”: IDs, bank letters, invoices, beneficiary instructions, claim photos, dispute screenshots, “executive” instructions, and even “recorded” conversations. BFSI therefore needs provenance as infrastructure: signed content, tamper-evident metadata, and chain-of-custody that can be verified during disputes, claims, investigations, and audits. Industry initiatives like C2PA are building open standards for content authenticity and provenance. https://c2pa.org/ https://c2pa.org/specifications/
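A common way to turn "harvest now, decrypt later" into an ordered backlog is Mosca's inequality: if the years data must stay confidential (x) plus the years the migration will take (y) exceed the years until a cryptographically relevant quantum computer exists (z), that data is already exposed to harvesting today. The sketch applies it to an inventory; the system names and every number, especially `years_to_crqc`, are hypothetical planning assumptions, not forecasts.

```python
from dataclasses import dataclass

@dataclass
class CryptoAsset:
    name: str
    shelf_life_years: float  # x: how long the protected data must remain confidential
    migration_years: float   # y: how long replacing the cryptography will take

def mosca_exposure(asset: CryptoAsset, years_to_crqc: float) -> float:
    """Return x + y - z; a positive value means harvested data outlives its protection."""
    return asset.shelf_life_years + asset.migration_years - years_to_crqc

def prioritise(assets: list, years_to_crqc: float) -> list:
    scored = [(a.name, mosca_exposure(a, years_to_crqc)) for a in assets]
    # Only positive exposure is already at risk; most exposed first.
    return sorted((s for s in scored if s[1] > 0), key=lambda s: s[1], reverse=True)

inventory = [
    CryptoAsset("payments_messaging", shelf_life_years=2, migration_years=3),
    CryptoAsset("trade_finance_archive", shelf_life_years=10, migration_years=4),
    CryptoAsset("legal_records_backup", shelf_life_years=25, migration_years=5),
]
print(prioritise(inventory, years_to_crqc=10))  # z is a planning assumption
```

With these assumed inputs the long-lived archives, not the high-volume payment channel, top the list, which is the point of prioritizing by confidentiality lifetime rather than by traffic volume.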

Business impact

- Cost & efficiency: Crypto and evidence failures become expensive operational drag. PQC migration done late becomes a “double spend” (rebuild + re-audit + re-certify). Provenance reduces manual review and dispute handling costs by making authenticity machine-verifiable.

- Speed & adaptability: Crypto-agility becomes a platform capability. Institutions that can rotate algorithms, keys, and certificate policies quickly can respond faster to new threats and regulatory expectations. Provenance embedded into workflows accelerates straight-through processing (claims triage, onboarding verification, dispute resolution).

- Risk & resilience: This is about existential failure modes: confidentiality collapse and evidence collapse. A future decryption event compromises archives and creates retroactive breach exposure. Fake evidence at scale drives customer harm, fraud loss, and regulatory action unless authenticity can be proven.

- Revenue & differentiation: Provable trust becomes a commercial feature. Enterprise clients and platforms will prefer counterparties that can prove data integrity, document authenticity, and transaction authorization—especially for high-value payments, trade, and claims.

CXO CTAs

- Stand up a PQC migration program tied to data-lifetime risk. Inventory cryptographic usage (TLS, VPNs, HSMs, key management, signing, internal service mesh, vendor connections). Prioritize by confidentiality lifetime and “replaceability” of historical exposure.

- Build crypto-agility as a platform, not a one-time project. Standardize cryptography behind services and libraries so algorithms can be swapped without rewriting applications. Treat crypto policy like controls-as-code: versioned, tested, auditable.

- Upgrade identity and authorization flows for synthetic media reality. Assume voice and video can be faked. Require step-up verification for high-risk actions (payments, beneficiary changes, wire instructions, claim approvals), and engineer “verified communications” patterns (signed messages, strong device binding).

- Make provenance an enterprise evidence layer (not a niche pilot). Embed content authenticity and chain-of-custody into: onboarding documents, KYC artifacts, claims images/video, dispute evidence, client instructions, and agent-generated communications. Use standards-based approaches (e.g., C2PA) where feasible. https://c2pa.org/

- Define an “evidence SLA” for regulated operations. For any disputed decision or instruction, be able to reconstruct: who initiated, what was presented, what checks ran, what was signed, what changed, and why it was accepted/rejected, within audit timelines.
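The evidence SLA and crypto-agility reinforce each other. A tamper-evident, hash-chained log lets you reconstruct who did what and detect later edits, and recording the algorithm name in every entry lets policy swap the hash function without invalidating older entries. This is a minimal sketch using Python's standard `hashlib`; the event fields and the `ALLOWED` policy set are hypothetical. A hash chain only detects tampering: proving who authored an entry also needs digital signatures, and a post-quantum migration would replace that signature scheme, not just the hash.

```python
import hashlib
import json

# Hypothetical crypto policy: algorithms permitted for NEW entries. Old entries stay
# verifiable after a policy change because each one names the algorithm it used.
ALLOWED = {"sha256", "sha3_256"}
GENESIS = "0" * 64

def _digest(algorithm: str, data: bytes) -> str:
    return hashlib.new(algorithm, data).hexdigest()

def append(chain: list, event: dict, algorithm: str = "sha256") -> None:
    if algorithm not in ALLOWED:
        raise ValueError(f"algorithm {algorithm!r} not permitted by policy")
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    body = {"event": event, "prev": prev_hash, "alg": algorithm}
    payload = json.dumps(body, sort_keys=True).encode()
    chain.append({**body, "hash": _digest(algorithm, payload)})

def verify(chain: list) -> bool:
    """Recompute every link. Editing, inserting, or removing an entry breaks the chain,
    except dropping trailing entries, which needs an externally stored head hash to detect."""
    prev_hash = GENESIS
    for entry in chain:
        body = {"event": entry["event"], "prev": entry["prev"], "alg": entry["alg"]}
        payload = json.dumps(body, sort_keys=True).encode()
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["alg"], payload):
            return False
        prev_hash = entry["hash"]
    return True

chain = []
append(chain, {"actor": "agent-7", "action": "approve_claim", "claim": "C-1"})
append(chain, {"actor": "ops-2", "action": "release_payment", "claim": "C-1"}, algorithm="sha3_256")
print(verify(chain))
```

Mixing `sha256` and `sha3_256` in one chain is deliberate: it shows an algorithm rotation happening mid-stream while the full history remains verifiable.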

Trend-specific CTA

If a customer, regulator, or court challenges the authenticity of an instruction, a document, or a piece of “evidence,” can you prove what’s real—cryptographically—within hours, not weeks?

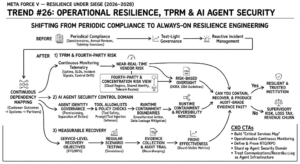

Trend #26: Operational Resilience, TPRM & AI Agent Security

Resilience is shifting from “plans and attestations” to continuous, testable runtime control—across third parties, fourth parties, and AI agents. In 2026–2028, BFSI’s biggest operational risk isn’t a single outage—it’s compound dependency failure: cloud concentration, SaaS supply chains, fourth-party fragility, and a growing population of AI agents that can execute actions faster than human oversight. Regulators are also explicit: outsourcing does not outsource accountability. Winners will run resilience as an engineered system: dependency mapping + continuous monitoring + measurable recovery + auditable evidence—extended to agent permissioning, tool safety, and recordkeeping.

The shift is from periodic resilience compliance to always-on resilience engineering—covering vendors, fourth parties, and autonomous workflows. Historically, operational resilience and third-party risk management (TPRM) were governance-heavy and test-light: questionnaires, annual reviews, tabletop exercises, and vendor scorecards. That model breaks when critical services are delivered through layered ecosystems (cloud → SaaS → sub-processors → data providers → telecom), when incident blast radius crosses legal entities and geographies instantly, and when agentic systems introduce a new class of runtime risk (prompt/tool injection, runaway automation, unauthorized actions, opaque decision trails). This forces a new operating reality: resilience as code + monitoring as evidence + containment as design.

Three forces converge here:

- Regulators are raising the resilience floor—measurable outcomes, not policy binders. In Europe, DORA makes operational resilience and ICT third-party risk a hard requirement: governance, testing, incident management, and oversight of critical ICT providers. The key is the posture shift: “show me your capability” becomes “prove your effectiveness.” https://eur-lex.europa.eu/eli/reg/2022/2554/oj https://finance.ec.europa.eu/regulation-and-supervision/financial-services-legislation/digital-operational-resilience-act-dora_en

- Fourth-party and concentration risk become systemic constraints. The real failure modes sit below your direct vendor list: common cloud regions, shared identity providers, shared observability stacks, shared KYC/biometric providers, shared telecom routes. Traditional TPRM can’t see these layers or react fast enough. Continuous dependency mapping and near-real-time vendor telemetry move from “nice” to necessary. (EBA guidance reinforces risk-based oversight expectations for outsourcing/ICT risk management.) https://www.eba.europa.eu/regulation-and-policy/internal-governance/guidelines-on-outsourcing-arrangements https://www.eba.europa.eu/regulation-and-policy/internal-governance

- Agentic AI turns “access and control gaps” into instantaneous loss events. Once AI agents can execute workflows across systems, weaknesses in permissioning, separation of duties, change control, and recordkeeping become amplified. The new security surface is not just models—it’s agents + tools + identities + data flows. Without runtime containment, an injected tool call or mis-scoped permission can trigger unauthorized transactions, data leakage, or regulated communication violations at scale.

Business impact

- Cost & efficiency: Resilience becomes a controllable operating cost—or a recurring loss tax. Continuous monitoring reduces surprise incidents and shortens triage cycles. Standardized resilience patterns reduce bespoke remediation across programs and vendors.

- Speed & adaptability: Change velocity depends on reusable controls. Institutions that encode resilience controls (failover patterns, runbooks, access gates, evidencing) ship faster with fewer audit surprises. Vendor onboarding becomes scalable when obligations and monitoring are standardized.

- Risk & resilience: The blast radius is now cross-enterprise and machine-speed. Third/fourth-party incidents become your incidents. Agents raise the probability of high-impact errors unless bounded by permission manifests, tool constraints, and reversibility horizons.

- Revenue & differentiation: Trust becomes partner currency. Platforms and enterprise clients will prefer institutions that can prove resilience, containment, and evidencing—especially for embedded finance, real-time payments, and mission-critical treasury services.

CXO CTAs

- Build a “critical services map” that includes fourth parties and concentration hotspots. Start from customer outcomes (payments, onboarding, claims, trading, servicing) and map dependencies end-to-end: cloud regions, SaaS, sub-processors, identity providers, data feeds, telecom. Treat concentration like a portfolio risk with limits.

- Operationalize continuous resilience monitoring (not annual questionnaires). Shift TPRM from document collection to telemetry: uptime/latency SLOs, incident signals, control drift, patch posture, data access changes, and sub-processor changes—linked to risk tiering and escalation.

- Define measurable recovery time and recovery point objectives (RTO/RPO) and prove them through testing, not declarations. Establish service-level recovery objectives, run regular scenario tests, and retain evidence. Make “time-to-detect” and “time-to-recover” board-visible metrics.

- Stand up AI agent security as a first-class control domain. Implement agent identity governance, least privilege by default, tool allowlists, runtime policy checks, prompt/tool injection defenses, and traceability for every consequential action (ties directly to Trend #7 and Trend #27).

- Treat communications governance and recordkeeping as agent-era infrastructure. As agentic systems generate business communications, many of those interactions become regulated records. Align controls to regulatory expectations for retention and supervision. (The SEC and CFTC enforcement hubs are a baseline reference for recordkeeping and communications expectations.) https://www.sec.gov/enforce https://www.cftc.gov/LawRegulation/Enforcement/index.htm
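A critical services map is, at its core, a dependency graph. The sketch below uses made-up service and provider names; it walks each critical service's dependencies transitively so that fourth parties surface, then flags any provider shared by at least `threshold` critical services as a concentration hotspot. The graph, names, and threshold are illustrative assumptions; in practice the edges would come from contracts, sub-processor disclosures, and vendor telemetry.

```python
from collections import defaultdict
from typing import Dict, List, Set

# Hypothetical dependency edges: service or vendor -> what it depends on.
DEPENDS_ON: Dict[str, List[str]] = {
    "payments": ["core-ledger", "fraud-saas"],
    "onboarding": ["kyc-vendor", "identity-provider"],
    "claims": ["claims-saas", "identity-provider"],
    "fraud-saas": ["cloud-region-eu-1"],
    "kyc-vendor": ["biometric-provider", "cloud-region-eu-1"],
    "claims-saas": ["cloud-region-eu-1"],
    "core-ledger": [],
    "identity-provider": ["cloud-region-us-2"],
}
CRITICAL_SERVICES = ["payments", "onboarding", "claims"]


def transitive_dependencies(service: str) -> Set[str]:
    """Depth-first walk returning every direct and indirect dependency."""
    seen: Set[str] = set()
    stack = list(DEPENDS_ON.get(service, []))
    while stack:
        dep = stack.pop()
        if dep not in seen:
            seen.add(dep)
            stack.extend(DEPENDS_ON.get(dep, []))
    return seen


def concentration_hotspots(threshold: int = 2) -> Dict[str, List[str]]:
    """Map each provider to the critical services relying on it, keeping shared ones."""
    users: Dict[str, Set[str]] = defaultdict(set)
    for service in CRITICAL_SERVICES:
        for dep in transitive_dependencies(service):
            users[dep].add(service)
    return {dep: sorted(svcs) for dep, svcs in users.items() if len(svcs) >= threshold}
```

In this toy graph, `cloud-region-eu-1` surfaces as a hotspot for all three critical services even though none of them contracts with it directly: it sits underneath three different vendors.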

Trend-specific CTA

If a critical workflow fails at 2 a.m. because a fourth-party dependency breaks—or an agent executes the wrong action—can you contain it fast, recover to a measurable standard, and produce audit-grade evidence without improvisation?

Trend #27: Identity Fabric & Machine Identity Governance

Identity is becoming the new perimeter—and most identities are no longer human. In 2026–2028, BFSI security and compliance stop being primarily about networks and endpoints and become about who/what is allowed to act—humans, services, APIs, bots, and AI agents. The definitive shift is from “logins and KYC checks” to an identity fabric that continuously verifies, governs, and evidences identity across channels and workflows. Winners won’t just modernize authentication; they will govern non-human identities as regulated control infrastructure—because that’s where the next systemic losses will originate.

The shift is from authentication events to continuous identity control—across human and non-human actors. Historically, identity in banking and insurance was handled in silos: IAM for employees, KYC for customers, API keys for systems, and separate fraud controls for channels. That approach collapses when non-human identities outnumber humans (service accounts, APIs, workloads, robotic process automation, partner integrations, agents), when real-time rails and embedded distribution compress decision windows, and when AI agents execute actions that look legitimate unless identity and authorization are enforced at runtime. This drives a new mandate: identity must be continuous, risk-scored, and provable, not a one-time gate. Identity becomes a control plane that connects security, fraud, AML, customer experience, and regulatory defensibility.

Three forces converge here:

- Passkeys and phishing-resistant auth move from “nice” to necessary. Credential theft remains a primary breach path, and deepfake-enabled social engineering makes “what you see/hear” unreliable. BFSI therefore shifts to phishing-resistant authentication (passkeys / FIDO-based), plus continuous behavioral verification for risky actions. FIDO2 and passkey standards from the FIDO Alliance are now mature enough to be treated as mainstream enterprise and consumer patterns. https://fidoalliance.org/passkeys/ https://fidoalliance.org/fido2/

- Non-human identity governance becomes the dominant security gap. Service identities, API keys, workload identities, and agent identities often have long-lived secrets, excessive privilege, weak rotation, unclear ownership, and minimal monitoring. In an agentic enterprise, this becomes existential: an agent with broad tool access is effectively a privileged employee—without the same controls. NIST’s Zero Trust guidance reinforces the direction: identity and continuous verification sit at the center of modern security architecture. https://csrc.nist.gov/publications/detail/sp/800-207/final https://www.nist.gov/itl/zero-trust-architecture

- Digital identity becomes an enablement layer for programmable money and embedded finance—yet policy diverges. Instant payments, tokenized assets, stablecoin settlement, and embedded finance all require stronger identity assurance, consent, and provenance. Digital public infrastructure (DPI) models demonstrate what “national-scale identity rails” can enable (e.g., Aadhaar’s scale in India), even as other jurisdictions diverge in their policy posture, forcing institutions to build more of the identity stack themselves. Aadhaar’s official ecosystem shows what large-scale digital identity infrastructure looks like in practice. https://uidai.gov.in/ https://uidai.gov.in/about-uidai.html
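The non-human identity weaknesses listed above (long-lived secrets, excessive privilege, weak rotation, unclear ownership, minimal monitoring) are checkable over an identity inventory. A minimal sketch, assuming a hypothetical `MachineIdentity` inventory record and illustrative thresholds (90-day rotation, 30-day inactivity, a small set of privileged scope names); real thresholds and scopes would come from the institution's own policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional

MAX_SECRET_AGE = timedelta(days=90)  # illustrative rotation window
MAX_IDLE = timedelta(days=30)        # illustrative inactivity window
PRIVILEGED_SCOPES = {"payments:execute", "admin:*", "customers:write"}  # hypothetical


@dataclass
class MachineIdentity:
    name: str
    kind: str                   # e.g. "service_account", "api_key", "agent"
    owner: Optional[str]        # accountable team; None means orphaned
    scopes: List[str]
    secret_rotated_at: datetime
    last_used_at: Optional[datetime]


def findings(identity: MachineIdentity, now: datetime) -> List[str]:
    """Return governance findings for one non-human identity."""
    issues = []
    if not identity.owner:
        issues.append("no accountable owner")
    if now - identity.secret_rotated_at > MAX_SECRET_AGE:
        issues.append("secret past rotation window")
    privileged = PRIVILEGED_SCOPES.intersection(identity.scopes)
    if privileged and identity.kind == "agent":
        # An agent with privileged tool access is treated like a privileged employee.
        issues.append("agent holds privileged scopes: " + ", ".join(sorted(privileged)))
    if identity.last_used_at is None or now - identity.last_used_at > MAX_IDLE:
        issues.append("unused or unmonitored; candidate for revocation")
    return issues
```

Run on a schedule across the whole inventory, the findings become the "non-human privilege drift" signal that can be tracked over time.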

Business impact

- Cost & efficiency: Identity becomes a controllable cost driver for fraud, operations, and compliance. Stronger authentication and continuous verification reduce account takeover (ATO), scams, and manual exception handling. A unified identity fabric reduces duplicate KYC, fragmented entitlements, and channel inconsistencies.

- Speed & adaptability: Identity control becomes a reusable platform capability. New products and partner integrations can move faster when identity, consent, and authorization are standardized as services. Agentic workflows can scale safely when permissioning and evidence are baked in.

- Risk & resilience: The blast radius shifts to identity compromise—especially non-human compromise. Leaked keys and over-privileged service accounts become “silent systemic risk.” Without machine identity governance, you cannot credibly secure agents, APIs, and embedded ecosystems.

- Revenue & differentiation: Trust becomes a growth lever in real-time and embedded worlds. Safer identity enables higher limits, smoother journeys, lower friction step-up, and better conversion—without absorbing unacceptable fraud loss. Platforms and enterprise clients prefer institutions that can prove identity assurance and authorization controls.

CXO CTAs

- Adopt an “Identity Fabric” architecture that unifies customer, workforce, and machine identity. Treat identity as shared infrastructure: authentication, authorization, device binding, consent, behavioral signals, and evidencing—consistent across channels and products.

- Industrialize phishing-resistant authentication (passkeys) and step-up policy. Move high-risk actions (new payee, beneficiary changes, wire approvals, claim payouts, policy loans) to phishing-resistant flows and risk-tiered step-up—designed for deepfake reality. https://fidoalliance.org/passkeys/

- Stand up Non-Human Identity Governance as a first-class control domain. Inventory all service/workload/agent identities; enforce ownership, least privilege, rotation, vaulting, attestation, and continuous monitoring. Treat non-human privileges like regulated entitlements.

- Make authorization runtime-governed for agents and APIs. Define permission manifests, tool allowlists, separation-of-duties constraints, and reversibility horizons for any identity (human or machine) that can execute consequential actions (ties directly to Trend #7 and Trend #26).

- Tie identity to fraud + AML outcomes with measurable effectiveness. Track identity-driven KPIs: ATO rate, scam loss rate, false-positive friction, step-up success rate, mule detection lead time, and non-human privilege drift—then review them like capital metrics.
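Runtime-governed authorization with permission manifests can be sketched as a check that runs before every consequential action and always emits an auditable decision. The manifest fields (`allowed_tools`, `max_amount`, `needs_independent_approver`), the tool names, and the separation-of-duties rule are hypothetical illustrations, not a standard format; the point is that each decision records who acted, which manifest version was in force, and why the action was allowed or denied.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class PermissionManifest:
    """Hypothetical manifest: what one identity (human or machine) may do."""
    identity: str
    version: str
    allowed_tools: FrozenSet[str]
    max_amount: float
    needs_independent_approver: FrozenSet[str] = frozenset()  # SoD-controlled tools


@dataclass(frozen=True)
class Decision:
    """Audit record: who acted, which manifest version applied, and why."""
    identity: str
    tool: str
    manifest_version: str
    allowed: bool
    reason: str


def authorize(manifest: PermissionManifest, tool: str, amount: float = 0.0,
              approver: Optional[str] = None) -> Decision:
    """Evaluate one consequential action at runtime; unlisted tools are denied."""
    def decide(allowed: bool, reason: str) -> Decision:
        return Decision(manifest.identity, tool, manifest.version, allowed, reason)

    if tool not in manifest.allowed_tools:
        return decide(False, "tool not in allowlist")
    if amount > manifest.max_amount:
        return decide(False, f"amount {amount} exceeds limit {manifest.max_amount}")
    if tool in manifest.needs_independent_approver and approver in (None, manifest.identity):
        return decide(False, "separation of duties: independent approver required")
    return decide(True, "within manifest")
```

Persisting every `Decision`, including denials, is what makes it possible to answer afterwards which permissions were in force and why an action went through.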

Trend-specific CTA

If most “users” in your environment are APIs, services, and agents, can you answer—in audit-grade detail—who (or what) executed a transaction, what permissions were in force, and why the action was allowed?

META FORCE VI — INVISIBLE INFRASTRUCTURE (2026–2028)

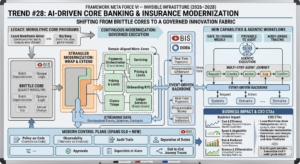

Trend #28: AI-Driven Core Banking & Insurance Modernization

Core modernization is shifting from “replacement” to “continuous changeability”—because agents and real-time rails can’t live on brittle cores. In 2026–2028, the strategic objective is no longer “a modern core.” It’s a core designed for governed execution: event-driven, domain-aligned, observable, and safe to change weekly—not yearly. The winners won’t be the institutions that finish the biggest core program; they’ll be the ones that build an innovation fabric around the core and progressively decompose legacy into composable domains that can support agentic workflows, programmable money, and audit-grade controls.

The shift is from monolithic transaction engines to domain-aligned micro-cores with event-driven control. For years, core programs were framed as one of two extremes: leave the mainframe alone, or replace everything in a multi-year big bang. In 2026–2028, both approaches underperform: “Leave it” fails because real-time products, multi-rail routing, and agentic operations demand low-latency data, consistent semantics, and change velocity. “Big bang” fails because it concentrates risk, burns years, and rarely delivers full scope before priorities change. The winning pattern is strangler modernization: wrap and extend the core, carve out domains (pricing, limits, onboarding, servicing, claims, payments, ledger services), and migrate functionality incrementally—while running a modern control plane (policy-as-code, observability, audit trails) across old and new. This is why “AI-driven modernization” matters: not because AI writes code faster, but because it accelerates decomposition, testing, migration, and documentation—making continuous modernization economically feasible.
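The strangler pattern usually starts with a routing facade in front of the core. A minimal sketch, with hypothetical domain names and stand-in handler functions: each carved-out domain is switched to its new service by a single routing entry, anything not yet migrated falls through to the legacy core, and every routing decision is recorded so the migration stays observable and reversible.

```python
from typing import Callable, Dict, List, Tuple

Handler = Callable[[dict], dict]


def legacy_core(request: dict) -> dict:
    # Stand-in for a call into the existing core (e.g. through an adapter).
    return {"handled_by": "legacy", **request}


def pricing_service(request: dict) -> dict:
    # Stand-in for a carved-out domain service.
    return {"handled_by": "pricing-v2", **request}


class StranglerFacade:
    """Routes each domain to a modern handler if migrated, else to the legacy core."""

    def __init__(self, legacy: Handler):
        self.legacy = legacy
        self.routes: Dict[str, Handler] = {}
        self.audit: List[Tuple[str, str]] = []  # (domain, handler name) per request

    def migrate(self, domain: str, handler: Handler) -> None:
        self.routes[domain] = handler

    def rollback(self, domain: str) -> None:
        # Reverting a domain is one routing change, not a redeployment of the core.
        self.routes.pop(domain, None)

    def handle(self, domain: str, request: dict) -> dict:
        handler = self.routes.get(domain, self.legacy)
        self.audit.append((domain, handler.__name__))
        return handler(request)
```

Real facades typically sit at an API gateway or integration layer and add dual-running or reconciliation while a domain is in transition; the sketch only shows the routing and rollback idea.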

Three forces converge here:

- Agentic execution needs cores that are safe to change—and provable to audit. Agents orchestrate multi-step work across systems. If the core is brittle, every change becomes a risk event. If the core is opaque, you can’t prove what happened. Modernization therefore becomes inseparable from observability, traceability, and runtime governance (ties directly to Trend #7, controls-as-code).

- Real-time rails and programmable settlement expose batch-era architecture limits. Instant payments, 24×7 treasury, tokenized settlement, and continuous underwriting don’t tolerate end-of-day batch dependencies. Event-driven cores and streaming data patterns become prerequisites for real-time products and resilience. (BIS work on fast payments highlights the operational implications of always-on payment infrastructures.) https://www.bis.org/cpmi/publ/d168.pdf https://www.bis.org/cpmi/publ/d191.pdf

- The industry is standardizing resilience and third-party accountability—forcing better core change discipline. Operational resilience regimes (e.g., DORA in the EU) raise expectations around ICT risk, change management, incident response, and third-party dependencies—making “fragile modernization” untenable. Core modernization must be engineered to withstand regulatory scrutiny. https://finance.ec.europa.eu/regulation-and-supervision/financial-services-legislation/digital-operational-resilience-act-dora_en https://eur-lex.europa.eu/eli/reg/2022/2554/oj

Business impact

- Cost & efficiency: Modernization becomes cheaper when it’s continuous and reusable. Strangler patterns reduce “rewrite waste” and avoid re-platforming everything at once. Shared platform capabilities (identity, consent, logging, policy, telemetry) reduce duplication across domains.

- Speed & adaptability: Change velocity becomes the differentiator. New products ship as configuration + services + events, not core rewrites. Agent workflows and real-time journeys can be introduced incrementally—without waiting for “core completion.”

- Risk & resilience: The goal is fewer “core-caused incidents” and faster recovery. Domain boundaries reduce blast radius; event-driven designs improve observability and incident triage. Better change control and evidence reduce audit and regulatory exposure.

- Revenue & differentiation: Modern cores unlock new profit pools faster. Embedded finance, programmable payments, real-time underwriting/claims, and treasury platforms become economically viable when the core can support them safely and cheaply.

CXO CTAs

- Adopt a “Strangler Modernization Blueprint” with domain sequencing. Pick the first 3–5 domains to carve out (onboarding/KYC, pricing & limits, servicing, payments orchestration, claims triage) based on customer impact, change frequency, and risk.

- Build an event-driven backbone and treat it as core infrastructure. Standardize events, schemas, and contracts so every domain publishes and consumes events with consistent semantics (ties directly to the Trend #30 semantic layer). Use events to power controls, audit traces, and real-time decisioning.

- Define a modern control plane that spans old + new. Implement policy-as-code, separation of duties, approvals, and audit-grade tracing across the hybrid estate so modernization doesn’t create “two control worlds.”

- Make observability non-negotiable: telemetry as evidence. Require end-to-end traces across customer journeys and operational workflows (latency, failures, retries, compensations). Treat “prove what happened” as a product requirement—not an ops afterthought.

- Budget modernization as a product (continuous funding), not a project (one-time capex). Set an annual modernization capacity with clear throughput metrics: domains decomposed, journeys migrated, change lead time reduced, incident rate reduced.
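For events to serve as both integration contracts and audit traces, each one typically carries a schema identity, a version, and a correlation ID that ties it to the originating journey. A minimal sketch, assuming a hypothetical in-process schema registry (a dict of required payload fields per event type and version) rather than any particular product; publishing rejects events that break their contract, and a journey can be reconstructed from its correlation ID.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List

# Hypothetical schema registry: (event type, version) -> required payload fields.
SCHEMA_REGISTRY = {
    ("payment.initiated", 1): {"payment_id", "amount", "currency"},
    ("payment.initiated", 2): {"payment_id", "amount", "currency", "rail"},
    ("payment.settled", 1): {"payment_id", "settled_amount"},
}


@dataclass(frozen=True)
class Event:
    event_type: str
    schema_version: int
    correlation_id: str  # ties every event in one customer journey together
    payload: dict
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    occurred_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def publish(event: Event, log: List[Event]) -> None:
    """Reject events that violate their registered schema; otherwise append."""
    required = SCHEMA_REGISTRY.get((event.event_type, event.schema_version))
    if required is None:
        raise ValueError(f"unregistered schema {event.event_type} v{event.schema_version}")
    missing = required - set(event.payload)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    log.append(event)


def trace(log: List[Event], correlation_id: str) -> List[str]:
    """Reconstruct one journey's event types in publication order."""
    return [e.event_type for e in log if e.correlation_id == correlation_id]
```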

Trend-specific CTA

If an AI agent executes a multi-step customer or risk action across ten systems, can your core estate both support the speed—and reconstruct the full decision and transaction trail in audit-grade detail?

Trend #29: GenAI for Application & Data Modernization

Modernization is shifting from “multi-year rewrites” to “continuous refactoring at scale”—because GenAI collapses the cost of change (if governance is engineered in). In 2026–2028, GenAI becomes the accelerant for rebuilding the invisible foundations: migrating legacy code, regenerating tests, documenting lineage, and modernizing data schemas fast enough to support agentic workflows and audit-grade control. But the real shift is not “AI writes code.” It’s that modernization becomes industrial throughput: repeatable pipelines, measurable quality, and compliance-by-design.

The shift is from modernization as a program to modernization as a production system. For decades, application and data modernization failed for predictable reasons: fragile legacy code, missing documentation, scarce skills, and testing bottlenecks. GenAI changes the constraint—but also increases risk if organizations ship unverified transformations. Winning institutions treat GenAI as an assembly line, not a chat tool: discover → translate → test → secure → deploy → observe → certify. The objective is to turn modernization into a continuous capability: every quarter you retire legacy, reduce complexity, improve semantics, and raise automation coverage—without creating new operational and regulatory debt.

Three forces converge here:

- Legacy risk is now change-velocity risk (not just tech debt). Agentic operations, real-time rails, and new regulatory expectations demand faster product and control changes. Legacy systems can’t safely change at that speed—so modernization becomes a prerequisite for competitiveness, not a “tech agenda.”

- Software supply-chain scrutiny forces “secure-by-construction” modernization. When GenAI touches code, the risk isn’t only bugs—it’s introducing insecure patterns, license exposure, and unverifiable changes. Institutions must anchor modernization pipelines to recognized secure development frameworks (e.g., NIST SSDF) and provenance/attestation concepts (e.g., SLSA). https://csrc.nist.gov/publications/detail/sp/800-218/final https://slsa.dev/

- Data modernization is becoming an audit requirement, not a data-team preference. You can’t govern agents, prove decisions, or run controls-as-code without trustworthy data foundations: lineage, quality, semantics, and reproducibility. Open lineage and provenance standards are maturing and increasingly used as implementation patterns. https://openlineage.io/ https://www.w3.org/TR/prov-overview/

Business impact

- Cost & efficiency: Modernization becomes cheaper when it’s pipeline-driven and test-heavy. Lower unit cost per migrated application/component as patterns become reusable. Reduced manual effort in documentation, test creation, and data mapping—when GenAI is constrained by standards and verified by automation.

- Speed & adaptability: Change throughput becomes the differentiator. Teams ship modernization increments weekly (not quarterly), enabling faster product iteration and control upgrades. Internal Developer Platforms (IDPs) and “golden paths” make modernization safer and repeatable across squads.

- Risk & resilience: The modernization pipeline becomes a regulated control surface. Without evidence (tests, security scans, attestations, lineage), GenAI modernization creates audit and incident risk. With evidence, modernization improves resilience by reducing fragile dependencies and improving observability.

- Revenue & differentiation: Modernization unlocks new business models faster. Faster onboarding, real-time decisioning, embedded distribution, and prevention services become feasible when systems can evolve safely and quickly.

CXO CTAs

- Stand up a “Modernization Factory” with measurable throughput. Define a repeatable pipeline: discovery/inventory → transformation → automated testing → security scanning → deployment → runtime monitoring → certification. Measure throughput like manufacturing: components migrated per month, defect escape rate, and lead time reduction.

- Make testing the governor of GenAI speed. Mandate automated test generation + regression coverage targets as gates for every transformation. GenAI should increase test coverage, not bypass it.

- Anchor GenAI SDLC to secure development standards (and require evidence). Adopt SSDF-aligned controls and require build attestations and dependency integrity signals for transformed code. https://csrc.nist.gov/publications/detail/sp/800-218/final https://slsa.dev/

- Treat data modernization as “decision governance,” not a data project. Prioritize lineage, reproducibility, and semantic consistency for the domains that feed regulated decisions (credit, fraud, underwriting, claims). Use open lineage/provenance patterns to make evidence portable across platforms. https://openlineage.io/ https://www.w3.org/TR/prov-overview/

- Create an AI-native engineering platform (IDP) with golden paths. Build standardized templates for services, pipelines, logging, policy checks, secrets, and approvals—so modernization can scale across teams without bespoke risk review every time. (Reference: baseline patterns from the platform engineering community.) https://platformengineering.org/ https://tag-app-delivery.cncf.io/
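The factory's gates can be written as data plus a small evaluator, so every GenAI-assisted transformation must carry the same evidence before promotion. A minimal sketch: the `TransformationEvidence` fields and the thresholds (80% line coverage, no coverage regression, zero critical security findings, a build attestation present) are illustrative assumptions, not values taken from SSDF or SLSA; the sketch only shows the shape of "testing governs speed".

```python
from dataclasses import dataclass
from typing import List, Tuple

MIN_COVERAGE = 0.80  # illustrative target; set by the institution's own policy


@dataclass
class TransformationEvidence:
    """Evidence attached to one GenAI-assisted transformation (hypothetical fields)."""
    component: str
    coverage_before: float     # line coverage of the legacy component, 0..1
    coverage_after: float      # line coverage after transformation, 0..1
    critical_findings: int     # count from security scanning
    attestation_present: bool  # e.g. signed build provenance for the artifact


def evaluate_gates(ev: TransformationEvidence) -> Tuple[bool, List[str]]:
    """Return (promote?, failed gates). Every gate must pass to promote."""
    failed = []
    if ev.coverage_after < MIN_COVERAGE:
        failed.append("coverage_below_target")
    if ev.coverage_after < ev.coverage_before:
        failed.append("coverage_regressed")
    if ev.critical_findings > 0:
        failed.append("critical_security_findings")
    if not ev.attestation_present:
        failed.append("missing_build_attestation")
    return (not failed, failed)
```

Because the failed gate names are returned rather than just a yes/no, the same output can feed both the promotion decision and the certification evidence for that component.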

Trend-specific CTA

If regulators asked you to prove how a legacy system was transformed—and why the new behavior is correct—could you produce test evidence, security evidence, and data lineage evidence on demand?

Trend #30: Domain Models, Semantic Layer & Data Foundations

AI governance is collapsing into one hard prerequisite: shared meaning. In 2026–2028, the biggest blocker to scaling agentic workflows and explainable decisioning isn’t model quality—it’s semantic inconsistency: “customer,” “exposure,” “beneficiary,” “default,” “loss,” “obligation,” and “consent” mean different things across systems, teams, and jurisdictions. The winners won’t be the institutions with the biggest lakehouse. They’ll be the ones that build an enterprise semantic layer—domain models, ontologies, lineage, and entity resolution—so every agent, model, control, and report operates on the same definitions with audit-grade traceability.

The shift is from data platforms that store information to semantic platforms that govern decisions. For years, BFSI treated “data foundations” as a storage and integration problem: lakes, warehouses, ingestion pipelines, and dashboards. That approach fails in an agentic world because agents don’t just read data—they act on it. If the semantics are inconsistent, agents and models will execute inconsistent actions, create contradictory customer outcomes, and generate indefensible explanations. The new foundation is a decision-grade semantic stack: domain model → semantic layer → entity resolution → lineage/provenance → policy bindings. This turns data from “available” into usable for regulated execution—and it creates the only scalable way to prove: what the system believed, why it decided, what it did, and how that maps to policy, consent, and obligation.
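One way to picture the "domain model → semantic layer" step is a canonical term with explicit per-system mappings, so that "customer" resolves to one definition no matter which system is asked. A minimal sketch with hypothetical system names, field names, and status codes; it assumes entity resolution has already assigned a shared `party_id`. An unmapped system raises an error instead of being silently guessed, which is the control-failure point made above.

```python
from typing import Callable, Dict

# Hypothetical canonical definition: a "customer" is a party (shared party_id)
# with at least one active contractual relationship.
Mapping = Callable[[dict], Dict[str, object]]

# Each source system registers how its local fields map to canonical attributes.
SEMANTIC_MAPPINGS: Dict[str, Mapping] = {
    "core_banking": lambda r: {
        "party_id": r["CUST_NO"],
        "is_customer": r["ACCT_STATUS"] == "A",  # local code "A" means active
    },
    "card_platform": lambda r: {
        "party_id": r["holderId"],
        "is_customer": r["state"] == "ACTIVE",
    },
}


def to_canonical(system: str, record: dict) -> Dict[str, object]:
    """Translate a source record into canonical terms, or fail loudly."""
    if system not in SEMANTIC_MAPPINGS:
        raise KeyError(f"no semantic mapping registered for {system}")
    return {"source": system, **SEMANTIC_MAPPINGS[system](record)}
```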

Three forces converge here:

- Agentic operations make semantic drift a control failure (not a data quality annoyance). When AI agents orchestrate onboarding, servicing, disputes, claims, collections, AML triage, or treasury routing, semantic mismatches produce real harm: wrong limits, wrong eligibility, wrong disclosures, wrong risk tier, wrong escalation path. That becomes operational risk, conduct risk, and regulatory exposure—fast.

- Explainability and auditability depend on stable concepts and reproducible lineage. You cannot deliver “audit-grade why” if the underlying terms and relationships shift by system or by team. Provenance and lineage standards are increasingly used to represent and trace how data and decisions were produced. https://www.w3.org/TR/prov-overview/ https://openlineage.io/

- The industry is standardizing “semantic interoperability” through open financial data models. Common domain models reduce integration friction and improve portability across vendors, clouds, and partners. The Financial Data Exchange (FDX) and the Banking Industry Architecture Network (BIAN) are two widely used reference points for structuring financial data sharing and banking capability models—useful as accelerants even when institutions customize. https://financialdataexchange.org/ https://bian.org/

Business impact

- Cost & efficiency: Less rework, fewer reconciliations, fewer broken controls. Cuts duplicated mapping, reconciliation, and “data translation” work across projects. Reduces model retraining and control redesign caused by shifting definitions.

- Speed & adaptability: Change becomes safer and faster when meaning is standardized. New products, partners, and jurisdictions integrate faster when semantics are reusable. Agents can be deployed across journeys without “semantic rewrites” in every domain.

- Risk & resilience: Semantics becomes a first-class risk control. Fewer contradictory decisions across channels, which reduces complaints, disputes, and UDAAP (unfair, deceptive, or abusive acts or practices) risk. Better audit defensibility because lineage and definitions are consistent and reconstructable.

- Revenue & differentiation: Decision quality scales—and so does trusted automation. Institutions can safely automate more journeys (lower cost-to-serve) and expand into new ecosystems (embedded finance, agentic commerce) because decisioning is consistent and explainable.

CXO CTAs

- Appoint a named owner for enterprise meaning (not just data storage). Create accountability for definitions, relationships, and canonical domain models across credit, fraud, payments, underwriting, claims, servicing, and consent.

- Build a semantic layer anchored in domain models (start with 3–5 regulated domains). Prioritize domains tied to consequential decisions (credit eligibility, fraud/scam interventions, underwriting/claims, AML triage, complaints/disputes). Use reference models (FDX/BIAN) to accelerate—then tailor. https://financialdataexchange.org/ https://bian.org/

- Make entity resolution and relationship intelligence a first-class platform capability. Unify identities across customers, accounts, devices, counterparties, merchants, policies, beneficiaries, and non-human actors—so both fraud controls and agents operate on the same “who is who.”

- Treat lineage/provenance as evidence infrastructure (not metadata). Require every high-impact decision pipeline to produce reproducible traces: inputs, transformations, model versions, policy applied, and outputs—stored as audit evidence. https://openlineage.io/ https://www.w3.org/TR/prov-overview/

- Bind policy and consent to semantics (controls-as-code needs shared meaning). Controls-as-code only works when policy terms map to stable entities and attributes (e.g., consent purpose, retention, eligibility constraints, jurisdiction routing). Make the semantic layer the “binding layer” between policy and execution (ties directly to Trend #7).
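The reproducible-trace requirement (inputs, model version, policy applied, output) can be sketched as a record that fingerprints those elements, so a later replay can confirm that the same inputs under the same model and policy versions yield the same output. The field names, version strings, and the toy `credit_rule_v1` threshold are hypothetical; OpenLineage and W3C PROV define richer, interoperable formats for the same idea, and this sketch does not follow either schema.

```python
import hashlib
import json
from typing import Callable, Dict


def fingerprint(obj) -> str:
    """Stable SHA-256 hash of a JSON-serializable object."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def traced_decision(inputs: Dict, model_version: str, policy_version: str,
                    decide: Callable[[Dict], str]) -> Dict:
    """Run a decision and return the output together with its evidence record."""
    output = decide(inputs)
    return {
        "inputs_hash": fingerprint(inputs),
        "model_version": model_version,
        "policy_version": policy_version,
        "output": output,
        "trace_hash": fingerprint([inputs, model_version, policy_version, output]),
    }


def replay_matches(record: Dict, inputs: Dict, decide: Callable[[Dict], str]) -> bool:
    """Re-run with the given inputs and stored versions; True if the trace reproduces."""
    again = traced_decision(inputs, record["model_version"], record["policy_version"], decide)
    return again["trace_hash"] == record["trace_hash"]


# Toy rule standing in for a model plus policy (hypothetical thresholds).
def credit_rule_v1(x: Dict) -> str:
    return "approve" if x["score"] >= 650 and x["dti"] <= 0.4 else "decline"
```

In a real pipeline the stored versions would have to resolve to the exact model artifact and policy text in force at decision time; otherwise a replay only proves the current code, not the historical decision.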

Trend-specific CTA

If your CEO asked “why did we decline this customer, approve that payout, or block that payment?”, can you answer with one consistent definition of ‘customer’ and a reproducible decision trail—across every system and jurisdiction?

Trend #31: Composable Architecture, API-First & Platform Engineering

BFSI is shifting from building applications to running product platforms—with governed reuse. In 2026–2028, competitive advantage comes less from individual digital experiences and more from the institution’s ability to assemble capabilities quickly and safely: onboarding, payments, lending, servicing, claims, fraud interventions—delivered as versioned APIs and reusable components with audit-grade controls. The winners won’t be the firms with the most microservices. They’ll be the ones that treat architecture as a product system: API product management + platform engineering + reusable control patterns.

The shift is from project-by-project delivery to composable platforms with “golden paths.” For years, “API-first” meant exposing services. In 2026–2028, API-first becomes an operating model: products are assembled from standardized building blocks—identity, consent, pricing, limits, ledger, payments routing, dispute workflows—each delivered with clear contracts, SLAs, and governance. This is where platform engineering becomes decisive. If every team builds its own pipelines, logging, secrets, policy checks, and approval flows, you don’t get speed—you get fragmentation. The winning pattern is a shared platform that gives teams guardrailed autonomy: discover → compose → ship → observe → govern. Composable architecture isn’t about “more services.” It’s about predictable change under regulation.

Three forces converge here:

- Embedded finance and agentic commerce demand machine-callable products, not brochures. When partners and agents originate transactions, BFSI products must be callable: quote, approve, pay, finance, insure—via stable APIs with explicit semantics, errors, and audit traces. This aligns with open API ecosystems and standardized data-sharing patterns that reduce integration friction. (FDX is a reference signal for interoperable financial data sharing.) https://financialdataexchange.org/

- Governance is moving into the build pipeline (controls-as-code needs platform primitives). Controls-as-code (Trend #7) only scales when platform primitives exist: standardized authN/authZ, logging, retention, approvals, separation of duties, evidence capture, and policy enforcement. Platform engineering makes governance reusable—so compliance becomes a shared capability, not a per-team negotiation.

- Complexity is now the primary operational risk in distributed systems. Microservices without platform discipline create “distributed monoliths”: inconsistent contracts, brittle dependencies, and incident chaos. Platform engineering communities codify patterns for paved roads, reliability, and delivery governance. (CNCF’s Platform Engineering and Internal Developer Platform work is a strong reference baseline.) https://tag-app-delivery.cncf.io/whitepapers/platform-engineering/ https://platformengineering.org/

Business impact

- Cost & efficiency: Reuse becomes measurable operating leverage. Lower cost per product launch when identity, consent, KYC, risk checks, logging, and approvals are reusable components. Reduced integration and remediation costs through standardized contracts and versioning.

- Speed & adaptability: Teams move faster because the platform absorbs complexity. Faster partner onboarding and ecosystem distribution when APIs are productized (documentation, SLAs, monitoring, lifecycle management). Faster experimentation because “golden paths” minimize bespoke security/compliance work.

- Risk & resilience: Reliability and auditability become built-in, not bolted on. Stronger observability and dependency management reduce incident MTTR. Better evidencing because calls are traceable, versioned, and policy-bound.

- Revenue & differentiation: Composable capability becomes a distribution advantage. You can show up inside platforms with consistent eligibility, pricing, and servicing—driving attach economics (embedded lending, embedded insurance, treasury platforms). “Time-to-partner” becomes a competitive weapon.

CXO CTAs

- Treat APIs as products, not integration artifacts. Define API owners, SLAs, versioning policy, deprecation rules, monetization/chargeback (where relevant), and usage analytics. Measure adoption and reliability as you would a P&L asset.

- Build an Internal Developer Platform (IDP) with golden paths. Standardize CI/CD, policy checks, secrets, observability, and audit evidence so teams can ship safely without reinventing controls. (Reference patterns: CNCF platform engineering guidance.) https://tag-app-delivery.cncf.io/whitepapers/platform-engineering/

- Standardize reusable “control components.” Create drop-in modules for consent, retention, approvals, separation of duties (SoD), logging, redaction, and reversible execution, so every new workflow is compliant by default (ties to Trend #7 and Trend #30).

- Implement dependency discipline: contract testing + runtime governance. Require contract tests, schema registries, and backward-compatibility guarantees. Treat breaking changes as risk events with formal approvals and rollback plans.

- Design for composability across partners and sovereignty regimes. Ensure APIs support policy-driven routing, data-residency constraints, and auditable execution locations, so composable architecture works across fragmented regulatory environments (ties to Trend #2).
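Treating breaking changes as risk events presupposes that they can be detected automatically. Below is a minimal sketch of a backward-compatibility check for a request payload schema. It assumes schemas are kept as plain dicts mapping each field name to a type and a required flag, a hypothetical format standing in for a real schema registry. It also uses a deliberately simplified rule set: removing a field, changing its type, or adding a new required field counts as breaking, while adding an optional field does not.

```python
def breaking_changes(old, new):
    """List changes between two payload-schema versions that are treated as breaking.

    Schemas map field name -> {"type": str, "required": bool}; this format and the
    rule set below are simplified assumptions, not a specific registry's semantics.
    """
    problems = []
    for name, spec in old.items():
        if name not in new:
            problems.append(f"removed field: {name}")
        elif new[name]["type"] != spec["type"]:
            problems.append(f"type change: {name} {spec['type']} -> {new[name]['type']}")
    for name, spec in new.items():
        if name not in old and spec["required"]:
            problems.append(f"new required field: {name}")  # existing clients will not send it
    return problems


v1 = {"amount": {"type": "decimal", "required": True}, "currency": {"type": "string", "required": True}}
v2 = {**v1, "memo": {"type": "string", "required": False}}  # additive and optional
v3 = {"amount": {"type": "integer", "required": True}, "channel": {"type": "string", "required": True}}

print(breaking_changes(v1, v2))  # []
print(breaking_changes(v1, v3))
# ['type change: amount decimal -> integer', 'removed field: currency', 'new required field: channel']
```

In a CI pipeline, a non-empty result could block the merge unless a formal approval and rollback plan is attached. That turns the “breaking changes as risk events” rule into an enforced gate rather than a convention.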

Trend-specific CTA

If a platform partner asked you to embed lending, payments, and insurance in 90 days—with audit-grade controls—could your organization assemble it from governed building blocks, or would you start another bespoke project?

Trend #32: Cloud Strategy, Observability & Site Reliability Engineering

Cloud is shifting from a destination to a portfolio, and reliability becomes the operating system. In 2026–2028, BFSI winners won’t be defined by “cloud adoption %.” They’ll be defined by workload placement discipline (cost/latency/compliance), end-to-end observability as evidence, and SRE operating models that keep 24×7 money movement and agentic workflows stable under continuous change. The shift is from “move to cloud for efficiency” to “run a hybrid, policy-driven execution fabric” in which resilience and cost are continuously optimized rather than renegotiated annually.


The shift is from cloud migration programs to cloud operations as a control plane—measured by SLOs and unit economics. Most institutions built cloud capability as infrastructure modernization: landing zones, migrations, and platform builds. In 2026–2028, that’s table stakes. The hard part is operational: AI agents, real-time payments, embedded distribution, and sovereign constraints require a cloud strategy that behaves like a portfolio: place → observe → optimize → recover → prove. Observability stops being “ops tooling” and becomes audit-grade evidence: you must prove where execution occurred (sovereignty), what happened (incident reconstruction), and why outcomes were produced (decision traceability). SRE becomes the operating cadence that makes continuous change survivable.
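The “place” step of that loop can be expressed as policy rather than judgment. The minimal illustration below assumes that each environment is described by the jurisdiction it runs in, a measured p99 latency, and a unit cost. The class names, fields, and sample figures are hypothetical. The point is the shape of the decision: hard constraints (residency, latency) filter first, economics choose among what remains, and the reason is returned so it can be logged as evidence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    name: str
    region: str               # jurisdiction where execution physically occurs
    p99_latency_ms: float     # measured latency for this workload's users or rails
    cost_per_1k_calls: float  # unit cost used for optimization


@dataclass(frozen=True)
class Workload:
    name: str
    allowed_regions: frozenset  # data/model residency constraint
    max_latency_ms: float


def place(workload, environments):
    """Choose the cheapest environment that meets residency and latency rules.

    Returns (environment name, reason) so the placement decision can itself be
    logged as evidence. Raises ValueError when nothing qualifies: a placement
    exception for a named risk owner, not a silent override.
    """
    eligible = [
        env
        for env in environments
        if env.region in workload.allowed_regions and env.p99_latency_ms <= workload.max_latency_ms
    ]
    if not eligible:
        raise ValueError(f"no compliant placement for {workload.name}")
    best = min(eligible, key=lambda env: env.cost_per_1k_calls)
    return best.name, f"lowest cost of {len(eligible)} compliant option(s), region={best.region}"


ENVIRONMENTS = [
    Environment("public-cloud-us", "US", 40.0, 0.8),
    Environment("public-cloud-eu", "EU", 35.0, 1.1),
    Environment("on-prem-eu", "EU", 12.0, 2.5),
]
print(place(Workload("eu-instant-payments", frozenset({"EU"}), 20.0), ENVIRONMENTS))
# ('on-prem-eu', 'lowest cost of 1 compliant option(s), region=EU')
```

In this toy data, a latency-sensitive EU payments workload lands on the more expensive on-premises environment because it is the only compliant option. A latency-tolerant EU reporting workload would land on the cheaper EU cloud region.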

Three forces converge here:

- Hybrid is becoming structural, not a temporary stage. Regulatory divergence, data/model residency, latency constraints, and cost realism push institutions toward hybrid and multi-environment placement. The strategic capability is policy-driven placement and portability, not ideological “all-in” positions. (The EU Digital Operational Resilience Act, DORA, is a forcing function for disciplined ICT risk management and third-party dependency oversight.) https://finance.ec.europa.eu/regulation-and-supervision/financial-services-legislation/digital-operational-resilience-act-dora_en https://eur-lex.europa.eu/eli/reg/2022/2554/oj

- Observability becomes mandatory when systems act at machine speed. With agentic workflows and always-on rails, failures cascade faster and disputes are evidence-driven. Institutions need consistent telemetry across services, events, data pipelines, and model/agent actions. OpenTelemetry is becoming the de facto standard for portable traces, metrics, and logs, which is critical in hybrid estates. https://opentelemetry.io/ https://opentelemetry.io/docs/

- Reliability is the product: SRE becomes the control discipline for 24×7 BFSI. Real-time rails force a 24×7 operating model (treasury, fraud, payments, claims, servicing). SRE formalizes this through SLOs, error budgets, incident response, and blameless postmortems, so speed doesn’t break trust. Google’s SRE foundations remain the reference playbook for turning reliability into an engineering discipline. https://sre.google/books/ https://sre.google/sre-book/table-of-contents/
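The error-budget arithmetic is simple enough to show directly. For an availability SLO of 99.9% over a 30-day window, the budget is (1 - 0.999) × 30 × 24 × 60 = 43.2 minutes of unavailability. The sketch below computes three things. The first is the budget itself. The second is the burn rate, which is the observed error ratio divided by the allowed error ratio, so a burn rate of 1.0 exhausts the budget exactly at the end of the window. The third is a simplified release gate in the spirit of an error-budget policy. The function names and the gate rule are illustrative assumptions.

```python
def error_budget_minutes(slo, window_days=30):
    """Minutes of unavailability allowed in the window for an availability SLO (e.g. 0.999)."""
    return (1 - slo) * window_days * 24 * 60


def burn_rate(observed_error_ratio, slo):
    """Budget consumption speed: 1.0 means the budget runs out exactly at window end."""
    return observed_error_ratio / (1 - slo)


def release_allowed(slo, bad_minutes_so_far, window_days=30):
    """Simplified gate (assumption): ship feature changes only while budget remains."""
    return bad_minutes_so_far < error_budget_minutes(slo, window_days)


print(round(error_budget_minutes(0.999), 1))          # 43.2
print(round(burn_rate(0.002, 0.999), 2))              # 2.0
print(release_allowed(0.999, bad_minutes_so_far=50))  # False
```

A burn rate of 2.0 means the service would exhaust a 30-day budget in about 15 days. That is the kind of signal that lets reliability, rather than a calendar freeze, govern release velocity.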

Business impact

- Cost & efficiency: Cloud spend becomes a controllable operating variable, or a margin leak. Placement discipline reduces the “default cloud tax” (over-provisioning, egress surprises, duplicated tooling). Observability reduces mean time to isolate (MTTI) and mean time to recover (MTTR), cutting outage cost and remediation burden.

- Speed & adaptability: Release velocity survives when SLOs govern change. Teams ship faster with clear reliability guardrails (error budgets) rather than ad hoc “freeze culture.” Hybrid portability increases optionality under sovereign constraints and vendor concentration risk.

- Risk & resilience: Telemetry becomes evidence; resilience becomes measurable. Strong tracing enables audit-grade incident reconstruction and supports regulatory expectations for operational resilience. SRE reduces systemic risk by shrinking blast radius and institutionalizing response playbooks.

- Revenue & differentiation: Uptime and predictable performance become partner currency. Platforms and enterprise clients choose institutions that can prove reliability and containment—especially for embedded finance, real-time payments, and treasury platforms.

CXO CTAs

- Run cloud as a portfolio with placement policy, not as a one-way migration. Define workload placement rules by latency, compliance/residency, criticality, and unit economics. Treat placement exceptions as risk decisions with named accountability.

- Make SLOs board-visible for critical journeys. Define SLOs for payments, onboarding, servicing, claims, and fraud controls. Use error budgets to govern release velocity and prioritize reliability work. (Reference foundation: Google’s SRE books.) https://sre.google/books/

- Standardize observability across hybrid estates using open standards. Adopt consistent tracing/metrics/logs conventions and require end-to-end correlation IDs across services, events, and agent actions. (Baseline: OpenTelemetry.) https://opentelemetry.io/

- Engineer resilience patterns as defaults: failover, graceful degradation, and safe-fail. Design for “degrade but don’t break” on customer-critical paths (payments, auth, fraud scoring, servicing). Require tested runbooks and routine game days for critical services.

- Tie cloud economics to business journeys (FinOps discipline). Move from aggregate cloud spend to unit cost per journey (onboarding, dispute, claim, payment, decision). Use showback/chargeback and optimization backlogs so product teams own cost outcomes. (Baseline: the FinOps Foundation framework.) https://www.finops.org/introduction/what-is-finops/ https://www.finops.org/framework/
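Moving from aggregate spend to unit cost per journey is mostly an allocation question. The minimal sketch below assumes that direct costs are already tagged to journeys and that shared platform cost is allocated in proportion to journey volume. Volume is only one of many possible allocation keys, and the function name and figures are hypothetical.

```python
def unit_cost_per_journey(direct_cost, shared_cost, volume):
    """Unit cost = (directly tagged cost + allocated share of platform cost) / journey count.

    direct_cost: journey -> cost tagged to that journey's resources
    shared_cost: untagged platform cost (e.g. observability, networking, landing zones)
    volume: journey -> completed journeys in the same period
    """
    total_volume = sum(volume.values())
    unit_costs = {}
    for journey, count in volume.items():
        if count == 0:
            continue  # skip journeys with no completions in the period
        allocated = shared_cost * count / total_volume  # volume-proportional allocation
        unit_costs[journey] = (direct_cost.get(journey, 0.0) + allocated) / count
    return unit_costs


print(unit_cost_per_journey(
    direct_cost={"onboarding": 12000.0, "dispute": 6000.0},
    shared_cost=10000.0,
    volume={"onboarding": 4000, "dispute": 1000},
))  # {'onboarding': 5.0, 'dispute': 8.0}
```

The allocation key is a governance choice, not a technical one. Whichever key is chosen, publishing it alongside the showback numbers is what lets product teams accept ownership of the result.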

Trend-specific CTA

If regulators asked you to prove where a transaction/decision executed, and your CEO asked you to cut outage minutes in half—do you have SLO-governed operations and telemetry-as-evidence, or just more dashboards?

META FORCE VII — EXPERIENCE, WORKFORCE & SUSTAINABILITY (2026–2028)

Trend #33: Customer & Workforce Experience Transformation

Experience is shifting from “digital journeys” to intent execution—where the interface becomes an agent and the metric becomes outcomes. In 2026–2028, BFSI experience strategy stops being a channel roadmap and becomes an execution system: AI anticipates intent, orchestrates next-best-actions across products, and completes work end-to-end—with audit-grade transparency. At the same time, workforce experience becomes a throughput lever: copilots and agents reduce manual load, but only if operating models, controls, and skills are redesigned. The winners won’t be the firms with the prettiest apps—they’ll be the ones that turn experience into a governed performance engine for customers and employees.


The shift is from UX as interface to UX as orchestration—powered by agents, governed by trust, measured by outcomes. For years, banks and insurers optimized experiences around steps: fewer clicks, better NPS, digitized forms. In 2026–2028, the experience layer becomes a control loop: detect intent → verify identity → execute action → explain outcome → log evidence. That is true for customers (disputes, onboarding, claims, servicing, advice) and for employees (case handling, compliance tasks, underwriting, collections). The new experience stack is built on three foundations: intent, orchestration, and trust.
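The control loop described above can be sketched in a few lines. This minimal illustration assumes that intent has already been detected upstream (for example, by a conversational agent) and arrives as a structured object. `Intent`, `handle_intent`, and the `open_dispute` executor are hypothetical names. The property worth noticing is ordering. Nothing executes before identity is verified, every path produces a customer-facing explanation, and every path writes evidence, including the blocked ones.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    customer_id: str
    action: str               # already detected upstream, e.g. "dispute_card_transaction"
    params: dict
    identity_verified: bool = False


def handle_intent(intent, executors, evidence):
    """verify identity -> execute action -> explain outcome -> log evidence."""
    if not intent.identity_verified:
        outcome = "blocked"
        explanation = "We could not verify your identity; please complete step-up authentication."
    elif intent.action not in executors:
        outcome = "handed_off"
        explanation = "This request cannot be completed automatically; it has been routed to a specialist."
    else:
        outcome, explanation = executors[intent.action](intent.params)
    evidence.append({
        "customer_id": intent.customer_id,
        "action": intent.action,
        "outcome": outcome,
        "explanation": explanation,
    })
    return explanation


def open_dispute(params):
    # Hypothetical executor; a real one would call card and case-management systems.
    return "dispute_opened", f"Dispute opened for transaction {params['txn_id']}."


log = []
executors = {"dispute_card_transaction": open_dispute}
print(handle_intent(Intent("c-42", "dispute_card_transaction", {"txn_id": "t-9"}), executors, log))
print(handle_intent(Intent("c-42", "dispute_card_transaction", {"txn_id": "t-9"}, True), executors, log))
```

The first call is blocked and prints the step-up message. The second completes and prints "Dispute opened for transaction t-9." Both leave an entry in `log`.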

Three forces converge here:

- Agents compress the journey, so experience becomes “completion,” not “navigation.” When customers interact via conversational interfaces and AI agents, the “journey” is no longer a page flow; it is an execution workflow that spans identity, policy, risk controls, and core systems. This is why agentic commerce and agentic servicing require machine-callable product surfaces and traceable decisions (ties directly to Trend #17 and Trend #7).

- Trust-first regulation and scam pressure force “protective friction” design. Fraud and scams are pushing institutions toward calibrated safeguards (step-up auth, holds, confirmations, verified comms). The experience challenge is hard: add friction without breaking conversion. This is where behavioral design and risk-tiered UX become strategic.

- Workforce experience becomes an operating-model redesign, not a tooling rollout. Copilots and agents change how work is done: fewer queues, more supervision of automation, more exception handling, more quality control. The workforce shift is from “individual productivity” to team throughput with measurable unit economics (ties directly to Trend #5, AI economics). (Major industry research and outlooks consistently emphasize experience and personalization; WEF coverage of customer trust and digital systems offers useful framing for why trust becomes a competitive foundation.) https://www.weforum.org/agenda/archive/financial-and-monetary-systems/ https://www.weforum.org/agenda/archive/cybersecurity/
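Risk-tiered friction can be expressed as a small, testable policy rather than scattered UI logic. The minimal sketch below assumes an upstream model produces a scam/fraud risk score between 0 and 1. The thresholds and step names are hypothetical. In practice they would be tuned against both fraud-loss reduction and conversion impact.

```python
def friction_for(risk_score, new_payee):
    """Map a scam/fraud risk score (0.0 to 1.0) to protective friction steps.

    Thresholds and step names are illustrative assumptions, not recommended values.
    """
    steps = []
    if new_payee:
        steps.append("confirmation_of_payee")   # check the payee name against the account
    if risk_score >= 0.3:
        steps.append("scam_warning")            # guided, context-specific warning
    if risk_score >= 0.6:
        steps.append("step_up_authentication")
    if risk_score >= 0.85:
        steps.append("cooling_off_hold")        # delay release so the customer can reconsider
    return steps


print(friction_for(0.1, new_payee=False))  # [] : low risk, no added friction
print(friction_for(0.7, new_payee=True))   # ['confirmation_of_payee', 'scam_warning', 'step_up_authentication']
```

Because the policy is a pure function, the same thresholds can be replayed against historical transactions. That replay can estimate both scam losses avoided and conversion lost before a threshold change goes live.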

Business impact

- Cost & efficiency: Experience becomes the cost-to-serve engine. Automated resolution and guided servicing reduce contact volume and handle time. Workforce copilots reduce rework and improve consistency (fewer errors, fewer escalations).

- Speed & adaptability: Journeys become configurable workflows. Changes ship as orchestration updates and policy bindings, not multi-quarter UI rewrites. Faster partner/embedded expansions because experience is API- and workflow-driven.

- Risk & resilience: Bad experiences become regulated events. Complaints, disputes, and scam harms drive supervisory and reputational exposure. Audit-grade decision traces and verified communications become experience requirements—not compliance add-ons.

- Revenue & differentiation: Outcomes win loyalty—not brand. Faster resolution, proactive prevention, and personalized guidance drive retention and cross-sell. Institutions that can execute intent safely become preferred partners in embedded ecosystems.

CXO CTAs

- Redefine experience KPIs around outcomes, not clicks. Track completion rate, time-to-resolution (claims/disputes), first-contact resolution, complaint rate per 10k, scam-loss avoided, and “time-to-safe-completion” for high-risk actions.

- Build an “Intent Orchestration Layer” across channels. Treat orchestration as a platform (identity, consent, eligibility, policy checks, and execution steps), so the same intent can complete via app, call center, agent, or partner experience.

- Design risk-tiered friction as a product capability. Implement protective UX patterns (step-up by risk, cooling-off holds, confirmation of payee, verified comms, and guided warnings), measured for both fraud reduction and conversion impact.

- Turn workforce copilots into a throughput operating model. Define where copilots assist vs. act; set QA and escalation thresholds; measure productivity by team throughput and error rate, not “usage.” Establish “agent supervisor” as a defined role.

- Make explainability and evidence part of the experience. Every consequential outcome should include a customer-facing explanation and an internal audit trail: what inputs mattered, what policy applied, and what options exist to appeal.
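One way to make “explanation plus evidence” a single artifact is to capture each consequential decision once and render two views from it. The minimal sketch below assumes a frozen record type. `DecisionRecord`, its fields, and the sample claim are hypothetical. The customer view names the outcome, the main factors, and the appeal routes. The audit view keeps the input values and the policy version applied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    outcome: str
    key_inputs: dict       # inputs that materially influenced the outcome
    policy_id: str         # policy or rule version that was applied
    appeal_options: tuple  # what the customer can do next

    def customer_explanation(self):
        """Plain-language view: outcome, main factors, and options to appeal."""
        factors = ", ".join(sorted(self.key_inputs))
        options = "; ".join(self.appeal_options)
        return f"Outcome: {self.outcome}. Main factors: {factors}. Your options: {options}."

    def audit_entry(self):
        """Internal view: what is needed to reconstruct and defend the decision."""
        return {
            "decision_id": self.decision_id,
            "outcome": self.outcome,
            "policy_id": self.policy_id,
            "inputs": dict(self.key_inputs),
            "appeal_options": list(self.appeal_options),
        }


record = DecisionRecord(
    decision_id="d-1001",
    outcome="claim partially approved",
    key_inputs={"policy excess": 250, "coverage limit": 5000},
    policy_id="claims-rules-v7",
    appeal_options=("request a human review", "submit additional documents"),
)
print(record.customer_explanation())
```

Rendering both views from one record means the explanation a customer sees cannot drift from the evidence an auditor reviews.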

Trend-specific CTA

If customers increasingly express intent through agents—and regulators expect outcome-proof—can your experience layer execute, explain, and evidence decisions end-to-end, or does it still just route people through screens and queues?

Trend #34: Organizational Redesign & the War for AI Talent