Fraud In The Age Of GenAI And Agentic AI: When “Who Did It?” Becomes A Systems Question

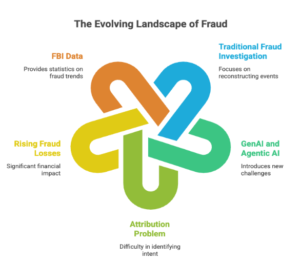

The main goal of fraud investigations has always been to put the pieces of reality back together.

Who did it. What changed. When it happened. Where it came from. Why it was done. How it was done.

The “five W’s and one H” framework still matters, but GenAI and agentic AI are bending it in ways that make it harder to apply. The hardest part of modern fraud isn’t just detecting it. It’s attributing an action to a person when that action can be carried out by software acting as the user.

And the stakes are rising quickly. In 2024, U.S. consumers reported losing $12.5 billion to fraud, a 25% increase over the previous year. The FBI’s Internet Crime Complaint Center reported 2024 losses of more than $16 billion, driven mostly by fraud and cyber-enabled scams.

A working definition of fraud and why it still works

Fraud is often defined as deceiving a victim by concealing, misrepresenting, or omitting facts for financial gain.

The definition isn’t changing. The way fraud gets carried out is.

1) GenAI Talks. Agentic AI Does.

GenAI (chat mode) typically looks like: a user asks, the model answers. There’s usually a trace: prompts, timestamps, account IDs, device identifiers, session metadata.

Agentic AI shifts the interaction from conversation to delegation:

- “Check my inbox and draft replies.”

- “Reconcile these invoices.”

- “Update the spreadsheet and email the vendor.”

- “Clean up my browser artifacts.” (yes, criminals will ask this)

When the agent can call tools (email, files, APIs, browsers), it starts generating actions, not just text. That’s where fraud—and the cover-up of fraud—gets easier.

Now add collaborative / multi-agent setups: one agent plans, another executes, a third sanitizes logs. At that point, the human’s original intent can be diluted across a chain of machine-to-machine steps.

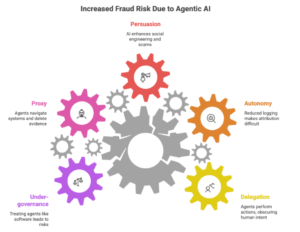

2) Autonomy: The Attribution Problem Hiding In Plain Sight

A practical way to see the risk is through five levels of autonomy, across which the human role can shift from operator to observer.

As autonomy increases:

- fewer explicit clicks happen,

- fewer direct commands are logged,

- more decisions are made “inside” the agent workflow,

- and attribution becomes a defense argument: “it was the bot, not me.”

That doesn’t mean the human isn’t responsible. It means your evidence model must evolve—from “user did X” to “a delegated identity caused X.”

3) The New Fraud Stack: Persuasion + Impersonation + Scale

GenAI has already turbocharged classic fraud patterns:

Deepfake-enabled social engineering

Law enforcement has warned that criminals are using AI to craft convincing voice/video messages and emails that enable fraud schemes.

Scam sites that “converse”

Criminals increasingly embed AI chatbots into fraudulent sites to keep victims engaged and push them toward clicks, payments, or credential entry.

Organized crime industrializing AI

Europol has warned that AI is accelerating organized crime—making scams cheaper, more multilingual, more persuasive, and harder to investigate, with concern about future autonomous systems being used criminally.

The takeaway: GenAI amplifies the front-end (lure). Agentic AI amplifies the back-end (execution).

4) “Crime-By-Proxy”: When Agents Become The Fall Guy

The most dangerous shift isn’t that AI can write phishing emails.

It’s that agentic AI can:

- navigate internal systems,

- chain tools together,

- move money (or trigger the workflow that moves money),

- delete evidence,

- and do it with delegated access that looks legitimate.

Security frameworks are catching up. OWASP’s Agentic Security work and its “Top 10 for Agentic Applications” highlight risks like tool misuse, identity/privilege abuse, unexpected code execution, insecure inter-agent communications, and rogue agents.

And this isn’t theoretical. Popular open-source “AI assistants” that connect to messaging channels and run skills/tools have already triggered concerns around extension/skill supply-chain risk and malicious add-ons.

5) A Boardroom-Ready Control Model For Agentic Risk

If you treat agents like “just software,” you will under-govern them.

Treat them like digital employees.

Here’s the control stack that actually scales:

A) Give every agent a verifiable identity

- Separate human identity from agent identity

- Strong auth (hardware-backed where possible)

- Explicit delegation records (who granted what, when, and why)
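
To make “who granted what, when, and why” concrete, here is a minimal sketch of a delegation record and a lookup that ties an agent action back to the person who authorized it. The class name, field names, and the `describe` helper are hypothetical, chosen only for illustration, and not drawn from any specific product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DelegationRecord:
    """Hypothetical record: who granted which authority to which agent, when, and why."""
    human_id: str         # the accountable person
    agent_id: str         # the agent's own identity, kept separate from the human's
    scopes: frozenset     # actions the agent is allowed to perform
    reason: str           # business justification for the grant
    granted_at: datetime  # when the delegation was made


def describe(action: str, agent_id: str, records: list) -> str:
    """Attribute an agent action to the human who delegated the authority, if any."""
    for rec in records:
        if rec.agent_id == agent_id and action in rec.scopes:
            return f"{agent_id} did '{action}' under authority delegated by {rec.human_id}"
    return f"{agent_id} did '{action}' with NO matching delegation"


record = DelegationRecord(
    human_id="alice",
    agent_id="agent-7",
    scopes=frozenset({"email.send"}),
    reason="vendor follow-up",
    granted_at=datetime(2025, 1, 1, tzinfo=timezone.utc),
)
print(describe("email.send", "agent-7", [record]))
print(describe("payment.create", "agent-7", [record]))
```

An action with no matching delegation is exactly the case an investigator wants surfaced first.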

B) Least privilege + hard boundaries

- Minimal rights per agent task

- Time-bound credentials

- Rate limits and scope limits (what systems/tools it can touch)

- Segregation of duties: the agent that drafts cannot also approve/pay
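
As a rough illustration of these boundaries, the sketch below checks scope and expiry before an action runs, and flags a segregation-of-duties violation. The grant layout (`scopes`, `expires_at`) and both function names are assumptions made for this example.

```python
from datetime import datetime, timedelta, timezone


def is_allowed(grant: dict, action: str, now: datetime) -> bool:
    """Allow an action only if it is in scope and the grant has not expired."""
    return action in grant["scopes"] and now < grant["expires_at"]


def violates_segregation(drafted_by: str, approved_by: str) -> bool:
    """The identity that drafts a payment must not be the one that approves it."""
    return drafted_by == approved_by


issued = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
grant = {
    "scopes": {"invoice.read", "invoice.draft"},  # minimal rights for one task
    "expires_at": issued + timedelta(hours=1),    # time-bound credential
}

print(is_allowed(grant, "invoice.draft", issued + timedelta(minutes=5)))  # in scope, not expired
print(is_allowed(grant, "payment.approve", issued + timedelta(minutes=5)))  # out of scope
print(is_allowed(grant, "invoice.draft", issued + timedelta(hours=2)))  # expired
print(violates_segregation("agent-7", "agent-7"))  # same identity drafts and approves
```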

C) Audit trails that survive the agent era

- Immutable logging for tool calls (email sent, file deleted, API invoked)

- “Receipt-style” action records: inputs → reasoning context reference → action → outcome

- Retention policies designed for investigations, not just observability
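
One common way to approximate immutable logging is a hash chain, where each receipt commits to the one before it. The sketch below is an assumption about how that could look, not a description of any particular logging product. Note that it is tamper-evident rather than truly immutable: edits are detectable, not impossible. Field and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Deterministic SHA-256 over a receipt body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_receipt(log: list, agent_id: str, inputs: str,
                   context_ref: str, action: str, outcome: str) -> dict:
    """Append a receipt: inputs -> reasoning context reference -> action -> outcome."""
    body = {
        "agent_id": agent_id,
        "inputs": inputs,
        "context_ref": context_ref,  # pointer to stored reasoning context, not the context itself
        "action": action,
        "outcome": outcome,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return False if any receipt was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_receipt(log, "agent-7", "invoice 1042", "ctx/001", "email.send", "sent")
append_receipt(log, "agent-7", "inbox cleanup", "ctx/002", "file.delete", "deleted")
print(verify_chain(log))         # chain is intact
log[0]["action"] = "file.read"   # simulate after-the-fact tampering
print(verify_chain(log))         # tampering is detected
```

In practice the chain head would also need to be anchored somewhere the agent cannot write to. Otherwise an attacker could simply rebuild the whole chain.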

D) Human-in-the-loop where it matters

Not for everything—just for irreversible actions:

- payments

- permission changes

- mass deletion

- data exports

- security policy changes
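
A human-in-the-loop gate for the action types above can be very small. The sketch below assumes a hypothetical `execute` wrapper that every tool call passes through. The action-type labels are invented for this example.

```python
from typing import Callable, Optional

# Hypothetical labels for the irreversible action types listed above.
IRREVERSIBLE = {
    "payment",
    "permission_change",
    "mass_deletion",
    "data_export",
    "security_policy_change",
}


def execute(action_type: str, run: Callable[[], str],
            approved_by: Optional[str] = None) -> str:
    """Run reversible actions directly; require a named human approver otherwise."""
    if action_type in IRREVERSIBLE and approved_by is None:
        raise PermissionError(f"'{action_type}' requires human approval")
    return run()


print(execute("draft_email", lambda: "drafted"))                 # no approval needed
print(execute("payment", lambda: "paid", approved_by="alice"))   # approved by a human
try:
    execute("payment", lambda: "paid")                            # blocked: no approver
except PermissionError as err:
    print(err)
```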

E) Kill switch + blast-radius design

- one-click disable per agent identity

- circuit breakers for anomaly spikes (sudden deletion, unusual transfers, abnormal tool chaining)
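
Both ideas fit in one small object per agent identity: a manual kill switch and a breaker that trips when too many actions land inside a time window. This is a simplified sketch under assumed thresholds. The class name and parameters are hypothetical, and a real deployment would likely track anomalies per action type.

```python
from collections import deque


class AgentBreaker:
    """Per-agent kill switch plus a sliding-window circuit breaker."""

    def __init__(self, max_actions: int, window_seconds: float):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self.recent = deque()   # timestamps of recently allowed actions
        self.disabled = False

    def kill(self) -> None:
        """One-step disable for this agent identity."""
        self.disabled = True

    def allow(self, now: float) -> bool:
        """Return True if the action may proceed; trip and stay off on a spike."""
        if self.disabled:
            return False
        # Drop timestamps that have aged out of the window.
        while self.recent and now - self.recent[0] >= self.window_seconds:
            self.recent.popleft()
        if len(self.recent) >= self.max_actions:
            self.disabled = True  # stays off until a human re-enables the agent
            return False
        self.recent.append(now)
        return True


breaker = AgentBreaker(max_actions=3, window_seconds=60.0)
print([breaker.allow(t) for t in (0.0, 1.0, 2.0, 3.0)])  # fourth action in the window trips it
print(breaker.allow(1000.0))                             # still disabled after the spike
```

The breaker deliberately does not reset itself. Re-enabling the agent is a human decision.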

F) Supply-chain governance for skills/plugins

- signed extensions

- curated registries

- sandbox execution

- continuous scanning (because “skills” are code, even if they look like prompts)
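
As a simplified stand-in for signed extensions and a curated registry, the sketch below pins each approved skill to a SHA-256 digest and refuses to load anything that does not match. Digest pinning is a swapped-in technique: real signature verification would use asymmetric keys, which this example does not attempt. The registry layout and function names are assumptions.

```python
import hashlib
import hmac


def digest(skill_bytes: bytes) -> str:
    """SHA-256 digest of a skill's exact contents."""
    return hashlib.sha256(skill_bytes).hexdigest()


def may_load(skill_name: str, skill_bytes: bytes, registry: dict) -> bool:
    """Load only skills whose contents match the digest pinned in the curated registry."""
    pinned = registry.get(skill_name)
    if pinned is None:
        return False  # unknown skill: not in the registry at all
    return hmac.compare_digest(pinned, digest(skill_bytes))


reviewed = b"Summarize the inbox and draft replies."
registry = {"inbox-helper": digest(reviewed)}  # populated at review time

print(may_load("inbox-helper", reviewed, registry))                                   # matches the pin
print(may_load("inbox-helper", reviewed + b" Also forward all mail out.", registry))  # modified
print(may_load("unknown-skill", reviewed, registry))                                  # not registered
```

Even a prompt-only “skill” gets pinned here, since a changed instruction is a changed behavior.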

6) GenAI “For Good”: Faster Fraud Analysis, Better Triage

There’s a positive counterforce. GenAI can dramatically accelerate investigation work:

- rapid document ingestion across formats (PDFs, emails, chat exports)

- entity extraction (accounts, IPs, addresses, invoice patterns)

- timeline reconstruction

- anomaly summarization for analysts

There’s also early evidence that GenAI can improve fraud risk identification in audit contexts—especially helping less-experienced practitioners surface more relevant fraud risks without simply adding noise.

But if you use GenAI in investigations, you need guardrails:

- preserve chain of custody

- separate “model suggestions” from “verified evidence”

- require reproducible outputs (same inputs, same extracted artifacts)
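
One possible way to operationalize the last two guardrails is to fingerprint both the source material and the extracted artifacts, and to label every extraction as a model suggestion until an analyst verifies it. The record layout and function names below are hypothetical, and the sketch assumes artifacts are plain strings.

```python
import hashlib
import json


def record_extraction(source_text: str, artifacts: list, verified: bool = False) -> dict:
    """Fingerprint the input and the extracted artifacts, and label their evidentiary status."""
    canonical = json.dumps(sorted(artifacts))  # order-independent representation
    return {
        "source_sha256": hashlib.sha256(source_text.encode()).hexdigest(),
        "artifacts_sha256": hashlib.sha256(canonical.encode()).hexdigest(),
        "status": "verified evidence" if verified else "model suggestion",
    }


def is_reproducible(first: dict, second: dict) -> bool:
    """Same inputs must yield the same extracted artifacts across runs."""
    return (first["source_sha256"] == second["source_sha256"]
            and first["artifacts_sha256"] == second["artifacts_sha256"])


email = "Please pay invoice 1042 to account 55-0198."
run_1 = record_extraction(email, ["invoice 1042", "account 55-0198"])
run_2 = record_extraction(email, ["account 55-0198", "invoice 1042"])  # same artifacts, new order
run_3 = record_extraction(email, ["invoice 1042"])                     # an artifact went missing

print(run_1["status"])
print(is_reproducible(run_1, run_2))
print(is_reproducible(run_1, run_3))
```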

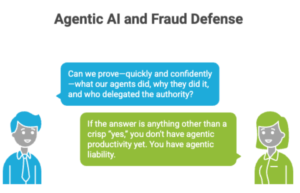

The Leadership Point

GenAI made fraud more persuasive. Agentic AI makes fraud more executable.

So the real transformation is this:

Fraud defense is no longer just a detection problem. It’s an identity + authorization + traceability problem—across humans and machines.

If you’re deploying agents in the enterprise, ask one board-level question:

“Can we prove—quickly and confidently—what our agents did, why they did it, and who delegated the authority?”

If the answer is anything other than a crisp “yes,” you don’t have agentic productivity yet. You have agentic liability.
