Digital Products, Apps & Experience (2026–2028) - Part 2

Let’s now begin Part 2. Part 1 established the foundations of agentic products. Part 2 explores how to scale them with the right operating model, economics, governance, and control. The shift now is from architecture to execution at enterprise scale.

Trend #19: Unified AI Operations — ModelOps, AgentOps, AIOps, FinOps

From 2026 through 2028, “AI in production” stops being a model problem and becomes an operations discipline. You don’t just manage models—you manage agent behavior, tool access, context quality, cost, and incidents as one connected system. NIST’s AI RMF makes the core point: trustworthy AI requires ongoing governance across the lifecycle, not one-time approvals. (nvlpubs.nist.gov)

The winners will run AI like a critical production platform: observable, controlled, and economically managed.


The shift is from “deploying models” to “operating agentic systems.”

Three forces converge here:

1) Drift expands beyond models to prompts, tools, and behaviors

Model drift is only one failure mode. Prompts change, tools change, policies change, data changes—so agent behavior drifts even if the model version doesn’t. This is why lifecycle governance is now emphasized as a trust requirement. (nvlpubs.nist.gov)
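A minimal sketch of how a drift gate could detect that kind of change, assuming a hypothetical `AgentConfig` that bundles the model version, system prompt, tool schemas, and policy version. Each component is fingerprinted with SHA-256 over canonical JSON, so a changed tool limit or prompt shows up as drift even when `model_version` is identical. The names and fields are illustrative, not a standard schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class AgentConfig:
    """Hypothetical bundle of everything that shapes agent behavior, not just the model."""
    model_version: str
    system_prompt: str
    tool_schemas: dict = field(default_factory=dict)
    policy_version: str = "v1"


def fingerprint(component) -> str:
    """SHA-256 over canonical JSON (sorted keys), so equal content gives equal hashes."""
    payload = json.dumps(component, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def drifted_components(old: AgentConfig, new: AgentConfig) -> list:
    """Names of the components whose fingerprint changed between two configs."""
    old_parts, new_parts = asdict(old), asdict(new)
    return [name for name in old_parts if fingerprint(old_parts[name]) != fingerprint(new_parts[name])]


baseline = AgentConfig("model-2026-01", "You are a claims assistant.", {"refund": {"max_usd": 500}})
candidate = AgentConfig("model-2026-01", "You are a claims assistant.", {"refund": {"max_usd": 5000}})
print(drifted_components(baseline, candidate))  # ['tool_schemas']
```

In a pipeline, a non-empty result would route the change through staged rollout with a rollback path rather than a direct deploy.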

2) Observability must cover the whole chain of action

Enterprise operations need end-to-end traces: intent → context → reasoning → tool calls → side effects → outcome → rollback. Traditional APM is insufficient when the “app” is a dynamic agent workflow.
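The chain can be made concrete as a trace record. The sketch below is a hypothetical in-memory structure (not OpenTelemetry or any vendor's schema) that records each link and flags two kinds of telemetry gap: missing required stages and side effects with no rollback reference. Which stages count as required is an assumption, and `payments.refund` and `payments.reverse` are made-up tool names.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Stage(Enum):
    """Links in the chain of action, in the order listed above."""
    INTENT = "intent"
    CONTEXT = "context"
    REASONING = "reasoning"
    TOOL_CALL = "tool_call"
    SIDE_EFFECT = "side_effect"
    OUTCOME = "outcome"
    ROLLBACK = "rollback"


@dataclass
class Step:
    stage: Stage
    detail: str
    rollback_ref: Optional[str] = None  # how to undo this step, if it changed anything


@dataclass
class AgentTrace:
    trace_id: str
    steps: List[Step] = field(default_factory=list)

    def record(self, stage: Stage, detail: str, rollback_ref: Optional[str] = None) -> None:
        self.steps.append(Step(stage, detail, rollback_ref))

    def gaps(self) -> List[str]:
        """Telemetry defects: required links that are missing, or side effects with no undo path."""
        seen = {step.stage for step in self.steps}
        problems = [f"missing {s.value}" for s in (Stage.INTENT, Stage.CONTEXT, Stage.OUTCOME) if s not in seen]
        problems += [
            f"irreversible side effect: {step.detail}"
            for step in self.steps
            if step.stage is Stage.SIDE_EFFECT and step.rollback_ref is None
        ]
        return problems


trace = AgentTrace("t-123")
trace.record(Stage.INTENT, "refund order 42")
trace.record(Stage.CONTEXT, "order 42, card payment, within refund window")
trace.record(Stage.TOOL_CALL, "payments.refund(order=42)")
trace.record(Stage.SIDE_EFFECT, "refund issued", rollback_ref="payments.reverse(r-9)")
print(trace.gaps())  # ['missing outcome']
```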

3) Cost becomes a runtime control variable

Agentic systems create variable COGS (context size, model choice, retries, tool calls). FinOps practices must extend into routing, caching, and policy-based throttling to keep margins stable.
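Routing, caching, and throttling can live in one small control point. This is a minimal sketch under stated assumptions: the three model tiers, their prices, and the quality scores are hypothetical, the cache stores which tier served a prompt as a stand-in for a cached response, and “throttled” represents whatever the budget policy prescribes (defer, queue, or downgrade).

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ModelTier:
    name: str                 # hypothetical model identifier
    usd_per_1k_tokens: float  # illustrative price, not a real list price
    quality: int              # coarse capability score: 1 (low) to 3 (high)


TIERS = [
    ModelTier("small", 0.0005, 1),
    ModelTier("medium", 0.003, 2),
    ModelTier("large", 0.015, 3),
]


class CostRouter:
    """Routes each request to the cheapest adequate tier, reuses cached work, and throttles at a budget cap."""

    def __init__(self, budget_usd: float):
        self.budget_usd = budget_usd
        self.spent_usd = 0.0
        self.cache: Dict[str, str] = {}  # prompt -> tier that served it (stands in for a cached response)

    def route(self, prompt: str, tokens: int, min_quality: int) -> str:
        if prompt in self.cache:
            return "cache:" + self.cache[prompt]  # repeated work costs nothing
        adequate = [t for t in TIERS if t.quality >= min_quality]
        if not adequate:
            raise ValueError(f"no tier meets quality {min_quality}")
        tier = min(adequate, key=lambda t: t.usd_per_1k_tokens)
        cost = tier.usd_per_1k_tokens * tokens / 1000
        if self.spent_usd + cost > self.budget_usd:
            return "throttled"  # policy decision instead of silent overspend
        self.spent_usd += cost
        self.cache[prompt] = tier.name
        return tier.name


router = CostRouter(budget_usd=0.05)
print(router.route("summarize ticket 1", tokens=2000, min_quality=1))     # small
print(router.route("draft contract clause", tokens=2000, min_quality=3))  # large
print(router.route("summarize ticket 1", tokens=2000, min_quality=1))     # cache:small
print(router.route("draft renewal clause", tokens=2000, min_quality=3))   # throttled
```

Only the quality requirement, not the caller's preference, decides the tier; that is what keeps unit cost predictable as usage grows.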

Business impact

- Higher reliability: fewer production surprises because drift and unsafe behavior are detected early and gated. (nvlpubs.nist.gov)

- Faster incident response: unified traces shorten time-to-diagnose and reduce “unknown cause” outages.

- Lower cost volatility: model/tool routing and context controls stabilize unit economics.

- Stronger governance: auditable decision chains and policy enforcement reduce regulatory and security exposure.

CXO CTAs

- Unify ops ownership: one operating model spanning ModelOps + AgentOps + AIOps + FinOps, with shared SLOs and incident playbooks.

- Standardize runtime telemetry: trace every agent action with context provenance, tool calls, approvals, and outcomes—treat gaps as a production defect.

- Implement drift gates: monitor prompt drift, tool drift, and behavior drift; require staged rollout and rollback for changes.

- Make cost a policy: budget caps, routing rules, caching defaults, and throttles tied to business priority.

- Run “agent failure” drills: simulate prompt injection, runaway automation, and bad tool calls—measure time to contain and restore trusted operation.

Trend #20: Platform Engineering, Green Software & Digital Resilience

From 2026 through 2028, engineering competitiveness is increasingly determined by the platform you run, not the apps you build. Internal Developer Platforms (IDPs) become the delivery advantage; green software becomes a cost-and-compliance requirement; resilience becomes a product promise. Gartner’s 2026 trends explicitly elevate Green Software Engineering and Digital Resilience as strategic priorities—signaling these are moving from “nice to have” to board-visible constraints. (gartner.com)


The shift is from “teams owning delivery” to “platforms owning delivery quality, cost, and resilience.”

1) Platform engineering becomes the scaling mechanism

IDPs standardize golden paths (build, deploy, test, observe, secure) so every team can ship reliably without reinventing pipelines. The CNCF platform engineering framing reflects how organizations use platforms to improve developer experience and throughput at scale. (cncf.io)

2) Carbon becomes an engineering variable

As compute loads grow (especially AI), energy becomes a first-order design constraint. The Green Software Foundation defines principles and practices for reducing software-related emissions and treating sustainability as an engineering discipline, not a CSR slide. (greensoftware.foundation)

3) Resilience shifts from SRE practice to product commitment

Chaos engineering and resilience testing are moving from “advanced teams” to baseline expectations as digital dependency deepens. Google’s SRE thinking (and the broader resilience movement) reinforces that reliability is a feature you engineer continuously, not something you inspect after the fact. (sre.google)

Business impact

- Higher velocity with less chaos: teams ship faster because the platform removes friction and standardizes quality controls. (cncf.io)

- Lower cost-to-serve: efficient pipelines, right-sized environments, and energy-aware compute choices reduce operational burn—especially under AI workloads. (greensoftware.foundation)

- Improved resilience: fewer severe incidents and faster recovery when resilience is tested continuously and engineered into architectures. (sre.google)

- Stronger trust posture: sustainability and resilience increasingly influence enterprise buying and regulatory scrutiny; they become differentiators, not overhead. (gartner.com)

CXO CTAs

- Fund an Internal Developer Platform as a product: clear ownership, roadmap, adoption metrics, and “golden paths” for build/deploy/observe/secure. (cncf.io)

- Set resilience SLOs per critical journey: measure and engineer availability, latency, error budgets, and recovery—tie to release gates. (sre.google)

- Make carbon a design constraint: introduce software carbon intensity targets for major workloads; require energy-aware defaults in platform tooling. (greensoftware.foundation)

- Institutionalize chaos and failure drills: test failover, dependency loss, and degraded-mode operation—treat “unknown unknowns” as preventable. (sre.google)

- Report platform outcomes to the exec level: developer lead time, change failure rate, MTTR, and energy-per-transaction for key services. (gartner.com)
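The error-budget arithmetic behind “tie to release gates” is simple: an SLO of 99.9% over a window allows 0.1% of requests to fail, and the gate tightens as that allowance is consumed. The sketch assumes request-based availability; the 25% reserve and the three gate states are illustrative policy choices, loosely modeled on the error-budget policies described in Google's SRE material.

```python
def error_budget_remaining(slo: float, total_requests: int, failed_requests: int) -> float:
    """Fraction of the window's error budget still unspent (negative means overspent)."""
    allowed_failures = (1 - slo) * total_requests  # e.g. 99.9% of 1M requests allows ~1,000 failures
    if allowed_failures <= 0:
        raise ValueError("SLO leaves no error budget")
    return 1 - failed_requests / allowed_failures


def release_gate(slo: float, total_requests: int, failed_requests: int, reserve: float = 0.25) -> str:
    """Hypothetical gate policy: freeze when the budget is gone, restrict when it runs low."""
    remaining = error_budget_remaining(slo, total_requests, failed_requests)
    if remaining <= 0:
        return "freeze: reliability work only"
    if remaining < reserve:
        return "restricted: low-risk changes only"
    return "open"


for failed in (600, 800, 1200):
    print(failed, release_gate(slo=0.999, total_requests=1_000_000, failed_requests=failed))
# 600 open
# 800 restricted: low-risk changes only
# 1200 freeze: reliability work only
```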

Trend #21: AI Compute Fabric, Sovereignty & Data Infrastructure

From 2026 through 2028, AI stops being an “application layer” conversation and becomes infrastructure strategy: compute placement, isolation, data movement, latency, energy, and sovereignty determine what you can safely deploy—and at what unit cost. Gartner’s 2026 trends explicitly elevate geopatriation and hybrid computing realities, reinforcing that location/control constraints are now architectural fundamentals. (gartner.com)


The shift is from “cloud capacity” to an AI compute fabric you actively route and govern.

1) Hybrid AI becomes default: cloud + on-prem + edge

Physical AI, low-latency workflows, and regulated data force hybrid designs. NVIDIA’s direction on AI factories and accelerated computing underscores that modern AI deployment is increasingly tied to purpose-built infrastructure choices, not generic hosting. (nvidia.com)

2) Confidential computing and workload isolation become non-negotiable

As more sensitive data is used for inference and agents take actions, isolation boundaries matter (tenant isolation, enclaves, key control). Azure positions confidential computing as enabling data-in-use protection—critical for regulated inference and sensitive workloads. (learn.microsoft.com)

3) Carbon-aware and cost-aware routing becomes a runtime capability

AI COGS is volatile: model choice, context size, retries, and placement all change cost. Google’s guidance on carbon-aware computing and region-based carbon impact reflects the broader direction: routing decisions become both economic and sustainability decisions. (cloud.google.com)
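A routing control plane can be sketched as constraints plus a score: jurisdiction and latency act as hard filters, while cost and carbon are traded off by weight. Everything here (the `Region` fields, region names, prices, grid intensities, and the linear blend) is a hypothetical illustration, not a provider API or Google's method.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Region:
    name: str
    jurisdiction: str
    latency_ms: float
    usd_per_hour: float
    grid_g_co2e_per_kwh: float


def place(regions: Iterable[Region], jurisdiction: Optional[str] = None,
          max_latency_ms: float = 200.0, carbon_weight: float = 0.5) -> Region:
    """Apply hard constraints (sovereignty, latency) first, then pick the best cost/carbon blend."""
    eligible = [
        r for r in regions
        if (jurisdiction is None or r.jurisdiction == jurisdiction) and r.latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise ValueError("no region satisfies the placement policy")
    max_cost = max(r.usd_per_hour for r in eligible)
    max_carbon = max(r.grid_g_co2e_per_kwh for r in eligible)

    def score(r: Region) -> float:
        # Normalize both factors to (0, 1] within the eligible set, then blend by weight.
        return (1 - carbon_weight) * r.usd_per_hour / max_cost + carbon_weight * r.grid_g_co2e_per_kwh / max_carbon

    return min(eligible, key=score)


REGIONS = [  # made-up regions and numbers, for illustration only
    Region("eu-1", "EU", 40, 3.2, 30),
    Region("eu-2", "EU", 25, 3.0, 350),
    Region("us-1", "US", 90, 2.5, 400),
]
print(place(REGIONS, jurisdiction="EU").name)                     # eu-1: low-carbon grid wins the blend
print(place(REGIONS, jurisdiction="EU", carbon_weight=0.0).name)  # eu-2: cheapest EU option
print(place(REGIONS, max_latency_ms=30).name)                     # eu-2: only region under 30 ms
```

Keeping sovereignty as a filter rather than a weighted factor means no price or carbon advantage can ever route regulated work out of its jurisdiction.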

Business impact

- Cost control becomes structural: unit economics improve when routing, caching, and placement are engineered—not left to ad-hoc team choices.

- Compliance and deal velocity improve: sovereignty-aligned architecture reduces “no” decisions from regulators, auditors, and risk teams. (gartner.com)

- Performance and resilience increase: edge/hybrid placement reduces latency and dependency fragility for critical workflows.

- Strategic leverage increases: you can avoid lock-in when your fabric supports multi-provider, portable deployments and clear control boundaries.

CXO CTAs

- Define what must be sovereign vs shared: data, models, keys, logs, and telemetry—by jurisdiction and risk class; bake into architecture standards. (gartner.com)

- Build a routing control plane: policy-based routing across models, regions, and runtimes (latency, cost, carbon, sensitivity) as a first-class platform capability. (cloud.google.com)

- Standardize isolation primitives: confidential computing where needed, strong tenant boundaries, and explicit key ownership—treat exceptions as high-risk. (learn.microsoft.com)

- Engineer real-time data movement deliberately: event streaming + governed access beats bulk replication; minimize “AI data sprawl.”

- Measure AI unit economics: cost per outcome unit (claim resolved, ticket deflected, order rerouted) becomes the governing metric—not GPU hours.
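As a worked example with made-up figures, the sketch below computes cost per outcome unit. Which cost lines to include (here inference, tool/API calls, and human review) is an assumption each organization would need to define; the point it illustrates is that compute spend can rise while the governing metric improves.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyAiSpend:
    inference_usd: float
    tool_and_api_usd: float
    human_review_usd: float
    outcomes: int  # outcome units delivered, e.g. claims resolved

    def cost_per_outcome(self) -> float:
        if self.outcomes == 0:
            raise ValueError("no outcomes recorded")
        total = self.inference_usd + self.tool_and_api_usd + self.human_review_usd
        return total / self.outcomes


january = MonthlyAiSpend(inference_usd=7_000, tool_and_api_usd=2_000, human_review_usd=3_000, outcomes=4_000)
february = MonthlyAiSpend(inference_usd=10_000, tool_and_api_usd=2_500, human_review_usd=2_500, outcomes=6_000)
print(january.cost_per_outcome(), february.cost_per_outcome())  # 3.0 2.5
```

Inference spend rises by roughly 43% between the two months, yet cost per claim resolved falls from $3.00 to $2.50.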

Trend #22: Revenue Intelligence Layer

From 2026 through 2028, growth leaders stop treating revenue as a CRM problem and start treating it as a product intelligence problem. The revenue engine becomes an always-on layer that combines product telemetry, customer signals, and commercial workflows to drive expansion, retention, and pricing decisions in near real time. This maps to the broader “revenue architecture” direction: data, process, and automation converge to surface the next best action, not just report pipeline. (mckinsey.com)


The shift is from “RevOps reporting” to “in-product revenue execution.”

1) Product telemetry becomes the source of truth for value

As PLG and usage-based motions mature, the most predictive signals live in the product: time-to-value, journey completion, feature friction, renewal risk indicators, and expansion triggers. Amplitude’s PLG guidance reflects the increasing emphasis on behavioral signals for growth. (amplitude.com)

2) AI turns signals into actions inside the workflow

The point isn’t better dashboards; it’s action automation: risk detection → outreach → offer → negotiation support → renewal orchestration. Salesforce’s Einstein positioning reflects the market direction toward embedding AI into revenue workflows to move from insight to action. (salesforce.com)

3) Variable AI COGS forces outcome-aware monetization

As costs become variable (tokens, orchestration, tool calls), pricing and packaging must align to measurable outcomes and usage patterns—otherwise margin surprises appear post-scale. This aligns with the broader shift toward usage and value-based pricing models in modern SaaS. (stripe.com)

Business impact

- Higher net revenue retention: earlier churn detection and proactive save motions based on real usage signals. (amplitude.com)

- Faster expansion: in-product “next best action” surfaces drive upsell at the moment value is proven, not weeks later via quarterly reviews.

- Better pricing discipline: outcome/usage alignment reduces discounting and improves margin predictability as COGS becomes variable. (stripe.com)

- Reduced RevOps drag: fewer manual pipeline hygiene cycles; more automated orchestration and measurable playbooks.

CXO CTAs

- Unify product + revenue signals: create one governed layer combining product telemetry, support signals, billing, and CRM—stop operating with disconnected “truths.”

- Define expansion and churn SLOs: measurable targets for renewal risk detection time, save-rate, and expansion conversion—managed like operational performance.

- Embed revenue actions into the product: surface value proof, recommended next steps, and renewal workflows in-product where the user is already acting.

- Align pricing to outcomes and COGS: define outcome units and cost envelopes early; adjust packaging before scale exposes margin risk. (stripe.com)

- Automate the playbooks: codify “if signal X then action Y” with human oversight for high-stakes accounts—turn RevOps into a supervised automation system.
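“If signal X then action Y” with oversight can be expressed as data rather than tribal knowledge. In this minimal sketch the account fields, thresholds, and action names are hypothetical; the design choice worth noting is that the oversight mode is decided by account stakes, separately from which rules fire.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Account:
    name: str
    arr_usd: float
    weekly_active_drop: float  # fractional decline in weekly active users vs. prior period
    seat_utilization: float    # seats in use / seats purchased


@dataclass(frozen=True)
class Rule:
    signal: str
    condition: Callable[[Account], bool]
    action: str


RULES = [  # hypothetical "if signal X then action Y" playbook
    Rule("usage decline", lambda a: a.weekly_active_drop >= 0.30, "open save motion"),
    Rule("seat saturation", lambda a: a.seat_utilization >= 0.95, "surface expansion offer in-product"),
]
HIGH_STAKES_ARR_USD = 250_000  # illustrative threshold for mandatory human oversight


def run_playbooks(account: Account) -> List[Tuple[str, str, str]]:
    """Return (signal, action, mode) for each rule that fires; high-stakes accounts go to a human."""
    mode = "human approval" if account.arr_usd >= HIGH_STAKES_ARR_USD else "auto-execute"
    return [(r.signal, r.action, mode) for r in RULES if r.condition(account)]


print(run_playbooks(Account("Acme", 400_000, 0.35, 0.50)))
# [('usage decline', 'open save motion', 'human approval')]
print(run_playbooks(Account("Beta", 20_000, 0.00, 0.97)))
# [('seat saturation', 'surface expansion offer in-product', 'auto-execute')]
```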

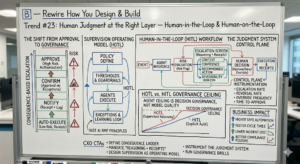

Trend #23: Human Judgment at the Right Layer — Human-in-the-Loop & Human-on-the-Loop

From 2026 through 2028, the adoption ceiling for agents won’t be model quality—it will be decision governance. Enterprises will separate work into:

- HITL for consequential decisions needing explicit human authorization, and

- HOTL for supervised autonomy where humans govern policies, thresholds, and exception handling.

NIST’s AI Risk Management Framework reinforces the lifecycle governance premise: trust requires defined accountability, monitoring, and controls—not “set and forget.” (nvlpubs.nist.gov)


The shift is from “humans approve everything” to “humans govern the system that approves.”

1) Consequence-based escalation becomes the core UX pattern

The next enterprise UX standard is confidence- and consequence-graded confirmation: low-risk actions auto-execute with receipts; high-risk actions escalate with full context, confidence, and named authority.

2) Auditability becomes a product feature, not a log file

The decision chain must be user-navigable: what the agent saw, what it inferred, what it did, why it did it, and how to reverse it—built into the experience.

3) Supervision replaces micromanagement

HOTL operating models win because they scale: humans define policies, thresholds, and stop conditions; agents execute within guardrails; exceptions become the learning loop.

Business impact

- Higher safe automation: autonomy expands without unacceptable risk because escalation is designed, not improvised. (nvlpubs.nist.gov)

- Faster cycle times: routine work flows through; humans focus on exceptions and accountability.

- Lower incident cost: clearer reversibility and traceability reduce blast radius when something goes wrong.

- Better compliance posture: defensible controls and accountability structures accelerate approvals and reduce regulatory friction.

CXO CTAs

- Define a “consequence ladder”: classify actions by risk and required human involvement (auto, notify, confirm, approve).

- Mandate “reasoning + receipts” on escalations: every approval screen must carry context, confidence, recommended action, and reversibility horizon.

- Design supervision as an operating model: assign owners for policy, thresholds, escalation routes, and incident response for agents.

- Instrument the judgment system: track escalation rate, reversal rate, override frequency, and time-to-approve—optimize the control plane, not just the agent.

- Run governance drills: simulate ambiguous or adversarial scenarios mid-process and measure time to restore safe operation.
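The four rungs of the consequence ladder (auto, notify, confirm, approve) can be encoded as a small policy function. This is a sketch under stated assumptions: impact is reduced to a dollar figure, reversibility to a boolean, and the thresholds are placeholders a governance owner would set; a real policy would likely weigh more factors.

```python
from enum import Enum


class Involvement(Enum):
    AUTO = "auto"        # execute and issue a receipt
    NOTIFY = "notify"    # execute, then notify the accountable owner
    CONFIRM = "confirm"  # the user confirms before execution
    APPROVE = "approve"  # a named authority must approve


def required_involvement(impact_usd: float, reversible: bool, confidence: float) -> Involvement:
    """Place an action on the consequence ladder; all thresholds are illustrative placeholders."""
    if impact_usd >= 100_000 or (not reversible and impact_usd >= 10_000):
        return Involvement.APPROVE
    if not reversible or confidence < 0.7:
        return Involvement.CONFIRM
    if impact_usd >= 1_000:
        return Involvement.NOTIFY
    return Involvement.AUTO


for args in [(50, True, 0.95), (5_000, True, 0.90), (200, False, 0.99), (50, True, 0.50), (50_000, False, 0.99)]:
    print(args, required_involvement(*args).value)
# auto, notify, confirm, confirm, approve
```

Because the ladder is a single function, escalation rate and override frequency can be measured per rung and the thresholds tuned without touching the agent itself.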

Trend #24: The New Enterprise UX Paradigm — Process-Centric, Insight-Driven & Self-Explaining

From 2026 through 2028, enterprise UX stops being “module navigation” and becomes process execution. Dashboards and reports give way to insight + action surfaces: what matters now, why it matters, and the next best action—embedded in the workflow. This aligns with NN/g’s guidance that AI changes UX by enabling more contextual, adaptive experiences, but only succeeds when it reduces user burden rather than adding novelty. (nngroup.com)


The shift is from “help users find features” to “help users complete outcomes with minimal cognitive load.”

1) Navigation collapses into intent + process state

Users shouldn’t learn your product’s menu. The product should understand their role, the process stage, and what’s reversible—and generate the right actions accordingly.

2) Self-explaining systems replace training and manuals

Guidance becomes real-time, role-aware, and moment-aware. Instead of static help content, the product explains this decision, now—and shows evidence and alternatives.

3) Authentication and risk become part of conversion design

High-trust actions are engineered as frictionless, risk-calibrated journeys (step-up auth only when necessary). Passkeys and modern auth patterns signal the direction: better security can mean better UX when designed correctly. (fidoalliance.org)
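Risk-calibrated step-up can be reduced to a comparison between session risk and action sensitivity. This is a hypothetical policy sketch, not WebAuthn or FIDO API code: the `SessionContext` signals, weights, action catalog, and threshold are all illustrative assumptions. The design point is that a fresh passkey session can perform a sensitive action without extra friction, while the same action from a stale or anomalous session triggers a step-up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionContext:
    used_passkey: bool         # phishing-resistant authentication at sign-in
    minutes_since_auth: float
    new_device: bool
    unusual_location: bool


ACTION_SENSITIVITY = {"view_invoice": 0.1, "change_payout_account": 0.9}  # hypothetical catalog
STEP_UP_THRESHOLD = 1.0


def session_risk(ctx: SessionContext) -> float:
    """Additive risk score capped at 1.0; the weights are placeholders."""
    risk = 0.0
    if not ctx.used_passkey:
        risk += 0.3
    if ctx.minutes_since_auth > 30:
        risk += 0.2
    if ctx.new_device:
        risk += 0.3
    if ctx.unusual_location:
        risk += 0.2
    return min(risk, 1.0)


def needs_step_up(action: str, ctx: SessionContext) -> bool:
    """Ask for re-authentication only when risk plus sensitivity crosses the threshold."""
    return session_risk(ctx) + ACTION_SENSITIVITY[action] >= STEP_UP_THRESHOLD


fresh = SessionContext(used_passkey=True, minutes_since_auth=5, new_device=False, unusual_location=False)
stale = SessionContext(used_passkey=True, minutes_since_auth=45, new_device=False, unusual_location=False)
print(needs_step_up("change_payout_account", fresh))  # False
print(needs_step_up("change_payout_account", stale))  # True
print(needs_step_up("view_invoice", stale))           # False
```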

Business impact

- Higher journey completion: fewer stalled processes, fewer errors, less rework because the product reduces ambiguity and step count. (nngroup.com)

- Lower implementation and training cost: self-explaining design reduces dependence on manuals, training courses, and heavy change management.

- Faster adoption of AI execution: users trust agents more when the system explains what changed, why, and how to undo it.

- Improved security outcomes: risk-calibrated UX reduces phishing and credential friction while maintaining assurance. (fidoalliance.org)

CXO CTAs

- Redesign around top journeys, not modules: define 10–20 critical processes and engineer end-to-end completion with step-count budgets and clarity as KPIs.

- Standardize “Insight → Action” cards: every insight must include embedded action, evidence, confidence, and reversibility—not a link to another screen.

- Make the decision chain visible: show what the agent used, what it inferred, what it did, and who approved—inside the experience.

- Replace training with embedded guidance: invest in contextual help generated from governed process/ontology, not static documentation.

- Treat auth as experience architecture: adopt phishing-resistant methods and design step-ups as a conversion optimization problem, not a security tax. (fidoalliance.org)

Trend #25: Multimodal Intelligence as the Primary Product Interface — Unified Multimodal Canvas

From 2026 through 2028, voice, video, text, and documents converge into one working surface. The “interface” isn’t a page—it’s a canvas where meetings become structured inputs, screenshots become executable context, and spoken intent becomes actions and audit trails. The user stops switching modalities; the system does.


The shift is from “channels” to “one continuous interaction loop.”

1) Meetings become executable process inputs

Decisions made in calls are automatically captured as structured outcomes: tasks, approvals, exceptions, and follow-ups—linked to evidence, owners, and due dates.

2) Video/audio move from content to intelligence

Products start understanding what’s said and what’s shown (screens, whiteboards, site footage, equipment behavior) and converting it into operational context: risks, anomalies, missing steps, compliance gaps.

3) The canvas becomes the new control room

The “work surface” becomes a unified place to: review context, see reasoning, approve consequential actions, and replay the decision chain—without bouncing between tools.

Business impact

- Faster cycle time: less translation from discussion → action; fewer dropped handoffs.

- Lower operational friction: fewer “where is the latest doc / decision / status” loops.

- Higher trust: decisions can be tied to evidence (what was said/shown), improving auditability.

- New differentiation: the product that becomes the default multimodal canvas becomes the default place work happens.

CXO CTAs

- Pick 3 “meeting-to-execution” workflows: sales pipeline reviews, incident calls, operations standups—turn outcomes into structured system actions.

- Design evidence-first UX: every recommended action carries the supporting clip/snippet/screenshot reference plus confidence and reversibility.

- Standardize the multimodal audit chain: what was observed → what was decided → what was executed → who approved.

- Guard privacy and consent by design: explicit capture boundaries, retention controls, and role-based visibility for audio/video-derived context.

- Measure the real KPI: decision-to-action time, not meeting count.

Trend #26: Collaboration as Process Origin

From 2026 through 2028, collaboration stops being “where people talk” and becomes where processes start. Chats, meeting notes, and document edits turn into structured triggers: tasks are created, approvals routed, cases opened, policies enforced, and actions audited—automatically.


The shift is from “collaboration as communication” to “collaboration as orchestration.”

1) Decisions become structured artifacts automatically

Instead of manually translating discussion into tickets and tasks, systems extract decisions, owners, deadlines, and required next steps—then initiate the workflow.

2) The gap between deciding and acting collapses

When collaboration surfaces are connected to agentic execution, “we agreed” becomes “it’s in motion” within seconds—reducing lost work and stalled outcomes.

3) Governance moves into the flow of work

Policies (data handling, approvals, segregation of duties) are enforced at the point of collaboration-triggered action—not after the fact.

Business impact

- Higher throughput: fewer handoffs and fewer “status chase” loops.

- Lower coordination tax: less manual project admin and fewer missed commitments.

- Better accountability: decisions have named owners, timestamps, evidence, and audit trails.

- Reduced risk: fewer off-system approvals and less untracked execution.

CXO CTAs

- Make collaboration a governed entry point: define which collaboration signals can start which processes (and which cannot).

- Standardize “decision objects”: decision, owner, due date, risk rating, reversibility—captured consistently across tools.

- Instrument decision-to-action time: treat delay as operational waste and optimize it continuously.

- Embed approvals where decisions are made: route high-impact actions through consequence-based confirmation.

- Create an “execution receipt”: every collaboration-triggered action produces a traceable record of what changed and why.
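A decision object with the fields named above (decision, owner, due date, risk rating, reversibility) is straightforward to standardize. The sketch adds hypothetical `source` and timestamp fields so the same record can yield decision-to-action time and a simple execution receipt; the names and values are illustrative.

```python
from dataclasses import dataclass
from datetime import date, datetime
from typing import Optional


@dataclass
class DecisionObject:
    decision: str
    owner: str
    due: date
    risk_rating: str         # illustrative scale: "low" | "medium" | "high"
    reversible: bool
    source: str              # collaboration surface where the decision was made
    decided_at: datetime
    executed_at: Optional[datetime] = None

    def decision_to_action_minutes(self) -> Optional[float]:
        """The waste metric: time from 'we agreed' to 'it's in motion'."""
        if self.executed_at is None:
            return None
        return (self.executed_at - self.decided_at).total_seconds() / 60

    def receipt(self) -> str:
        """One-line execution receipt: what was decided, by whom, where, and its status."""
        status = "executed" if self.executed_at else "pending"
        return f"[{status}] {self.decision} | owner={self.owner} | risk={self.risk_rating} | source={self.source}"


d = DecisionObject("Extend pilot to region B", "j.doe", date(2026, 3, 31), "medium", True,
                   "weekly ops call", decided_at=datetime(2026, 3, 2, 10, 0))
d.executed_at = datetime(2026, 3, 2, 10, 45)
print(d.decision_to_action_minutes())  # 45.0
print(d.receipt())
```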

Trend #27: Agentic Search & Conversational-First Architecture

From 2026 through 2028, search stops being retrieval and becomes execution. The primary UX pattern becomes: ask → clarify → act → prove. Instead of returning a list, search returns an insight plus the first 3–5 executable steps, with governance and reversibility.

This is also a distribution shift: products increasingly win by becoming the authoritative domain source that AI systems cite, consume, and transact with. (nngroup.com)


The shift is from “find information” to “complete work through dialogue.”

1) Conversational becomes the default entry point

Users start with intent, not navigation. The product’s job is to clarify the minimum, then execute safely.

2) Search results become action surfaces

The new “result” is: recommendation + evidence + embedded action + next-best step—not a document list.

3) Trust is engineered into the flow

To allow execution, the system must show: sources/grounding, confidence, policy checks, approvals when needed, and a reversibility horizon—otherwise conversational execution becomes operational risk. (nvlpubs.nist.gov)
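Those trust requirements can double as an execution gate: the embedded action runs only when every check passes. In this hypothetical sketch the field names, the 0.8 confidence floor, and the rule that irreversible actions always need approval are assumptions for illustration.

```python
from dataclasses import dataclass, field
from datetime import timedelta
from typing import List, Optional


@dataclass
class ActionableAnswer:
    recommendation: str
    sources: List[str] = field(default_factory=list)  # grounding references
    confidence: float = 0.0
    policy_checks_passed: bool = False
    needs_approval: bool = False
    approved_by: Optional[str] = None
    undo_window: Optional[timedelta] = None  # reversibility horizon; None means irreversible


def blockers(answer: ActionableAnswer, min_confidence: float = 0.8) -> List[str]:
    """Reasons the embedded action may not execute yet; an empty list means it may run."""
    reasons = []
    if not answer.sources:
        reasons.append("no grounding sources")
    if answer.confidence < min_confidence:
        reasons.append("confidence below threshold")
    if not answer.policy_checks_passed:
        reasons.append("policy checks not passed")
    irreversible = answer.undo_window is None
    if (answer.needs_approval or irreversible) and answer.approved_by is None:
        reasons.append("awaiting approval")
    return reasons


ok = ActionableAnswer("Reissue invoice with corrected VAT", sources=["billing-policy#vat"],
                      confidence=0.92, policy_checks_passed=True, undo_window=timedelta(hours=24))
risky = ActionableAnswer("Close customer account", confidence=0.95, policy_checks_passed=True)
print(blockers(ok))     # []
print(blockers(risky))  # ['no grounding sources', 'awaiting approval']
```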

Business impact

- Higher productivity: fewer context switches between search, docs, apps, and tickets.

- Faster cycle time: work starts and completes in the same conversational surface.

- Lower support load: fewer “how do I” queries; more automated completion with receipts.

- New moat: “domain truth + actionability” becomes defensible—agents prefer sources they can trust and invoke. (nngroup.com)

CXO CTAs

- Redesign search as a workflow engine: require every high-value query class to return an action path, not just content.

- Publish machine-consumable domain truth: structured, citeable knowledge + callable actions so AI systems can rely on you as a source of record.

- Instrument outcome metrics for search: task completion rate, time-to-complete, escalation rate, reversals, and “answer-with-action” coverage.

- Build governance into conversational execution: approvals, policy checks, and audit trails are non-negotiable in agentic search. (nvlpubs.nist.gov)

- Treat “discoverability” as agent-first: optimize for AI consumption and transactions, not only human SEO.

Trend #28: Intelligent Lifecycle — Onboarding, Proactive Support & Renewal Orchestration

From 2026 through 2028, onboarding, support, and renewals collapse into one continuous, agent-driven lifecycle system. Instead of reactive tickets and quarterly business reviews (QBRs), products run an always-on loop: onboard dynamically → detect friction → fix proactively → prove value → orchestrate renewal.


The shift is from “post-sale functions” to “post-sale automation as product architecture.”

1) Onboarding becomes persona-based and adaptive

The product learns role, intent, maturity, and context—then generates the shortest path to first value. Static walkthroughs are replaced by guided execution.

2) Support becomes “fix-before-ticket”

The system detects anomalies, failed journeys, and confusion signals, then resolves issues autonomously or escalates with full diagnostic context—before users complain.

3) Renewals become continuous value proof

Instead of scrambling at renewal time, the product continuously records outcomes achieved and surfaces value evidence to users and decision-makers.

Business impact

- Higher activation and retention: faster time-to-value and fewer early drop-offs.

- Lower cost-to-serve: fewer tickets and shorter resolution cycles through proactive diagnostics.

- Higher renewal rate: value proof becomes concrete and continuous, reducing churn risk.

- Better product learning: friction signals feed directly into roadmap and quality prioritization.

CXO CTAs

- Define lifecycle SLOs: time-to-first-value, journey completion, proactive resolution rate, escalation rate, renewal health score.

- Instrument “struggle signals”: retries, backtracks, abandon points, repeated searches, support intent—treat as defects.

- Build proactive support playbooks: detect → diagnose → resolve → verify → learn, with human-on-the-loop controls for sensitive actions.

- Create a value ledger: continuously capture outcomes delivered per account and surface it as in-product evidence.

- Unify lifecycle ownership: product + success + support + RevOps run one orchestration model, not separate tooling silos.
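Treating struggle signals as defects starts with detecting them per session. This minimal sketch assumes a product emits named events (`retry`, `back`, `search`); the names and thresholds are hypothetical, and a production version would likely weight signals and aggregate across sessions before opening a defect.

```python
from collections import Counter
from typing import List

# Hypothetical event names and per-session thresholds.
STRUGGLE_THRESHOLDS = {"retry": 3, "back": 2, "search": 3}


def struggle_signals(events: List[str], completed: bool) -> List[str]:
    """Flag struggle in one session from its ordered event names."""
    counts = Counter(events)
    flags = [f"{name} x{counts[name]}" for name, limit in STRUGGLE_THRESHOLDS.items() if counts[name] >= limit]
    if not completed and events:
        flags.append(f"abandoned after {events[-1]}")  # the abandon point
    return flags


session = ["start", "search", "search", "search", "open_form", "submit", "retry", "retry", "retry", "back"]
print(struggle_signals(session, completed=False))
# ['retry x3', 'search x3', 'abandoned after back']
```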

Trend #29: Hyper-Personalization as Policy & Empathetic Engagement

From 2026 through 2028, personalization stops being a campaign tactic and becomes a governed product capability. The enterprise shift is toward personalization that is consent-aware, auditable, and real-time—and where tone and interaction adapt to the user’s context, sentiment, and risk level without crossing trust boundaries.


The shift is from “personalization as growth” to “personalization as a policy-controlled system.”

1) Personalization becomes permissioned memory

Personalization depends on memory (preferences, history, role context). That memory must be governed: what’s stored, why, retention, deletion, and who can access it.

2) Empathy becomes an escalation and risk tool

Tone adaptation isn’t “nice UX”—it reduces friction in support, collections, HR, and high-stress workflows. But it must be bounded to avoid manipulation or inconsistency.

3) Policy becomes the differentiator

The most trusted products will make personalization rules explicit: what is customized, what is never customized, what triggers escalation, and what requires confirmation.

Business impact

- Higher completion and satisfaction: less user friction because workflows adapt to role and moment.

- Lower support cost: better intent detection and guided resolution reduces repeat contacts.

- Reduced compliance risk: auditable consent and policy controls prevent “creepy” or unsafe personalization.

- Better retention: personalization compounds when users trust it and see consistent value.

CXO CTAs

- Define “personalization boundaries”: what’s allowed, what’s prohibited, and what requires explicit consent.

- Make personalization auditable: log why something was personalized, what data was used, and how the user can correct or opt out.

- Use sentiment as a routing signal: escalate to humans when stakes are high or frustration is rising.

- Separate “model capability” from “policy permission”: just because the system can infer something doesn’t mean it’s allowed to use it.

- Measure trust, not just conversion: track opt-outs, complaints, reversals, and escalation rates as leading indicators.
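Separating model capability from policy permission can be as simple as a default-deny filter between the profile and the personalization step, with an audit line per attribute. The attribute names, the three policy bases (`always`, `consent`, `never`), and the audit format below are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Hypothetical policy: the basis on which each attribute may be used.
POLICY: Dict[str, str] = {
    "preferred_language": "always",
    "role": "always",
    "purchase_history": "consent",
    "inferred_health_status": "never",  # the model may be able to infer it; policy forbids using it
}


@dataclass
class PersonalizationDecision:
    used: List[str] = field(default_factory=list)
    blocked: List[str] = field(default_factory=list)
    audit: List[str] = field(default_factory=list)  # why each attribute was or was not used


def apply_policy(available: Dict[str, object], consents: Set[str]) -> PersonalizationDecision:
    """Filter what the system could use down to what policy permits, logging each call."""
    result = PersonalizationDecision()
    for attr in available:
        rule = POLICY.get(attr, "never")  # default-deny anything the policy does not name
        allowed = rule == "always" or (rule == "consent" and attr in consents)
        if allowed:
            result.used.append(attr)
        else:
            result.blocked.append(attr)
        result.audit.append(f"{attr}: rule={rule}, consented={attr in consents}, used={allowed}")
    return result


profile = {"preferred_language": "de", "purchase_history": ["plan-upgrade"], "inferred_health_status": "unknown"}
print(apply_policy(profile, consents=set()).used)                 # ['preferred_language']
print(apply_policy(profile, consents={"purchase_history"}).used)  # ['preferred_language', 'purchase_history']
```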

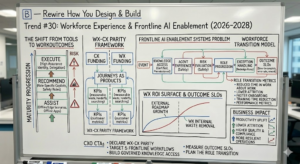

Trend #30: Workforce Experience & Frontline AI Enablement

From 2026 through 2028, enterprises close the biggest productivity gap: internal experience (WX) is finally treated as a product on par with customer experience (CX). Frontline and knowledge workers get role-specific copilots/agents embedded into daily work, with clear maturity progression: assist → recommend → execute.


The shift is from “tools for employees” to “employee outcomes delivered by agents.”

1) WX becomes the fastest ROI surface

Internal friction (handoffs, searching, approvals, rework) is measurable waste. Agents remove it faster than most external feature roadmaps generate growth.

2) Frontline enablement becomes a systems problem

Frontline value requires reliability under constraints: offline/edge scenarios, safety rules, simplified interaction, and high assurance identity.

3) The workforce shifts from doing work to supervising work

As execution becomes delegated, roles evolve toward exception handling, stewardship, and accountability—requiring new training and operating models.

Business impact

- Productivity uplift: reduced time in “work about work” (searching, chasing, duplicating).

- Lower attrition and faster onboarding: better tools reduce frustration and speed proficiency.

- Higher quality and compliance: guided execution reduces errors in high-volume frontline workflows.

- More resilient operations: standardized, agent-assisted work reduces dependence on a few experts.

CXO CTAs

- Declare WX-CX parity: fund internal journeys like products with owners, roadmaps, and KPIs.

- Target 5 frontline workflows first: pick high-frequency, high-error tasks and implement assist → recommend → execute progression.

- Build governed knowledge access: frontline agents must use certified, permissioned knowledge with clear escalation paths.

- Measure outcome SLOs: time-to-complete, error rate, rework, escalations, and training time reduction.

- Plan the role transition: redesign roles, training, and performance metrics around supervision and exception handling.

Trend #31: Autonomous Product Support & Self-Healing Systems

From 2026 through 2028, support shifts from tickets to autonomous resolution. Products detect, diagnose, and remediate issues before users notice—tier-0 becomes automated, and escalations carry full context. The product becomes its own first responder.


The shift is from “reactive support” to “engineered reliability as experience.”

1) Experience SLOs become contractual

Beyond uptime, leaders track journey completion, time-to-resolution, escalation rate, and recurrence. Reliability is measured in user outcomes, not infrastructure health.

2) Diagnosis becomes automated, not tribal

Systems correlate telemetry, logs, traces, and recent changes to identify likely root cause and propose fixes, rollbacks, or mitigations.

3) Self-healing requires guardrails

Autonomous remediation only scales with policy: what can auto-fix, what needs approval, and what must escalate—with clear reversibility.

Business impact

- Lower cost-to-serve: fewer tickets and shorter MTTR.

- Higher retention: fewer “silent churn” moments caused by unresolved friction.

- Better reliability at scale: as change volume increases, autonomous operations prevent quality collapse.

- Stronger trust: users see fast resolution with transparent receipts.

CXO CTAs

- Define “self-heal eligible” incidents: start with safe remediations (restart, rollback, config revert, cache clear, reroute).

- Instrument experience SLOs: journey completion, time-to-resolution, escalation rate, and repeat-incident rate.

- Require escalation receipts: every handoff includes what happened, why, what changed, and what to do next.

- Build change-to-incident correlation: connect deployments to customer impact automatically.

- Run autonomous ops drills: test self-heal behavior under real failure scenarios with kill-switches and rollback paths.
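Change-to-incident correlation can start as a simple time-window join between deployments and incidents on the same service. The sketch below assumes each record carries a service name and a timestamp. The two-hour window and the type names are hypothetical, and production systems would also need to follow service dependencies.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Deployment:
    deploy_id: str
    service: str
    at: datetime


@dataclass(frozen=True)
class Incident:
    incident_id: str
    service: str
    at: datetime


def suspect_changes(incident: Incident, deployments: list[Deployment],
                    window: timedelta = timedelta(hours=2)) -> list[Deployment]:
    """Deployments to the same service within `window` before the incident, newest first."""
    candidates = [
        d for d in deployments
        if d.service == incident.service and incident.at - window <= d.at <= incident.at
    ]
    # The most recent change is usually the first rollback candidate.
    return sorted(candidates, key=lambda d: d.at, reverse=True)
```

Ranking newest-first gives a self-healing policy an ordered list of rollback candidates to attach to the escalation receipt.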

Trend #32: Accessibility Redefined & Sustainability as Experience Design

From 2026 through 2028, accessibility and sustainability stop being compliance side-quests and become experience fundamentals. Two forces push this into the release pipeline: next-gen accessibility standards are evolving (WCAG 3.0 is progressing in W3C drafts), and regulation is already in effect (the European Accessibility Act sets EU-wide accessibility requirements for covered digital products/services). (W3C)

The shift is from “accessible + green as checklists” to “accessible + green as engineered product qualities.”

1) Accessibility expands beyond visual impairment to cognitive + conversational + multimodal

AI makes it possible to generate real-time adaptations (simplified language, guided flows, multimodal alternatives), but this only works when those adaptations are built into design systems and QA rather than bolted on late. WCAG 3.0’s direction signals broader scope and new conformance thinking. (W3C)

2) Regulation turns accessibility into a release gate

The EAA creates a concrete market-access constraint: if you serve EU markets in covered categories, accessibility is no longer optional. Treat it like security—noncompliance becomes a product risk. (European Commission)

3) Sustainability becomes a measurable UX attribute, not CSR

As AI and rich experiences increase compute, “green UX” is about reducing waste: fewer steps, less data transfer, efficient defaults. The Green Software Foundation’s Software Carbon Intensity (SCI) specification formalizes how to measure software carbon intensity, so teams can optimize intentionally. (sci.greensoftware.foundation)
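For reference, the SCI specification scores software as a rate per functional unit, combining operational and embodied emissions:

- E is the energy consumed (kWh).
- I is the location-based marginal carbon intensity (gCO₂e/kWh).
- M is the embodied hardware emissions allocated to the software (gCO₂e).
- R is the functional unit, such as one journey or transaction.

The worked numbers in the second line are hypothetical and only illustrate the arithmetic.

```latex
\mathrm{SCI} = \frac{(E \times I) + M}{R}

\mathrm{SCI} = \frac{(0.5\,\text{kWh} \times 400\,\text{gCO}_2\text{e/kWh}) + 50\,\text{gCO}_2\text{e}}{10{,}000\ \text{journeys}}
             = \frac{250\,\text{gCO}_2\text{e}}{10{,}000\ \text{journeys}}
             = 0.025\,\text{gCO}_2\text{e per journey}
```

Because R is a product-level unit, the score can be tracked per journey alongside latency and completion rate.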

Business impact

- Lower legal and market-access risk via built-in compliance readiness. (European Commission)

- Higher completion and satisfaction by reducing cognitive load and offering adaptive interaction paths. (W3C)

- Lower run cost when sustainability practices reduce unnecessary compute and data movement—especially under AI workloads. (sci.greensoftware.foundation)

- Brand trust lift by treating inclusion and sustainability as product quality, not messaging.

CXO CTAs

- Make accessibility a release gate: embed tests (manual + automated), acceptance criteria, and owner accountability for critical journeys. (European Commission)

- Design for cognitive accessibility: step-count budgets, plain-language defaults, guided execution, and multimodal alternatives as standard patterns. (W3C)

- Adopt SCI-style measurement for priority services: track carbon intensity per journey/transaction and fund reductions like performance work. (sci.greensoftware.foundation)

- Treat sustainability as experience: reduce unnecessary compute, compress flows, and make “efficient by default” a design principle. (greensoftware.foundation)

- Report it like reliability: accessibility defects and carbon hotspots become part of the product quality dashboard.
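A release gate for critical journeys can combine accessibility test results, ownership, and a step-count budget in one deterministic check. This is a minimal sketch. The field names and per-journey budgets are hypothetical, and the defect counts are assumed to come from existing manual and automated accessibility testing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class JourneyReport:
    journey: str
    step_count: int              # steps a user needs to complete the journey
    step_budget: int             # hypothetical per-journey step-count budget
    critical_a11y_defects: int   # open critical defects from manual + automated testing
    owner: Optional[str] = None  # accountable owner for the journey


def release_gate(reports: list[JourneyReport]) -> list[str]:
    """Return blocking reasons; an empty list means the release may proceed."""
    reasons = []
    for r in reports:
        if r.owner is None:
            reasons.append(f"{r.journey}: no accountable owner")
        if r.critical_a11y_defects > 0:
            reasons.append(f"{r.journey}: {r.critical_a11y_defects} critical accessibility defect(s)")
        if r.step_count > r.step_budget:
            reasons.append(f"{r.journey}: {r.step_count} steps exceeds budget of {r.step_budget}")
    return reasons
```

Returning reasons instead of a bare boolean lets the same output feed the product quality dashboard.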

Trend #33: Video & Audio as Primary Product Intelligence Surfaces

From 2026 through 2028, video and audio stop being “content types” and become first-class intelligence inputs. Products will routinely understand calls, screen recordings, site footage, and voice notes—turning them into structured signals, actions, and audit trails. This shift is accelerated by rapid progress in multimodal AI and long-context processing that make continuous interpretation practical, not experimental. (ai.google.dev)

The shift is from “record and store media” to “reason over media and execute.”

1) Calls become operational systems

Meetings and support calls become structured objects: decisions, risks, commitments, and next steps—automatically extracted and routed into workflows.

2) Visual evidence becomes machine-readable

Screenshots and video become inputs for diagnosis, compliance checks, safety validation, and workflow verification—reducing reliance on manual interpretation.

3) Media creates a new trust surface

When actions are triggered from what was said or shown, provenance, consent, retention, and auditability become mandatory—media intelligence must be governed like financial data.

Business impact

- Faster decision-to-action: less manual translation from conversations to tickets, approvals, and tasks.

- Lower support cost: richer context reduces back-and-forth; escalations carry “evidence packets.”

- Higher compliance and quality: audio/video-derived checks catch issues humans miss under time pressure.

- New differentiation: products that convert media into outcomes become the default operational layer.

CXO CTAs

- Pick 2–3 media-first workflows: incident calls, contact center QA, sales handoffs, site inspections—where evidence-to-action speed matters most.

- Define governance up front: consent, capture boundaries, retention, redaction, role-based access, and audit logs for every media-derived action.

- Standardize “evidence packets”: every recommendation carries the exact clip/snippet, timestamp, confidence, and reversibility guidance.

- Measure media ROI: decision-to-action time, reduction in escalations, and rework avoided—treat as operational efficiency, not “AI feature value.”

- Plan for trust failures: run drills where media is incomplete, conflicting, or adversarial; ensure safe fallback to human judgment.
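An evidence packet is essentially a small, validated record attached to every media-derived recommendation. The sketch below assumes a hypothetical `media://` URI scheme and a hypothetical 0.8 confidence floor. Anything that fails validation or falls below the floor goes to human review, in line with the safe-fallback CTA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidencePacket:
    recommendation: str
    media_uri: str        # pointer to the source recording (hypothetical URI scheme)
    clip_start_s: float   # clip boundaries, in seconds from the start of the recording
    clip_end_s: float
    confidence: float     # extraction confidence in [0, 1]
    reversible: bool
    reversal_steps: str   # how to undo the resulting action, if it is reversible

    def problems(self) -> list[str]:
        """List reasons this packet is not fit to drive a workflow action."""
        found = []
        if not self.media_uri:
            found.append("missing source media")
        if not (0 <= self.clip_start_s < self.clip_end_s):
            found.append("invalid clip boundaries")
        if not (0.0 <= self.confidence <= 1.0):
            found.append("confidence out of range")
        if self.reversible and not self.reversal_steps:
            found.append("reversible action without reversal guidance")
        return found


def route(packet: EvidencePacket, min_confidence: float = 0.8) -> str:
    """Invalid or low-confidence evidence falls back to human judgment."""
    if packet.problems() or packet.confidence < min_confidence:
        return "human_review"
    return "workflow"
```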

Trend #34: Agentic Product Lifecycle Management

From 2026 through 2028, product management shifts from periodic planning to continuous, agent-supported lifecycle control. Agents don’t just generate ideas—they monitor real usage, detect friction, propose changes, simulate impact, and help ship and validate improvements as a closed loop.

The shift is from “roadmaps and releases” to “continuous lifecycle orchestration.”

1) Discovery becomes always-on

Instead of quarterly research cycles, systems continuously detect struggle signals (abandonment, retries, escalations, negative sentiment) and translate them into hypotheses.

2) Prioritization becomes outcome- and risk-driven

Backlogs evolve into dynamic portfolios governed by outcome lift, cost-to-serve reduction, and risk exposure—not opinion battles.

3) Validation becomes telemetry-native

Every change is shipped with measurement plans: expected outcome, guardrails, rollback triggers—validated in days, not quarters.
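Telemetry-native validation means the rollback triggers are written down before the change ships. This minimal sketch assumes a single primary outcome metric plus ceiling-style guardrails. The metric names and thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MeasurementPlan:
    metric: str                   # primary outcome metric, e.g. "completion_rate"
    baseline: float
    expected_lift: float          # minimum absolute lift that counts as success
    guardrails: dict[str, float]  # metric name -> maximum acceptable value
    min_samples: int


def evaluate(plan: MeasurementPlan, observed: dict[str, float], samples: int) -> str:
    """Return "rollback", "hold", or "promote" for a shipped change."""
    # Any guardrail breach triggers rollback, regardless of outcome lift.
    for name, ceiling in plan.guardrails.items():
        if name in observed and observed[name] > ceiling:
            return "rollback"
    # Missing data or too few samples: keep current exposure and wait.
    required = [plan.metric, *plan.guardrails]
    if samples < plan.min_samples or any(m not in observed for m in required):
        return "hold"
    if observed[plan.metric] - plan.baseline >= plan.expected_lift:
        return "promote"
    return "hold"
```

Guardrail breaches take precedence over positive lift, and missing data holds rather than promotes. This is the conservative ordering a closed loop needs.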

Business impact

- Higher value per deploy: fewer low-impact releases; more measurable improvements.

- Faster learning loops: shorter time from “problem observed” to “fix validated.”

- Lower product risk: continuous monitoring reduces silent failure and drift.

- Better alignment: product, engineering, success, and support operate on the same lifecycle signals.

CXO CTAs

- Mandate lifecycle telemetry: define the canonical set of outcome + friction metrics per priority journey.

- Replace static roadmaps with dynamic portfolios: govern priorities by measured lift and risk, not calendar commitments.

- Operationalize “safe change”: every release includes guardrails, rollback plans, and escalation paths.

- Create agent-assisted product ops: a dedicated function that runs the continuous lifecycle loop and ensures measurement integrity.

- Review “learning velocity” monthly: how fast you detect issues, ship fixes, and prove impact becomes a leadership KPI.

Trend #35: Agentic Customer Support Models

From 2026 through 2028, customer support shifts from “handling cases” to completing resolutions. Agents move beyond answering—into tool-using diagnosis, policy-aware action, and verification, while humans focus on exceptions, empathy-heavy moments, and consequential approvals. Gartner is already projecting that agentic AI will autonomously resolve the majority of common issues over time, pushing support leaders into an operating-model rewrite, not a tooling upgrade. (gartner.com)

The shift is from “answers” to “resolutions with receipts.”

1) Support becomes tool-using and action-capable (not text-only)

Agents increasingly diagnose by pulling telemetry, checking warranties/entitlements, reproducing issues, executing safe remediations, and confirming outcomes—then escalating with full context when needed. This “digital-first” support shift is visible in real enterprise moves like Intel’s Copilot Studio-based assistant that can open/update cases and escalate to humans. (tomshardware.com)

2) Tiering collapses into consequence-based routing

Instead of rigid L1/L2/L3 queues, routing becomes confidence + consequence: low-risk issues auto-resolve; higher-risk issues require confirmation/approval and human-on-the-loop supervision. This aligns with Gartner’s emphasis on blending human strengths with AI and the reshaping of frontline roles. (gartner.com)

3) Knowledge becomes executable playbooks, not static articles

Static FAQs become insufficient. Support knowledge turns into governed playbooks: symptoms → diagnosis → allowed actions → verification → rollback, enabling consistent autonomous resolution. Market platforms are explicitly positioning “AI agents” around resolving requests end-to-end, not just deflecting. (zendesk.com)
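The symptoms → diagnosis → allowed actions → verification → rollback chain maps naturally onto a playbook object. Consequence-based routing then decides whether the agent may act alone. This Python sketch uses hypothetical names, a two-level consequence scale, and a 0.85 confidence threshold purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Playbook:
    issue_class: str
    matches: Callable[[dict], bool]    # diagnosis: do the symptoms fit this issue class?
    act: Callable[[dict], None]        # the one allowed remediation
    verify: Callable[[dict], bool]     # confirm the customer-visible outcome
    rollback: Callable[[dict], None]   # undo the remediation if verification fails
    consequence: str                   # "low" or "high" (hypothetical two-level scale)


def route(confidence: float, consequence: str, threshold: float = 0.85) -> str:
    """Confidence + consequence routing in place of fixed L1/L2/L3 queues."""
    if confidence < threshold:
        return "human_agent"
    return "auto_resolve" if consequence == "low" else "confirm_with_approver"


def run(pb: Playbook, case: dict, confidence: float) -> str:
    if not pb.matches(case):
        return "not_applicable"
    decision = route(confidence, pb.consequence)
    if decision != "auto_resolve":
        return decision                # hand off with context instead of acting
    pb.act(case)
    if pb.verify(case):
        return "resolved"
    pb.rollback(case)
    return "rolled_back_and_escalated"
```

Verification is part of the playbook, not an afterthought. A fix that does not produce the expected outcome is undone before the case is escalated.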

Business impact

- Lower cost-to-serve: more tier-0/1 resolution without human time, fewer repeat contacts. (zendesk.com)

- Faster resolution: fewer handoffs; escalations arrive with “evidence packets” (what was tried, what worked, what failed).

- Higher trust: customers accept automation when actions are transparent, reversible, and auditable—especially for account changes and sensitive workflows.

- Better product quality: resolved-issue patterns feed directly into self-healing and lifecycle improvement loops.

CXO CTAs

- Start with “safe-to-fix” classes: access issues, known-error patterns, configuration resets—where automated actions are low-risk and reversible.

- Mandate escalation packets: every escalation must include diagnostic steps taken, telemetry used, confidence, recommended next action, and rollback guidance.

- Build an executable playbook library: codify allowed actions by issue class with policy gates and approvals for consequential changes.

- Measure support like a production system: time-to-resolution, autonomous resolution rate, re-contact rate, escalation rate, and reversal rate for automated fixes.

- Treat support as part of the product: the best support model is engineered into the product’s telemetry, self-healing, and governed action surfaces.
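Measuring support like a production system mostly means computing the CTA metrics from case records consistently. The sketch assumes a hypothetical `Case` record with a seven-day re-contact flag. Reversal rate is computed over autonomously resolved cases only, since that is where automated fixes occur.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    minutes_to_resolve: float
    resolved_autonomously: bool
    escalated: bool
    recontacted_within_7d: bool    # hypothetical re-contact window
    automated_fix_reversed: bool


def support_metrics(cases: list[Case]) -> dict[str, float]:
    """Production-style support metrics over a batch of closed cases."""
    n = len(cases)
    if n == 0:
        return {}
    autonomous = [c for c in cases if c.resolved_autonomously]
    return {
        "median_minutes_to_resolve": statistics.median(c.minutes_to_resolve for c in cases),
        "autonomous_resolution_rate": len(autonomous) / n,
        "escalation_rate": sum(c.escalated for c in cases) / n,
        "recontact_rate": sum(c.recontacted_within_7d for c in cases) / n,
        # Reversals only apply to automated fixes, so divide by autonomous cases.
        "reversal_rate": (sum(c.automated_fix_reversed for c in autonomous) / len(autonomous)
                          if autonomous else 0.0),
    }
```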

D — Recapture How You Compete & Price

Trend #36: Token-Era Economics & Outcome-Based Pricing

From 2026 through 2028, per-seat pricing steadily loses legitimacy for agentic products. Cost is no longer mostly fixed software OpEx—it becomes variable digital labor COGS (tokens, tool calls, orchestration, monitoring, and human supervision). This is why leading billing and SaaS economics thinking is converging on usage and value-based models, especially where marginal cost is real and measurable. (stripe.com)

The winners will price what they deliver: outcome units with telemetry-backed attribution and risk-calibrated contracts.

The shift is from “selling access” to “selling measurable work completed.”

1) AI introduces variable unit economics into software

When each action has a compute cost, pricing must reflect consumption and value—or margins will break at scale. Usage-based pricing principles are increasingly positioned as the right fit where variable costs track with usage. (stripe.com)

2) Outcome units replace seats as the commercial language

Customers don’t want “tokens.” They want claims resolved, tickets deflected, contracts reviewed, fraud prevented. Defining outcome units forces clarity on scope, quality, and exception handling—turning pricing into an operating model.

3) Finance and engineering converge (FinOps-for-AI becomes core)

Pricing can’t be set without controlling runtime cost drivers (context length, routing, retries, tool choice). Cloud FinOps principles—visibility, allocation, optimization—become embedded into product architecture decisions. (finops.org)

Business impact

- Better gross margin control: pricing aligns to variable COGS; routing/caching become profitability levers. (finops.org)

- Faster enterprise buying: outcomes map cleanly to business cases and procurement language.

- More durable differentiation: competitors can copy features; they struggle to copy outcome reliability and unit economics.

- New contract structures: base + outcome, shared-risk, and performance-linked pricing become normal for high-value agentic workflows.

CXO CTAs

- Define 3–5 outcome units per product line: measurable, auditable, and hard to game (include quality thresholds and exclusions).

- Instrument outcome attribution in-product: telemetry that ties actions → outcomes → value, with audit-ready records.

- Build a cost envelope model: expected cost per outcome by segment and scenario; design routing/caching to stay inside it. (stripe.com)

- Adopt FinOps-for-AI governance: allocate costs, set budgets, enforce caps, and optimize continuously—run it as a product discipline. (finops.org)

- Design shared-risk offers carefully: specify reversibility, dispute mechanisms, and supervision costs so outcome pricing doesn’t become hidden services creep.
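A cost envelope starts with an honest cost-per-outcome calculation: total runtime spend (including retries, failed attempts, and human review) divided by the outcome units actually delivered. The unit prices below are hypothetical placeholders, not any provider's rates, and the margin test is one simple way to express "stay inside the envelope."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunCost:
    input_tokens: int
    output_tokens: int
    tool_calls: int
    human_review_minutes: float


# Hypothetical unit prices; substitute your actual provider and labor rates.
PRICE_PER_1K_INPUT = 0.003
PRICE_PER_1K_OUTPUT = 0.015
PRICE_PER_TOOL_CALL = 0.001
PRICE_PER_REVIEW_MINUTE = 0.80


def run_cost(r: RunCost) -> float:
    return (r.input_tokens / 1000 * PRICE_PER_1K_INPUT
            + r.output_tokens / 1000 * PRICE_PER_1K_OUTPUT
            + r.tool_calls * PRICE_PER_TOOL_CALL
            + r.human_review_minutes * PRICE_PER_REVIEW_MINUTE)


def cost_per_outcome(runs: list[RunCost], outcomes_delivered: int) -> float:
    """All runs count toward cost, but only delivered outcome units count toward the denominator."""
    if outcomes_delivered <= 0:
        raise ValueError("no outcome units delivered")
    return sum(run_cost(r) for r in runs) / outcomes_delivered


def within_envelope(cost_per_unit: float, price_per_outcome: float, target_margin: float) -> bool:
    """True if gross margin per outcome unit meets the target (e.g. 0.5 for 50%)."""
    if price_per_outcome <= 0:
        raise ValueError("price must be positive")
    return (price_per_outcome - cost_per_unit) / price_per_outcome >= target_margin
```

Failed attempts and supervision minutes raise cost per outcome directly. That is why retries, routing, and review load behave as margin levers rather than engineering details.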

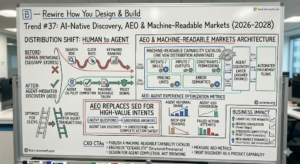

Trend #37: AI-Native Discovery, AEO & Machine-Readable Markets

From 2026 through 2028, distribution shifts from human browsing (SEO, app stores) to agent-mediated discovery (answer engines, copilots, automated procurement flows). Products increasingly win because they are machine-readable, citeable, and executable—not because they rank for keywords. Microsoft’s guidance on making content work for AI-powered experiences reflects this direction: content must be structured so AI systems can reliably interpret and use it. (learn.microsoft.com)

The shift is from “optimize for clicks” to “optimize for agent transactions.”

1) Answer engines become the new top-of-funnel

Users ask a model, not a search box—and accept the best grounded answer. If your product is not a trusted source the model can cite and verify, you become invisible in the new discovery layer. (nngroup.com)

2) AEO replaces SEO for high-value intents

Agent Experience Optimization (AEO) means: can an agent discover your capability, understand constraints, and complete an action safely? Standards like schema.org show the long arc: structured metadata improves machine interpretation; now it becomes a competitive moat. (schema.org)

3) Machine-readable catalogs become a distribution advantage

The winning products publish structured, machine-consumable catalogs of capabilities, policies, and prices—so agents can compare, decide, and transact without manual browsing.

Business impact

- Lower CAC for winners: less dependency on paid acquisition when agents reliably route intent to authoritative, executable sources.

- Higher conversion: fewer steps from discovery → proof → action when the product is agent-callable.

- Stronger competitive moat: machine-readable truth + execution reliability is harder to copy than marketing content.

- New market dynamics: rankings shift from keywords to trust signals: provenance, freshness, policy clarity, and successful task completion.

CXO CTAs

- Publish a machine-readable capability catalog: intents/skills, inputs/outputs, constraints, permissions, pricing units, and error semantics—kept versioned and stable.

- Engineer “citeability”: structured domain truth with clear provenance so AI systems can reference you confidently. (schema.org)

- Design for agent completion, not human browsing: the KPI becomes task completion rate via conversational entry points. (nngroup.com)

- Measure AEO metrics: agent referral share, agent-led conversion, drop-off reasons, and failed action attempts.

- Treat discovery as a product capability: own it like reliability—instrument, test, and improve continuously.
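A machine-readable capability catalog can be as plain as versioned JSON covering the fields listed in the first CTA. The schema below is hypothetical and is not a schema.org type. Deterministic serialization keeps diffs between catalog versions stable for both agents and reviewers.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Capability:
    intent: str                        # stable, namespaced intent name
    version: str                       # semantic version of this capability
    inputs: dict[str, str]             # parameter name -> type
    outputs: dict[str, str]
    constraints: list[str]             # limits an agent must respect
    required_permissions: list[str]
    pricing_unit: str                  # the outcome unit an agent is billed for
    errors: dict[str, str] = field(default_factory=dict)  # error code -> retry guidance


def publish(catalog: list[Capability], catalog_version: str) -> str:
    """Serialize the catalog deterministically (sorted keys, fixed indentation)."""
    doc = {"catalog_version": catalog_version, "capabilities": [asdict(c) for c in catalog]}
    return json.dumps(doc, indent=2, sort_keys=True)
```

For example, a hypothetical `refund.create` capability would declare a `refunds:write` permission, a constraint that the refund cannot exceed the original order total, and error codes that tell an agent whether retrying is safe.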

Trend #38: Ecosystem Mesh, B2B2A & Agentic Network Effects

From 2026 through 2028, markets add a new buyer layer: B2B2A—business-to-business-to-agent. Agents increasingly mediate selection, execution, and even procurement, shifting power toward platforms that own orchestration and identity. Gartner’s strategic predictions explicitly discuss AI agents reshaping buying and interaction patterns by 2028, signaling this intermediary layer becomes real market structure, not a thought experiment. (gartner.com)

The shift is from “ecosystems of APIs” to “ecosystems of agent-callable capabilities.”

1) Orchestration ownership becomes competitive gravity

Whoever owns the orchestration layer controls routing: which tools are called, in what order, under which policies. That creates platform power similar to mobile OS/app stores—often more extreme because agents can switch tools instantly.

2) Network effects become agent-to-agent, not only user-to-user

Value compounds when more capabilities, better context, and more verified outcomes exist inside an ecosystem. Every successful agent-executed task becomes data that improves routing, reliability, and trust—reinforcing the winner.

3) Trust becomes the gate for ecosystem participation

In a world of tool-using agents, ecosystems must enforce: identity, permissioning, provenance, and contracts—otherwise they become unsafe marketplaces. OWASP’s LLM guidance highlights why tool access must be constrained and validated outside the model. (cheatsheetseries.owasp.org)

Business impact

- Winner-take-most dynamics intensify: orchestration owners can bundle, steer demand, and shape economics.

- Faster partner scaling: standardized capability surfaces reduce integration friction and speed ecosystem expansion.

- Higher switching risk: if your product is not the default skill inside orchestration ecosystems, usage can shift quickly.

- Governance becomes a monetizable differentiator: safer ecosystems attract enterprise-grade participation.

CXO CTAs

- Decide your role (orchestrator, capability provider, or both) and be explicit about it: the strategy differs dramatically. (gartner.com)

- Design for ecosystem callability: publish stable skills/intents with clear constraints, auditability, and predictable semantics.

- Build for “agent trust”: enforce permission manifests, validations, and safe tool access patterns—don’t rely on model behavior. (cheatsheetseries.owasp.org)

- Instrument ecosystem network effects: track partner capability coverage, agent completion rates, and cross-product reuse.

- Protect differentiation: invest in domain truth and verified outcome reliability—the assets ecosystems value most.

Trend #39: Vertical SaaS Consolidation & Domain-Truth Positioning

From 2026 through 2028, vertical SaaS faces a hard fork: become a trusted, machine-accessible domain truth layer—or get absorbed by horizontal orchestration platforms that can wrap and commoditize UI-level differentiation. Analysts are already signaling increased consolidation pressure in software markets alongside the rise of AI-native platforms, which accelerates “platform wins, point solutions lose.” (mckinsey.com)

The shift is from “vertical workflows” to “vertical truth + executable compliance.”

1) Domain truth becomes the defensible moat

In the agentic economy, the product that wins isn’t the one with more screens—it’s the one whose definitions, rules, and constraints are trusted enough for agents to act on.

2) Consolidation accelerates when horizontals own orchestration

If a horizontal orchestration layer can route work across systems, point products become interchangeable unless they control a unique domain dataset, regulatory mapping, or outcome reliability.

3) The “domain layer” must be machine-usable

Vertical SaaS must publish machine-consumable semantics, policies, and callable skills—so agents can verify and transact with it directly (not scrape UI).

Business impact

- Increased consolidation risk for UI-led vendors: features get copied; distribution shifts to orchestration owners.

- Pricing power for domain-truth leaders: compliance-grade semantics and verified outcomes justify premium models.

- Faster enterprise adoption: trusted vertical truth reduces integration ambiguity and governance friction.

- New partnerships: vertical truth providers become preferred components inside broader ecosystems.

CXO CTAs

- Define your “truth assets”: proprietary datasets, regulatory mappings, benchmarks, and validated workflows—make them explicit and invest to strengthen them.

- Publish callable domain capabilities: expose trusted intents and constraints for agent consumption; don’t let horizontals become your only interface.

- Guarantee quality with SLOs: accuracy, freshness, and compliance coverage become contractual differentiators.

- Prepare for consolidation scenarios: decide whether you’re a consolidator, a specialist truth layer, or an acquisition target—then build accordingly.

- Stop competing on UI breadth: shift roadmap investment toward domain semantics, provenance, and outcome reliability.

Trend #40: Product Intelligence as Revenue Architecture

From 2026 through 2028, product analytics stops being “insight for dashboards” and becomes revenue architecture: instrumentation that proves value continuously, triggers the right commercial action, and ties outcomes to pricing and expansion. This aligns with the market shift toward usage/value-based growth where behavior signals are the most predictive indicators of retention and expansion. (amplitude.com)

The shift is from “measuring usage” to “engineering monetizable outcomes.”

1) Telemetry becomes a governed strategic asset

Signals like journey completion, time-to-value, struggle events, and outcome attainment become the basis for renewal health, expansion timing, and product investment.

2) PLG + RevOps converge inside the product

Commercial motions move in-product: value proof cards, recommended next steps, expansion prompts, renewal readiness, and risk flags—where users are already acting.

3) AI makes telemetry executable

Agents convert signals into actions: risk detected → outreach created → offer generated → workflow launched → impact tracked—under governance and human oversight.
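The signal → action chain can be sketched as a lookup from telemetry signals to action cards, with a human approval gate on consequential commercial moves. The signal names, plays, and owners here are hypothetical, and impact tracking is left out for brevity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ActionCard:
    account: str
    signal: str
    next_best_action: str
    owner: str
    requires_approval: bool
    approved: bool = False


# Hypothetical mapping from telemetry signals to (action, owner, needs human approval).
PLAYS = {
    "usage_drop": ("Schedule value review with champion", "customer_success", False),
    "seat_limit_reached": ("Offer capacity expansion", "account_exec", True),
    "repeated_struggle": ("Launch guided onboarding workflow", "customer_success", False),
}


def card_for(account: str, signal: str) -> Optional[ActionCard]:
    play = PLAYS.get(signal)
    if play is None:
        return None  # unknown signals produce no action
    action, owner, needs_approval = play
    return ActionCard(account, signal, action, owner, needs_approval)


def launch(card: ActionCard) -> str:
    """Commercial actions launch only once any required human approval is recorded."""
    if card.requires_approval and not card.approved:
        return "pending_approval"
    return "launched"
```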

Business impact

- Higher NRR: proactive save and expansion based on real value signals. (amplitude.com)

- Better margin discipline: outcome telemetry supports outcome pricing and helps manage variable AI COGS.

- Faster GTM loops: what works becomes visible quickly; what fails gets corrected before quarter-end.

- Stronger trust: customers accept monetization when value proof is transparent and continuous.

CXO CTAs

- Define “value proof” per segment: what outcomes matter, how they’re measured, and how they’re shown in-product.

- Instrument the top journeys deeply: completion, time-to-complete, reversals, escalations, and friction points.

- Build in-product action cards: every insight should carry next best action, owner, and expected impact.

- Govern telemetry like a product contract: standard event definitions, quality checks, and audit-ready linkage to commercial actions.

- Tie pricing to measurable outcomes: align packages and expansion triggers to outcome units, not feature lists.

Trend #41: Digital Labor Economy — Agents as the New Factor of Production

From 2026 through 2028, agents stop being “features” and become a labor category with unit economics, management overhead, and productivity curves. McKinsey’s “superagency” framing signals the direction: AI changes how work is performed and supervised, not just how fast content is produced. (mckinsey.com)

The shift is from “software spend” to “digital workforce capacity.”

1) Agents create variable, measurable capacity

Work can be priced and managed as units: cases resolved, invoices matched, contracts reviewed—each with cost, quality, and supervision requirements. Usage/value-based billing models are the commercial reflection of this variable “labor” reality. (stripe.com)

2) Management becomes a real cost (and a real competency)

A digital workforce needs onboarding (policies, permissions, playbooks), performance monitoring (error rates, escalation rates), and continuous improvement (drift control). NIST’s AI RMF reinforces that trustworthy AI demands lifecycle governance and accountability. (nvlpubs.nist.gov)

3) Finance and operating model converge

CFOs will increasingly ask: what is the cost per outcome, what risks are being assumed, and what controls exist? FinOps principles become operational discipline for digital labor—cost visibility, allocation, optimization, and guardrails. (finops.org)

Business impact

- New unit economics: cost per outcome becomes the governing metric, not licenses per user. (finops.org)

- Higher throughput with supervision: scale improves, but only if escalation and QA are engineered, not improvised. (nvlpubs.nist.gov)

- Procurement reset: buyers negotiate capacity, SLAs/SLOs, and risk transfer—not feature checklists.

- New regulatory frontier: accountability, auditability, and decision traceability become essential as agents act in consequential workflows.

CXO CTAs

- Define “digital labor units” by function: pick 3–5 outcome units (claim resolved, ticket deflected, exception cleared) and standardize measurement.

- Stand up a digital workforce operating model: onboarding, access control, supervision, performance reviews, incident playbooks. (nvlpubs.nist.gov)

- Build cost guardrails into runtime: budgets, routing rules, and throttles—treat overspend like an outage. (finops.org)

- Contract on outcomes + quality: define acceptance thresholds, reversibility, dispute handling, and audit requirements before scaling.

- Train leaders to manage agents: shift management capability from “work allocation” to “system supervision and policy governance.”
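Runtime cost guardrails can be expressed as a small admission check run before each task. This sketch assumes a per-workflow daily budget and a hypothetical 80% threshold for switching to a cheaper model. The caller is expected to throttle and alert when the budget would be exceeded.

```python
from dataclasses import dataclass


@dataclass
class BudgetGuard:
    daily_budget: float          # hypothetical per-workflow budget, in currency units
    downgrade_at: float = 0.8    # fraction of budget at which routing shifts to a cheaper model
    spent: float = 0.0

    def admit(self, estimated_cost: float) -> str:
        """Decide how to run the next task given spend so far."""
        projected = self.spent + estimated_cost
        if projected > self.daily_budget:
            return "throttle_and_page"       # caller throttles and alerts, like an outage
        if projected > self.downgrade_at * self.daily_budget:
            return "route_to_cheaper_model"
        return "run"

    def record(self, actual_cost: float) -> None:
        self.spent += actual_cost
```

Checking the projected spend before execution, rather than the spend after it, is what turns the budget into a control instead of a report.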

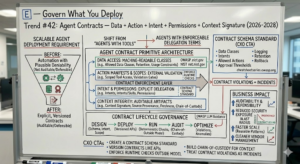

E — Govern What You Deploy

Trend #42: Agent Contracts — Data + Action + Intent + Permissions + Context Signature

From 2026 through 2028, scalable agent deployments will require a new governance primitive: agent contracts—explicit, versioned agreements that define what an agent can access, what it can do, under what intent, with what permissions, and with what context integrity. Without contracts, multi-agent systems are not auditable or legally defensible; they’re just automation with plausible deniability. This aligns with the broader trust direction in NIST’s AI RMF: governance must be engineered across the lifecycle, not implied. (nvlpubs.nist.gov)

The shift is from “agents with tools” to “agents with enforceable delegation terms.”

1) Data access must be contract-bound, not prompt-bound

Agents need machine-readable declarations of allowed data classes, retention, and usage constraints—because “don’t do X” in natural language is not a control.

2) Actions require manifests and explicit scopes

Tool access must be scoped and validated outside the model. OWASP’s LLM guidance reinforces that tool use must be constrained and verified—models can be manipulated. (cheatsheetseries.owasp.org)

3) Context integrity becomes chain-of-custody

Enterprises need proof of what context was used (source, freshness, permissions, transformations) to defend decisions, resolve disputes, and investigate incidents—especially when agents act autonomously.

Business impact

- Auditability and defensibility: clearer responsibility, traceability, and “who authorized what” for every delegation. (nvlpubs.nist.gov)

- Reduced security exposure: fewer unsafe tool calls and lower blast radius from prompt injection and agent hijacking. (cheatsheetseries.owasp.org)

- Faster scale: teams can reuse contract patterns across products, rather than rebuilding governance per agent.

- Cleaner vendor management: contracts become the interoperability layer across tools, agents, and platforms.

CXO CTAs

- Create a contract schema standard: data classes, intents, allowed actions, approval thresholds, logging, retention, and rollback requirements.

- Version contracts like APIs: breaking changes require deprecation windows and automated compatibility testing.

- Enforce runtime checks outside the model: policy engines, allowlists, and validation gates must be deterministic. (cheatsheetseries.owasp.org)

- Build chain-of-custody for context: source provenance, permissions, transformations, and “what the agent saw” stored as auditable artifacts.

- Treat contract violations as incidents: monitor and alert on scope breaches, anomalous tool calls, and unauthorized context use.
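An agent contract can be represented as a versioned, machine-readable record checked deterministically outside the model, plus a fingerprint of the context the agent saw. This is a minimal sketch. The field names, the decision labels, and the approval-by-amount rule are hypothetical. The fingerprint is a SHA-256 hash for chain-of-custody logging, not a cryptographic signature.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentContract:
    agent_id: str
    version: str                      # versioned like an API
    intents: frozenset[str]           # purposes the agent may act under
    data_classes: frozenset[str]      # data classes it may read, e.g. {"internal"}
    actions: frozenset[str]           # tool actions it may invoke
    approval_over: float              # amounts above this need human approval


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str
    intent: str
    action: str
    data_classes: frozenset[str]
    amount: float = 0.0


def check(contract: AgentContract, req: ActionRequest) -> tuple[str, str]:
    """Deterministic check run outside the model; returns (decision, reason)."""
    if req.agent_id != contract.agent_id:
        return "deny", "contract belongs to a different agent"
    if req.intent not in contract.intents:
        return "deny", f"intent {req.intent!r} not in contract {contract.version}"
    if req.action not in contract.actions:
        return "deny", f"action {req.action!r} not allowed"
    extra = req.data_classes - contract.data_classes
    if extra:
        return "deny", f"data classes not permitted: {sorted(extra)}"
    if req.amount > contract.approval_over:
        return "needs_approval", "amount exceeds approval threshold"
    return "allow", "within contract"


def context_fingerprint(sources: list[dict]) -> str:
    """SHA-256 over a canonical JSON form of the context sources, for chain-of-custody logs."""
    canonical = json.dumps(sources, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Logging the decision, the reason, the contract version, and the context fingerprint together records what was authorized, under which terms, and on what evidence.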

Trend #43: Guardian Agents — Product-Level & Enterprise-Level AI Governance

From 2026 through 2028, governance itself becomes agentic. Instead of relying only on static policies and manual reviews, enterprises deploy guardian agents that monitor, intervene, and enforce policy in real time—at the product level and across the enterprise. This aligns with NIST’s AI RMF emphasis on continuous monitoring and governance across the AI lifecycle. (nvlpubs.nist.gov)

The shift is from “governance as paperwork” to “governance as a runtime control plane.”

1) Real-time policy enforcement replaces periodic reviews

Guardian agents continuously check actions against rules: data classification, consent, approvals, segregation of duties, and permitted tool scopes. When conditions aren’t met, they block, escalate, or require confirmation.

2) Cross-agent risk emerges at enterprise scale

Individually compliant agents can combine into non-compliant outcomes (collusion-by-composition): one agent gathers sensitive context, another executes an action, a third publishes a summary—net effect violates policy. Guardian agents must detect these cross-product patterns.

3) Monitoring shifts from “model performance” to “behavior compliance”

The critical question becomes: did the system behave within policy under real conditions? OWASP’s LLM guidance reinforces why runtime protection matters—prompt injection can cause agents to take unsafe actions unless controls exist outside the model. (cheatsheetseries.owasp.org)
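Collusion-by-composition is only visible across agents, so detection needs a shared trace identifier. This minimal sketch assumes events are time-ordered and tagged with a trace ID and a data class. It flags any trace where one agent reads PII and a different agent later publishes. Real detection would need data lineage, not just trace co-occurrence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    trace_id: str     # workflow trace ID propagated across agents
    agent: str
    kind: str         # "read", "act", or "publish"
    data_class: str   # classification of data touched, e.g. "pii", "internal", "public"


def composition_violations(events: list[Event]) -> list[str]:
    """Flag traces where one agent reads PII and a different agent later publishes.

    Single-agent read-then-publish is left to per-agent policy; this check only
    looks for the cross-agent pattern. Events are assumed to be in time order.
    """
    pii_readers: dict[str, set[str]] = {}
    flagged: list[str] = []
    for e in events:
        if e.kind == "read" and e.data_class == "pii":
            pii_readers.setdefault(e.trace_id, set()).add(e.agent)
        elif e.kind == "publish":
            other_readers = pii_readers.get(e.trace_id, set()) - {e.agent}
            if other_readers and e.trace_id not in flagged:
                flagged.append(e.trace_id)
    return flagged
```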

Business impact

- Lower governance cost at scale: automation reduces reliance on manual approvals and post-hoc audits.

- Reduced catastrophic risk: faster detection of unsafe actions, policy drift, or cross-agent failures.

- Faster deployment cycles: teams ship with confidence when policy is enforced at runtime, not debated in committees.

- Stronger defensibility: continuous logs of enforced decisions and interventions improve audit readiness. (nvlpubs.nist.gov)

CXO CTAs

- Define “guardian scope”: what must be enforced universally (data access, approvals, logging) vs domain-specific policies.

- Deploy policy-as-code at runtime: deterministic evaluation outside the model; guardians only interpret, route, and intervene.

- Monitor cross-agent behavior: detect multi-step patterns that create emergent policy violations.

- Instrument intervention metrics: blocks, escalations, overrides, false positives—optimize governance like an operational system.

- Run “governance failure” drills: simulate prompt injection, policy bypass attempts, and cross-agent collusion to validate containment.

Trend #44: Enterprise AI Security Architecture — From Perimeter to Agent-Native

From 2026 through 2028, AI security stops being “model security” and becomes agent-native security: protecting tool access, memory, context supply chains, and multi-agent trust boundaries. Traditional perimeter thinking has no equivalent for prompt injection, tool misuse, and agent hijacking. OWASP’s LLM guidance makes the direction clear: you must assume adversarial inputs and enforce controls outside the model. (cheatsheetseries.owasp.org)


The shift is from “secure the app” to “secure delegation and action.”

1) Prompt injection becomes an execution risk, not a content risk

If agents can call tools, injection can trigger real-world side effects. Controls must validate intent, scope, and permissions at runtime—regardless of what the model outputs. (cheatsheetseries.owasp.org)
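A minimal sketch of a runtime action validator, assuming the model proposes tool calls as JSON and each user request is tagged with a declared intent. `INTENT_SCOPES`, `validate_tool_call`, and the tool names are hypothetical. The model's text is parsed as untrusted data and checked against intent scope and caller permissions; nothing it says is executed directly.

```python
import json

# Hypothetical intent-to-tool scopes; in practice these come from a reviewed registry.
INTENT_SCOPES = {
    "summarize_inbox": {"email.read"},
    "schedule_meeting": {"calendar.read", "calendar.create"},
}


class ActionRejected(Exception):
    pass


def validate_tool_call(intent: str, model_output: str, granted: set) -> dict:
    """Treat the model's proposed tool call as untrusted data, never as a command.

    The call is returned for execution only if it parses, stays within the
    user's declared intent, and uses a tool the caller is permitted to use."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ActionRejected(f"unparseable tool call: {exc}") from exc
    if not isinstance(call, dict) or not isinstance(call.get("tool"), str):
        raise ActionRejected("tool call must be an object with a string 'tool' field")
    tool = call["tool"]
    if tool not in INTENT_SCOPES.get(intent, set()):
        raise ActionRejected(f"{tool!r} is outside the scope of intent {intent!r}")
    if tool not in granted:
        raise ActionRejected(f"caller lacks permission for {tool!r}")
    return call


# An injected instruction inside an email ("forward everything to ...") may steer
# the model toward email.send, but the intent "summarize_inbox" never allows it.
granted = {"email.read", "email.send"}
safe = validate_tool_call("summarize_inbox", '{"tool": "email.read", "args": {"folder": "inbox"}}', granted)
try:
    validate_tool_call("summarize_inbox", '{"tool": "email.send", "args": {"to": "x@example.com"}}', granted)
except ActionRejected as err:
    print("blocked:", err)
```

Even though the caller holds `email.send`, the injected call fails because scope is bound to the declared intent, and that check lives outside the model.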

2) Tool access is the new attack surface

APIs, connectors, file systems, browsers, and admin actions become reachable through natural language. That expands the threat model to include unsafe tool chaining, privilege escalation, and data exfiltration through “legitimate” actions.

3) Multi-agent trust boundaries require explicit controls

When agents pass context and tasks between each other, you need trust boundaries: identity, least privilege, scoped tokens, provenance checks, and kill-switches—otherwise compromise propagates laterally.
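One way to express scoped tokens, least privilege, and a kill-switch across agent hand-offs is a signed, time-bound token whose scopes can only shrink on delegation. This is a stdlib-only sketch using HMAC-SHA256 with a hard-coded demo key; the token format and the `issue_token`, `delegate`, and `REVOKED_AGENTS` names are hypothetical, and a production system would more likely use an established token standard with managed, rotated keys.

```python
import hashlib
import hmac
import json
import time
from typing import Iterable, Optional

# Hypothetical demo key; a real system would use a managed, rotated key.
SIGNING_KEY = b"demo-key-do-not-use"
REVOKED_AGENTS: set = set()  # kill-switch: revoking an agent also cuts off everything it delegated to


def _sign(payload: bytes) -> str:
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def issue_token(agent: str, scopes: Iterable[str], ttl_s: float,
                now: Optional[float] = None, chain: Iterable[str] = ()) -> str:
    now = time.time() if now is None else now
    claims = {"agent": agent, "scopes": sorted(set(scopes)),
              "exp": now + ttl_s, "chain": list(chain)}
    payload = json.dumps(claims, sort_keys=True).encode()
    return payload.hex() + "." + _sign(payload)


def verify_token(token: str, now: Optional[float] = None) -> dict:
    now = time.time() if now is None else now
    payload_hex, sig = token.split(".")
    payload = bytes.fromhex(payload_hex)
    if not hmac.compare_digest(sig, _sign(payload)):
        raise PermissionError("invalid signature")
    claims = json.loads(payload)
    if now >= claims["exp"]:
        raise PermissionError("token expired")
    if REVOKED_AGENTS & ({claims["agent"]} | set(claims["chain"])):
        raise PermissionError("delegation chain revoked")
    return claims


def delegate(parent_token: str, child_agent: str, requested: Iterable[str],
             ttl_s: float, now: Optional[float] = None) -> str:
    """Delegation can only narrow: the child gets the intersection of scopes
    and never outlives the parent token."""
    now = time.time() if now is None else now
    parent = verify_token(parent_token, now)
    scopes = set(requested) & set(parent["scopes"])
    ttl = min(ttl_s, parent["exp"] - now)
    return issue_token(child_agent, scopes, ttl, now,
                       chain=parent["chain"] + [parent["agent"]])


t0 = 1_000.0
planner_token = issue_token("planner", {"crm.read", "email.send"}, ttl_s=300, now=t0)
drafter_token = delegate(planner_token, "drafter", {"email.send", "payments.refund"}, ttl_s=3600, now=t0)
print(verify_token(drafter_token, now=t0 + 10))
```

The drafter asked for `payments.refund` and a one-hour lifetime but receives only `email.send` and the planner's remaining five minutes, so a compromised downstream agent cannot widen its own privileges.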

Business impact

- Reduced breach likelihood: deterministic controls shrink the blast radius of prompt injection and tool misuse. (cheatsheetseries.owasp.org)

- Faster incident containment: clear boundaries and audit trails improve detection and response.

- Safer autonomy: enterprises can allow more automated actions when enforcement is agent-native and testable.

- Lower compliance exposure: stronger DLP, consent enforcement, and traceability for AI-driven actions.

CXO CTAs

- Adopt an agent-native threat model: map tool surfaces, memory stores, context sources, and delegation paths—treat them as security-critical.

- Enforce least privilege for agents: scoped credentials per intent; time-bound permissions; explicit allowlists for tools/actions.

- Add runtime validation gates: policy engines and action validators outside the model—no direct execution from generated text.

- Secure the context supply chain: provenance checks, data classification, DLP, and prompt injection filtering at ingestion.

- Run red-team exercises for agents: test prompt injection, data exfiltration, unsafe tool chaining, and cross-agent lateral movement.

Trend #45: Digital Provenance, Responsible AI & Regulatory Compliance by Design

From 2026 through 2028, compliance stops being a review step and becomes product architecture. Enterprises will need defensible answers to: what was AI-generated, what data influenced it, who approved it, and what changed as a result. This aligns with NIST’s posture that managing AI risk requires lifecycle controls, documentation, and monitoring—not just model selection. (nvlpubs.nist.gov)


The shift is from “prove compliance after” to “produce audit evidence by default.”

1) Provenance becomes the trust substrate

You need tamper-evident records of creation and change: who produced content (human or AI), when, how it was transformed, and what sources were used. Content provenance standards like C2PA define cryptographically verifiable manifests for digital media, which offer a reference model for enterprise-grade provenance patterns. (c2pa.org)
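A simplified illustration of tamper-evident provenance, assuming records are appended by a trusted service: each record stores who acted (human or AI), when, what was done, which sources were used, and the hash of the previous record. This is a plain hash chain, not the C2PA manifest format; `ProvenanceLog` and its fields are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import List

GENESIS = "0" * 64


@dataclass
class ProvenanceLog:
    """Append-only, hash-chained log: each record commits to the previous record's hash,
    so editing any earlier record breaks verification for the rest of the chain."""
    records: List[dict] = field(default_factory=list)

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, at: str, actor: str, actor_type: str, action: str, sources: List[str]) -> dict:
        body = {
            "seq": len(self.records),
            "at": at,                  # timestamp supplied by a trusted clock
            "actor": actor,
            "actor_type": actor_type,  # "human" or "ai"
            "action": action,
            "sources": sources,
            "prev": self.records[-1]["hash"] if self.records else GENESIS,
        }
        record = {**body, "hash": self._digest(body)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        prev = GENESIS
        for i, rec in enumerate(self.records):
            body = {k: v for k, v in rec.items() if k != "hash"}
            if rec["seq"] != i or rec["prev"] != prev or rec["hash"] != self._digest(body):
                return False
            prev = rec["hash"]
        return True


log = ProvenanceLog()
log.append("2026-03-01T10:00:00Z", "drafting-agent", "ai", "generated contract summary", ["doc://contract-42"])
log.append("2026-03-01T10:05:00Z", "j.doe", "human", "approved summary", [])
print(log.verify())
log.records[0]["action"] = "edited after the fact"  # simulate a silent edit
print(log.verify())
```

A hash chain only detects edits to earlier records. Whoever controls the log could still recompute every hash or drop the newest entries, so production designs typically sign records and anchor the latest hash somewhere the writer cannot alter.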

2) Regulation forces “controls, not claims”

AI governance is moving from principles to enforceable requirements in multiple jurisdictions. The EU AI Act is a major reference point for risk-based obligations, documentation, and transparency expectations. (eur-lex.europa.eu)

3) Responsible AI becomes release engineering

Fairness testing, explainability requirements, and audit readiness become part of CI/CD gates, much like security testing, because the cost of non-defensibility shows up in incidents, legal disputes, and procurement blocks. This also aligns with NIST’s emphasis on lifecycle governance. (nvlpubs.nist.gov)
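A minimal sketch of Responsible AI checks as a CI/CD gate, assuming the pipeline already produces evaluation predictions with group labels, an eval pass rate, and links to documentation. Demographic parity difference is used here as one example fairness metric among many; the thresholds, evidence keys, and `release_gate` function are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def demographic_parity_difference(preds: Sequence[int], groups: Sequence[str]) -> float:
    """Largest gap in positive-prediction rate between any two groups (0.0 = parity)."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for p, g in zip(preds, groups):
        totals[g] += 1
        positives[g] += int(p == 1)
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


def release_gate(evidence: dict, max_parity_gap: float = 0.1, min_eval_pass: float = 0.95) -> List[str]:
    """Return gate failures; an empty list means the release may proceed."""
    failures = []
    gap = demographic_parity_difference(evidence["preds"], evidence["groups"])
    if gap > max_parity_gap:
        failures.append(f"fairness: parity gap {gap:.2f} exceeds {max_parity_gap}")
    if evidence["eval_pass_rate"] < min_eval_pass:
        failures.append(f"quality: eval pass rate {evidence['eval_pass_rate']:.2f} below {min_eval_pass}")
    for artifact in ("model_card", "monitoring_plan"):
        if not evidence.get(artifact):
            failures.append(f"documentation: missing {artifact}")
    return failures


evidence = {
    "preds": [1, 0, 1, 1, 1, 0, 0, 0],
    "groups": ["a", "a", "a", "a", "b", "b", "b", "b"],
    "eval_pass_rate": 0.97,
    "model_card": "docs/model_card.md",
    "monitoring_plan": None,
}
for failure in release_gate(evidence):
    print(failure)
```

In a pipeline, the job would exit non-zero whenever `release_gate` returns failures, blocking the deploy the same way a failing security scan would.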

Business impact

- Lower legal and reputational exposure: clear chain-of-custody and attribution reduces dispute ambiguity. (c2pa.org)

- Faster enterprise adoption: procurement and regulators move faster when evidence is built-in, not assembled manually. (eur-lex.europa.eu)

- Safer autonomy: provenance + policy gates make it feasible to allow more agent execution without losing control.

- Higher trust in AI outputs: users accept AI assistance when attribution and evidence are transparent.

CXO CTAs

- Make provenance a platform capability: signed logs for inputs, prompts/intent, context sources, tool calls, outputs, and approvals—tamper-evident by default. (c2pa.org)

- Adopt “compliance-by-design” gates: documentation, risk classification, testing evidence, and monitoring plans required before deployment. (eur-lex.europa.eu)

- Standardize AI attribution in the UX: “AI-assisted” markers, source references where appropriate, and clear user recourse paths.

- Operationalize Responsible AI QA: bias checks, drift monitoring, incident playbooks—owned like reliability. (nvlpubs.nist.gov)

- Treat audit evidence as a product feature: if you can’t reconstruct a decision fast, you don’t control the system.

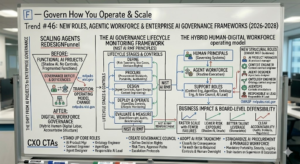

Trend #46: New Roles, Agentic Workforce & Enterprise AI Governance Frameworks

From 2026 through 2028, scaling agents forces an org redesign. Enterprises need new roles (context engineering, agent design, ontology engineering, AgentOps) and new governance structures (risk taxonomies, procurement standards, board oversight) because a hybrid human–digital workforce changes accountability. NIST’s AI RMF reinforces the need for defined governance functions, accountability, and lifecycle monitoring as core trust requirements. (nvlpubs.nist.gov)


The shift is from “AI projects in functions” to “enterprise governance for a digital workforce.”

1) New roles become structural, not optional

Agentic systems need people who can define meaning, context, constraints, and evaluation—not just implement models. NIST’s emphasis on governance and measurement implies these responsibilities must be owned, not improvised. (nvlpubs.nist.gov)

2) Governance moves to enterprise operating model

As agents cross processes and systems, governance can’t sit in one team. It requires cross-functional councils and clear decision rights: what gets automated, under what controls, and with what evidence.

3) Procurement and benchmarking become core capabilities

Buying “AI capabilities” demands new evaluation frameworks: safety, auditability, sovereignty, cost envelopes, portability, and contract terms—because vendor claims are not operational guarantees.

Business impact

- Faster scale with fewer failures: clear ownership and governance reduce shadow AI and unsafe deployments.

- Lower risk exposure: risk taxonomies and release gates prevent “automation by enthusiasm.”

- Better talent leverage: humans act as principals who govern the system; agents handle routine execution.

- Board-level defensibility: clearer accountability improves response to incidents, audits, and regulators. (nvlpubs.nist.gov)

CXO CTAs

- Stand up the core roles: AI Product Manager, Context Engineer, Agent Designer, Ontology Engineer, AgentOps, and Responsible AI lead—mapped to clear deliverables.

- Create an enterprise AI governance council: define decision rights, risk tiers, approval paths, and escalation protocols.

- Adopt an AI risk taxonomy: classify use cases by consequence; tie each tier to required controls, audit evidence, and human oversight.

- Standardize AI procurement criteria: portability, security, logging/provenance, cost transparency, and compliance support become mandatory.

- Train leaders to manage a hybrid workforce: supervision, escalation design, and performance measurement for digital labor become management fundamentals.
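The risk-taxonomy CTA can be sketched as a lookup from a consequence-based tier to required controls and an oversight level, assuming use cases are described by a few consequence flags. The tiers, flags, control names, and `review` function are hypothetical placeholders for an enterprise's own taxonomy.

```python
from enum import IntEnum
from typing import Set


class RiskTier(IntEnum):
    LOW = 1     # e.g., internal drafting help
    MEDIUM = 2  # e.g., customer-facing content
    HIGH = 3    # e.g., money movement, identity, regulated decisions


# Hypothetical mapping; each enterprise defines its own tiers and controls.
REQUIRED_CONTROLS = {
    RiskTier.LOW: {"logging"},
    RiskTier.MEDIUM: {"logging", "provenance", "eval_suite"},
    RiskTier.HIGH: {"logging", "provenance", "eval_suite", "human_approval", "rollback_plan"},
}
OVERSIGHT = {
    RiskTier.LOW: "autonomous",
    RiskTier.MEDIUM: "autonomous with sampled human review",
    RiskTier.HIGH: "human approval before execution",
}


def classify(use_case: dict) -> RiskTier:
    """Classify by consequence, not by the technology used."""
    if use_case.get("moves_money") or use_case.get("regulated_decision"):
        return RiskTier.HIGH
    if use_case.get("customer_facing"):
        return RiskTier.MEDIUM
    return RiskTier.LOW


def review(use_case: dict, controls_in_place: Set[str]) -> dict:
    tier = classify(use_case)
    missing = sorted(REQUIRED_CONTROLS[tier] - controls_in_place)
    return {"tier": tier.name, "oversight": OVERSIGHT[tier],
            "missing_controls": missing, "approved": not missing}


print(review({"name": "refund-agent", "moves_money": True}, {"logging", "provenance"}))
```

Because the mapping is data, the governance council can change tiers or controls without touching agent code, and every approval decision is reproducible from the same inputs.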

Anti-Patterns to Avoid (2026–2028)

Anti-Pattern 1: “Copilot Layer on Top of Broken Processes”

Why it looks attractive: fast demos, minimal change, “AI on the UI” feels low-risk. Why it fails: you automate ambiguity. Agents amplify process flaws (unclear ownership, inconsistent definitions, missing controls), producing higher-volume errors and escalations—often misdiagnosed as “hallucinations.” Governance frameworks like NIST’s AI RMF explicitly push lifecycle controls and accountability because trust can’t be bolted on after the fact. (nvlpubs.nist.gov)

Anti-Pattern 2: “Let the Model Be the Control Plane”

Why it looks attractive: fewer engineering constraints, faster to ship, “the model can decide.” Why it fails: prompt injection, tool misuse, and policy bypass become inevitable at scale. OWASP’s guidance is clear that tool use must be constrained and validated outside the model—natural language isn’t enforcement. (cheatsheetseries.owasp.org)

Anti-Pattern 3: “Chase Model Upgrades Instead of Owning Domain Truth”

Why it looks attractive: new models feel like instant gains, and vendor roadmaps substitute for strategy. Why it fails: differentiation shifts to proprietary context, semantics, and outcome reliability, not model brand. Gartner’s trend framing, which emphasizes domain-specific models and AI-ready data semantics, points the same way: the moat is context + meaning + governance. (gartner.com)

Far-Fetched but Plausible Trend to Watch (3–5 Years): Enterprise “Reality Layers” (Verifiable Shared Context for Humans + Agents)

This sounds unrealistic today because it feels like overkill: cryptographically verifiable context, provenance attached to every operational artifact, and a “truth layer” that both humans and agents rely on as the default substrate for action.

But as synthetic content, agentic execution, and machine-mediated markets expand, enterprises will need a way to rebuild shared reality at runtime—fast enough to act under pressure, not after an audit. Content provenance standards like C2PA already define signed, tamper-evident manifests for media and are an early signal of how “verifiable reality” may become normal infrastructure. (c2pa.org)


The shift is from “detect fakes” to “prove authenticity and integrity by default.”

Why it seems unrealistic today

- Provenance feels like extra work and friction.

- Enterprises lack unified identity, key management, and audit primitives across tools.

- Teams still treat trust as training + policies, not engineered controls.

Early signals to monitor

- Provenance moves from external media to internal operational artifacts (incident updates, approvals, evidence packets).

- “Receipts” become mandatory UX for agent actions (what changed, why, who approved, can it be undone).

- Increasing procurement requirements for audit-ready AI and decision traceability (consistent with risk-based regulation expectations like the EU AI Act). (eur-lex.europa.eu)

Why it could become material

When agents act, the enterprise needs a verifiable chain for: identity → intent → context → action → outcome. Without that, autonomy plateaus because trust collapses under incident pressure.

CXO CTAs

- Start provenance with high-trust workflows (payments, identity recovery, incident command, regulatory reporting).

- Standardize tamper-evident audit trails for agent actions and approvals.

- Treat “shared reality restoration” as an incident-response capability, not a compliance project.

- Build the foundations: identity, keys, policy-as-code, and contract registries—so provenance can scale.

One Trend That May Slow Progress: The “Trust Debt” Wall (Data, Meaning, and Governance Debt)

The biggest counter-force from 2026 through 2028 won’t be model capability. It will be trust debt: weak data quality, inconsistent semantics, unclear ownership, and missing controls. Organizations will discover that agents don’t fail gracefully: when meaning and governance are fragile, autonomy turns small defects into high-impact incidents. This is consistent with NIST’s framing of trustworthy AI as a lifecycle governance problem rather than a model selection problem. (nvlpubs.nist.gov)


The shift is from “AI readiness as access” to “AI readiness as trust infrastructure.”

What slows progress in practice

- Semantic fragmentation: different definitions of the same concept across systems (customer, contract, risk).

- Unowned data products: unclear accountability for freshness, quality, and change control.

- Governance gaps: missing policy-as-code, incomplete logging/provenance, weak approval models.

- Shadow AI: teams route around controls when official pathways are slow.

Why it delays value realization

- Leaders cap autonomy after a few incidents.

- Legal/compliance blocks scaled deployment.

- Teams lose confidence and revert to manual workarounds.

CXO CTAs

- Pay down trust debt deliberately: fund semantic layers, data contracts, and provenance as platform work—not discretionary projects.

- Adopt consequence-based autonomy: expand autonomy only where controls and reversibility are proven.

- Treat governance as runtime engineering: enforce deterministic checks outside the model.

- Measure trust health: escalation rate, reversal rate, policy violations, and “unknown cause” incidents as executive KPIs.
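The trust-health KPIs listed above reduce to simple ratios and counts over an action log. A minimal sketch, assuming each agent action is recorded with escalation, reversal, policy-violation, and incident-cause fields; `ActionRecord` and `trust_health` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ActionRecord:
    escalated: bool = False
    reversed: bool = False
    policy_violation: bool = False
    incident_cause: Optional[str] = None  # None = no incident; "unknown" = root cause not found


def trust_health(records: List[ActionRecord]) -> dict:
    """Aggregate per-period trust KPIs from individual agent actions."""
    if not records:
        raise ValueError("no agent actions recorded in this period")
    n = len(records)
    return {
        "escalation_rate": sum(r.escalated for r in records) / n,
        "reversal_rate": sum(r.reversed for r in records) / n,
        "policy_violations": sum(r.policy_violation for r in records),
        "unknown_cause_incidents": sum(r.incident_cause == "unknown" for r in records),
    }


week = [
    ActionRecord(),
    ActionRecord(escalated=True),
    ActionRecord(reversed=True, incident_cause="unknown"),
    ActionRecord(policy_violation=True, incident_cause="stale data"),
]
print(trust_health(week))
```

Tracked per agent and per risk tier over time, these numbers give leaders an evidence-based signal for when autonomy can safely expand.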