Sovereign AI and the Fragmented Cloud: How Tech Leaders Deal with the New Geopolitics of Intelligence

Sovereignty is now a problem for the CTO

AI is no longer just an “app”; it’s becoming decision infrastructure. Because of this, sovereignty has gone from being legal fine print to a core technology strategy.

The board’s question (“Where does our AI actually live, and who controls it?”) is really four questions: where data is stored and processed, who can access it (including operators and governments), which jurisdictions claim authority, and how quickly you can change course when rules or geopolitics shift.

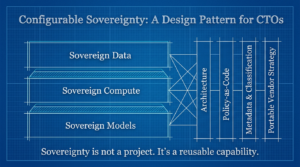

The practical answer is not a one-time project. It’s a configurable sovereignty capability: a platform and operating model that can enforce locality and control requirements without stopping innovation, and that keeps the business running through regulatory changes, export controls, provider disruptions, and regional access limits. The job of the CTO/CDO is to turn sovereignty from a reactive constraint into a repeatable design pattern.

That capability spans sovereign data, sovereign compute, and sovereign models, implemented through architecture, policy-as-code, strong metadata and classification, and a vendor strategy designed for portability and shock absorption.

1) Why do boards now ask, “Where does our AI live, and who controls it?”

Boards didn’t suddenly become interested in cloud regions. They are asking because AI makes the business consequences of infrastructure choices visible. When AI drives decisions about credit, pricing, eligibility, triage, forecasting, and security actions, jurisdictional control becomes a business risk.

What has changed in the last 24 to 36 months?

- AI supply chains are now real. Training data, foundation models, fine-tuning, retrieval pipelines, agent tools, and inference endpoints are all part of a distributed system that crosses vendors and countries. Sovereignty issues affect every link, not just the database.

- The rules now cover more than just “data.” The EU AI Act makes this clear: it entered into force in 2024 and phases in obligations, including requirements for general-purpose AI models, between 2025 and 2027.

- Providers started to offer “sovereign” variants as a distinct product category. AWS, for example, announced a dedicated European Sovereign Cloud with its own operations and governance structures in the EU.

- It became clear that “data residency” and “control” are not the same thing. Even when data stays in-country, questions remain about who can access it, who can compel disclosure, and whose law applies. This is especially acute where laws have extraterritorial reach, such as the U.S. CLOUD Act.

The board’s main worry isn’t that you’ll break a rule; it’s that you won’t be able to prove that you’re following it, adapt quickly enough, or keep going if a dependency becomes limited.

2) From data sovereignty to AI sovereignty: what’s new?

Sovereignty has evolved in waves:

- Data residency: “Keep personal data in the country or region.”

- Sovereign cloud: “Run cloud services with extra local controls and guarantees.”

- Sovereign AI: “Control how models behave, who can access them, where they come from, and where inference happens.”

AI changes the rules of the game by adding new layers of sovereignty:

- Training data: where it came from, if it can be used for training, and if derived artifacts like embeddings, gradients, and checkpoints are regulated.

- Model weights and variants: where they are kept, who can use them, and whether a jurisdiction can ask for access or limit their use or export.

- Inference endpoints: where requests are handled, whether prompts and outputs are retained, and what telemetry leaves the jurisdiction.

- Agent toolchains: which systems agents can use (such as ERP, HR, and payment rails), what actions they can take, and where those tools and their logs are stored.

Sovereignty is no longer just “where the database sits.” It’s also where intelligence is made, stored, run, and checked.

3) The three parts of sovereign AI

Think of sovereign AI as a design space with three axes. Many businesses fail because they optimize only one axis (data residency) and ignore the others.

A) Sovereign data (location, access, jurisdiction)

This includes:

- Location: where data is stored and processed (at rest and in use).

- Access: who can reach it, including customer admins, vendor operators, subcontractors, and government authorities.

- Jurisdiction: which legal systems can force disclosure or set limits.

The main problem is that data can be “resident” but not “controlled.” You need a posture for operator access, encryption key control, and auditability, not just a region choice.
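To make the resident-versus-controlled distinction concrete, here is a minimal Python sketch. The `DataPosture` fields and region codes are hypothetical stand-ins for whatever your data inventory records; the point is that residency is one condition, while control adds key ownership, gated operator access, and auditability on top of it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataPosture:
    # Hypothetical fields for illustration; map them to your own inventory.
    storage_region: str          # where data is stored at rest
    processing_region: str       # where data is processed (in use)
    keys_customer_held: bool     # encryption keys controlled by the business
    operator_access_gated: bool  # vendor operator access needs customer approval
    access_audited: bool         # every access produces an audit record


def is_resident(p: DataPosture, jurisdiction: str) -> bool:
    """Residency: data at rest and in use stays in the jurisdiction."""
    return p.storage_region == jurisdiction and p.processing_region == jurisdiction


def is_controlled(p: DataPosture, jurisdiction: str) -> bool:
    """Control: residency plus key control, gated operator access, and auditability."""
    return (
        is_resident(p, jurisdiction)
        and p.keys_customer_held
        and p.operator_access_gated
        and p.access_audited
    )
```

A dataset stored and processed in Germany with provider-held keys passes `is_resident` but fails `is_controlled`, which is exactly the gap a region choice alone leaves open.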

B) Sovereign compute and cloud (in-region, in-country, or partner-operated)

Here, sovereignty is about who runs the infrastructure and under which rules. Providers are moving toward “EU-operated sovereign environments” and “sovereign landing zone” patterns that build sovereignty controls into the platform foundation.

C) Sovereign models (hosted, trained, and governed in-jurisdiction)

Model sovereignty is not binary (hosted versus not hosted). Think in levels:

- Hosted control: model weights and inference run in the country, with local access controls and audit logs.

- Training control: fine-tuning and training are done on local data with local rules.

- Lifecycle control: approval, evaluation, safety policies, and rollback are all done within the jurisdiction, and evidence is kept there.

- Behavioral control: policy restrictions differ from one jurisdiction to another (for example, sector rules and restricted content) and must be enforceable without splitting the whole product.

4) The platform lens: configurable sovereignty, not one-offs

The most effective approach is to make configurable sovereignty a platform feature: one global product architecture with regional control planes and policy-driven boundaries.

Why custom stacks don’t work:

- Every fork that is specific to a certain jurisdiction turns into a slow, expensive mini-cloud.

- AI systems change every week, and sovereign forks force you to choose between following the rules and getting things done quickly.

- Audit evidence becomes inconsistent, and the board loses confidence in your ability to demonstrate control.

A platform approach means:

- A global “core” that stays the same (standards, controls, developer experience)

- Localized “enforcement” (where data is stored, who can access it, and where models can be deployed)

- One way to handle approvals, exceptions, and evidence

You don’t build a new app for each threat model; you build controls that adapt across risk contexts.
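One way to read “global core, localized enforcement” is as configuration layering. The sketch below assumes a hypothetical `Controls` record and a rule that regional overlays may only tighten the global baseline (narrow regions, shorten retention, revoke operator access), never loosen it. This is an illustrative design choice, not a prescribed schema.

```python
from dataclasses import dataclass, replace
from typing import Iterable, Optional


@dataclass(frozen=True)
class Controls:
    allowed_regions: frozenset  # regions where workloads may run
    retention_days: int         # maximum retention for prompts and outputs
    operator_access: bool       # whether vendor operators may access data


# Hypothetical global core shared by every region.
GLOBAL_CORE = Controls(
    allowed_regions=frozenset({"eu", "us", "apac"}),
    retention_days=90,
    operator_access=True,
)


def localize(
    core: Controls,
    *,
    allowed_regions: Optional[Iterable[str]] = None,
    retention_days: Optional[int] = None,
    operator_access: Optional[bool] = None,
) -> Controls:
    """Apply a regional overlay that may only tighten the global core."""
    result = core
    if allowed_regions is not None:
        # Intersection: a region can narrow the set but never add to it.
        result = replace(result, allowed_regions=core.allowed_regions & frozenset(allowed_regions))
    if retention_days is not None:
        # Shorter retention wins; a longer request is clamped to the core.
        result = replace(result, retention_days=min(core.retention_days, retention_days))
    if operator_access is not None:
        # Access can be revoked locally but not granted if the core forbids it.
        result = replace(result, operator_access=core.operator_access and operator_access)
    return result
```

Under this rule, an overlay that asks for an extra region or longer retention is clamped to the core, so no jurisdiction becomes a backdoor around enterprise standards. Some teams prefer to reject such overlays outright; either choice keeps the core authoritative.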

5) Architectural patterns that work: when to centralize and when to localize

Pattern 1: Hub-and-spoke (with a global core and regional spokes)

Use this when you need to keep regulated data and inference local but want your products to be the same all over the world.

- Global hub: shared model governance, evaluation pipelines, code artifacts, policy definitions, and global telemetry schemas (not always raw data).

- Regional spokes: local data stores, local RAG indexes and embeddings, local inference endpoints, local audit evidence, and local incident response.

Centralize: standards, templates, evaluation frameworks, and “golden” controls.

Localize: regulated datasets, prompt and output retention, identity logs, and inference for restricted workloads.
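The hub-and-spoke split can be enforced at the routing layer. This minimal sketch assumes hypothetical spoke endpoints and a small set of localized data classes; the key behavior is that regulated requests fail closed when no in-jurisdiction spoke exists, rather than quietly falling back to the global hub.

```python
# Hypothetical endpoints; the names and URLs are placeholders.
SPOKES = {
    "eu": "https://inference.eu.example.internal",
    "us": "https://inference.us.example.internal",
}
HUB = "https://inference.global.example.internal"

# Data classes assumed to require in-jurisdiction inference.
LOCALIZED_CLASSES = {"pii", "health", "financial"}


class NoCompliantSpoke(Exception):
    """Raised when a regulated request has no in-jurisdiction endpoint."""


def route_inference(jurisdiction: str, data_class: str) -> str:
    if data_class in LOCALIZED_CLASSES:
        # Regulated data: the regional spoke or nothing; never the hub.
        try:
            return SPOKES[jurisdiction]
        except KeyError:
            raise NoCompliantSpoke(
                f"no spoke for {jurisdiction!r} / {data_class!r}"
            ) from None
    # Unregulated workloads may use the shared global hub.
    return HUB
```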

Pattern 2: Federated AI (centralized control with local training and inference)

Use this when data can’t leave the jurisdiction and the model’s behavior has to be grounded in local facts.

- Train and fine-tune locally with datasets that have been approved.

- Run model cards, evaluation suites, red-team tests, and release approvals through one common process, even when the artifacts stay local.

Pattern 3: Bring your own key (BYOK) and keep control of the keys

This is essential if your sovereignty posture depends on excluding unauthorized access, including by the provider’s own operators. If the business controls the keys and policy governs when they are released, the risk profile changes significantly, especially in operator-access scenarios.
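A key-release policy of this kind can be expressed as a small, testable rule. The sketch below is hypothetical: the requester labels, purposes, and regions are assumptions, and in practice the check would sit in front of a customer-controlled KMS or HSM rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KeyRequest:
    requester_kind: str     # assumed labels: "workload", "customer_admin", "vendor_operator"
    requester_region: str
    purpose: str
    customer_approval: bool = False  # explicit per-request approval by the business


@dataclass(frozen=True)
class KeyReleasePolicy:
    key_region: str
    approved_purposes: frozenset

    def allows(self, req: KeyRequest) -> bool:
        # Keys are only released inside the key's home jurisdiction.
        if req.requester_region != self.key_region:
            return False
        # Only purposes the business has approved in advance.
        if req.purpose not in self.approved_purposes:
            return False
        # Vendor operators never get keys without explicit customer approval.
        if req.requester_kind == "vendor_operator" and not req.customer_approval:
            return False
        return True
```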

Pattern 4: Bring your own compute (BYOC) for high-sovereignty zones

Use this when you need to run inference or training on infrastructure you own (or in a government or regulated enclave) but still want standard tooling and model lifecycle governance.

What really matters in these patterns

Not the diagram, but the enforcement points:

- Identity and access boundaries: separate admin planes per jurisdiction.

- Data-plane egress controls: prevent undetected cross-border data movement.

- Policy decision points: runtime checks that decide “where this request may execute” and “what tools this agent may call.”

- Evidence capture: immutable, locally retained, audit-ready logs.
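For evidence capture, one common technique is a hash-chained, append-only log in which each entry commits to the previous one. The minimal Python sketch below (class and field names are hypothetical) makes tampering detectable rather than impossible; true immutability still depends on write-once storage kept in the region.

```python
import hashlib
import json


class EvidenceLog:
    """Append-only, hash-chained log: editing any past entry breaks verification."""

    GENESIS = "0" * 64

    def __init__(self, region: str):
        self.region = region  # where this log is stored; kept local by policy
        self.entries = []

    def _digest(self, prev: str, event: dict) -> str:
        body = json.dumps({"prev": prev, "region": self.region, "event": event}, sort_keys=True)
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        digest = self._digest(prev, event)
        self.entries.append({"prev": prev, "event": event, "hash": digest})
        return digest

    def verify(self) -> bool:
        # Recompute every hash from the start; any edit changes a digest.
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or self._digest(prev, entry["event"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```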

6) Designing for changing regulations: regulation as a variable

Assume that regulations will change. Your architecture should treat locality rules as configuration, not code.

Ways to make it work:

- Policy engines and policy-as-code: encode limits on data classes, allowed regions, allowed operators, retention and logging rules, and agent tool permissions.

- Metadata and classification are the sovereignty control surface. You can’t route a dataset correctly or prove compliance if you don’t know what it is (PII, export-controlled, financial, health, defense-adjacent).

- Separation of concerns: product logic shouldn’t contain “Germany-only” branches; policy should.

A helpful way to think about it:

Classification determines placement. Policy determines what is allowed to happen. Telemetry shows what actually happened.
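The classification, policy, and telemetry chain can be sketched as one small loop: a classification label goes in, a policy lookup decides, and a telemetry record captures the outcome. In this sketch the data classes, regions, and retention values are illustrative assumptions, and the policy is loaded from a JSON document so that a new rule (such as a Germany-only constraint) is a configuration change, not a code change.

```python
import json

# Policy as configuration: classes, regions, and retention values are
# illustrative assumptions, not recommendations.
POLICY_JSON = """
{
  "pii":       {"regions": ["eu"],             "retention_days": 30},
  "financial": {"regions": ["eu", "uk"],       "retention_days": 365},
  "public":    {"regions": ["eu", "uk", "us"], "retention_days": 90}
}
"""
POLICY = json.loads(POLICY_JSON)

telemetry = []  # stand-in for the evidence pipeline


def decide(data_class: str, target_region: str) -> dict:
    """Policy: decide whether a classified dataset may be placed in a region."""
    rule = POLICY.get(data_class)
    if rule is None:
        # Unclassified data cannot be routed safely, so deny by default.
        return {"allowed": False, "reason": f"unclassified: {data_class!r}"}
    if target_region not in rule["regions"]:
        return {"allowed": False, "reason": f"{data_class} not allowed in {target_region}"}
    return {"allowed": True, "reason": "policy match", "retention_days": rule["retention_days"]}


def place(data_class: str, target_region: str) -> bool:
    """Classification in, decision out, and a record of what happened."""
    decision = decide(data_class, target_region)
    telemetry.append({"data_class": data_class, "region": target_region, **decision})
    return decision["allowed"]
```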

7) Operating model: who makes decisions about sovereignty?

When decisions stay in inboxes, sovereignty breaks. You need clear ownership and quick forums.

Roles (clear accountability is better than perfect org charts)

- Legal and Compliance: interprets obligations and sets the “must” boundaries.

- Risk: sets scenario posture and risk tolerance (what happens if a provider becomes unavailable in a given region?).

- Security: owns controls such as keys, access, monitoring, and incident response.

- Technology (CTO/CDO): designs the platform, enforces policy, and keeps delivery fast.

- Product: owns trade-offs between user impact and market access.

Forums for making decisions that work

- AI Use Case Council (biweekly): approves new AI use cases by jurisdiction class rather than granting one-off exceptions.

- Sovereignty Architecture Review (monthly): reviews reference patterns and exceptions, and publishes reusable blueprints.

- Risk Scenario Committee (quarterly): runs geopolitical and regulatory scenarios and updates posture and contingency plans.

A practical playbook for approving AI use cases across jurisdictions

- Classify the data and the criticality of the decision.

- Choose a level of sovereignty (standard, regulated, restricted, or national-critical).

- Map to an accepted reference pattern, such as hub-spoke, federated, or BYOC.

- Run the standard eval and the red-team suite, and save the evidence locally.

- Approve deployment through policy gates, not manual checklists.
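The playbook above can be encoded as an automated gate. This sketch assumes a hypothetical mapping from data class and decision criticality to the four sovereignty tiers, and from tiers to approved reference patterns; a real deployment would load these from the policy repository and read evidence from the evaluation pipeline.

```python
from enum import IntEnum


class Tier(IntEnum):
    STANDARD = 0
    REGULATED = 1
    RESTRICTED = 2
    NATIONAL_CRITICAL = 3


# Hypothetical mapping from tier to approved reference patterns.
APPROVED_PATTERNS = {
    Tier.STANDARD: {"hub-spoke", "federated", "byoc"},
    Tier.REGULATED: {"hub-spoke", "federated", "byoc"},
    Tier.RESTRICTED: {"federated", "byoc"},
    Tier.NATIONAL_CRITICAL: {"byoc"},
}
SENSITIVE_CLASSES = {"pii", "health", "financial"}  # illustrative


def choose_tier(data_class: str, criticality: str, national_critical: bool = False) -> Tier:
    """Steps 1-2: classify data and decision criticality, then pick a tier."""
    if national_critical:
        return Tier.NATIONAL_CRITICAL
    sensitive = data_class in SENSITIVE_CLASSES
    high = criticality == "high"
    if sensitive and high:
        return Tier.RESTRICTED
    if sensitive or high:
        return Tier.REGULATED
    return Tier.STANDARD


def approve(data_class: str, criticality: str, pattern: str, evidence: dict,
            national_critical: bool = False) -> tuple:
    """Steps 3-5: check pattern and evidence; return (approved, reasons)."""
    tier = choose_tier(data_class, criticality, national_critical)
    reasons = []
    if pattern not in APPROVED_PATTERNS[tier]:
        reasons.append(f"pattern {pattern!r} not approved for {tier.name}")
    for check in ("eval_passed", "red_team_passed"):
        if not evidence.get(check):
            reasons.append(f"missing {check}")
    if tier >= Tier.REGULATED and not evidence.get("stored_locally"):
        reasons.append("evidence not stored in-region")
    return (not reasons, reasons)
```

Returning the list of reasons, not just a yes or no, gives the AI Use Case Council something reviewable when a deployment is blocked.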

8) Vendor and ecosystem strategy in a sovereignty-aware world

Sovereignty is the point at which vendor contracts turn into architecture.

Questions to ask any platform (cloud and AI)

Control planes and operations

- Who runs the environment (rules for staff residency, governance model, subcontractors)?

- Is it physically or logically separate from other areas or environments? (This is a main point of some sovereign cloud services.)

Data paths

- Where do logs, prompts, outputs, and embeddings go?

- What telemetry goes outside of the jurisdiction by default?

Law enforcement and compelled-access posture

- What is the provider’s written procedure for dealing with government requests?

- Do they support customer-held keys, and do they notify customers when legally permitted?

- How do they settle disputes between different jurisdictions, like claims for extraterritorial access?

Portability

- Can models, policies, and logs move between regions and providers without a full rewrite?

- Can your evaluation pipelines and policy definitions move too, not just your containers?

Avoiding new lock-in while still being able to innovate

You don’t avoid lock-in by saying no to hyperscalers; you avoid lock-in by picking the right abstraction layers:

- Make sure that identity, policy, metadata, and evaluation are the same in all environments.

- Keep the “hard-to-migrate” state to a minimum (closed evaluation telemetry, opaque prompt retention, and proprietary vector indexes).

- Put model providers behind a governance envelope and treat them as interchangeable: consistent model cards, consistent eval gates, and consistent logging.
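A governance envelope can be as thin as a wrapper that every provider passes through. In this sketch, `ModelProvider` is a hypothetical structural interface and `EchoProvider` stands in for a real vendor SDK; the wrapper applies the same eval gate and the same log schema regardless of which provider sits behind it.

```python
from dataclasses import dataclass, field
from typing import Protocol


class ModelProvider(Protocol):
    """Hypothetical interface any provider adapter must satisfy."""
    name: str

    def generate(self, prompt: str) -> str: ...


@dataclass
class GovernedModel:
    """Wraps any provider with the same eval gate and logging."""
    provider: ModelProvider
    region: str
    eval_passed: bool  # set by your own evaluation pipeline
    log: list = field(default_factory=list)

    def generate(self, prompt: str) -> str:
        if not self.eval_passed:
            raise PermissionError(f"{self.provider.name} has not passed the eval gate")
        output = self.provider.generate(prompt)
        # Same log schema for every provider, kept in the execution region.
        self.log.append({
            "provider": self.provider.name,
            "region": self.region,
            "prompt_chars": len(prompt),
            "output_chars": len(output),
        })
        return output


@dataclass
class EchoProvider:
    """Stand-in provider; a real adapter would call a vendor SDK."""
    name: str = "echo"

    def generate(self, prompt: str) -> str:
        return prompt.upper()
```

Logging sizes rather than raw prompts is a deliberate choice here: it keeps telemetry comparable across providers without creating a new store of sensitive text that must itself be localized.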

Multi-cloud (a reality check for AI)

When every team picks a different stack, multi-cloud doesn’t work. It works only when you are multi-cloud at the governance and policy level. AI amplifies the chaos. You want one enterprise AI platform with multiple execution zones, not “three clouds and 14 exceptions.”

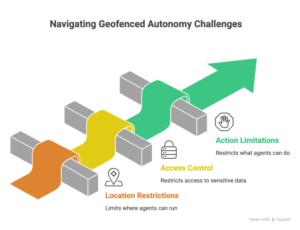

9) Sovereignty and agentic/autonomous systems: “geofenced autonomy”

Agents change sovereignty because they don’t just look at data; they do things with it.

Sovereignty limits affect agents in three ways:

- Where the agent runs (where inference and tool execution happen)

- What the agent can get to (sensitive datasets and systems of record)

- What the agent can do (give refunds, change prices, start security actions)

A pattern that works: jurisdiction-aware, policy-aware agents

Make jurisdiction a first-class input:

- The agent runtime gets a policy context that includes the risk tier, business unit, user role, data class, and jurisdiction.

- Tool access is granted dynamically from that context (least privilege).

- Policy determines where to log and keep evidence.
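Here is a minimal sketch of a policy context driving least-privilege tool grants and evidence placement. The tool catalogue, role names, tier ordering, and evidence path format are hypothetical; the idea is that the agent runtime never holds a static tool list, but asks for the tools this context permits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyContext:
    jurisdiction: str
    risk_tier: str      # "standard", "regulated", or "restricted" (assumed)
    business_unit: str
    user_role: str
    data_class: str     # carried so decisions can be logged with full context


# Hypothetical catalogue: where each tool may run, who may use it, and the
# highest risk tier in which it is still permitted.
TOOLS = {
    "search_kb":     {"jurisdictions": {"eu", "us"}, "roles": {"agent", "analyst"}, "max_tier": "restricted"},
    "issue_refund":  {"jurisdictions": {"eu", "us"}, "roles": {"agent"},            "max_tier": "regulated"},
    "update_prices": {"jurisdictions": {"us"},       "roles": {"pricing_lead"},     "max_tier": "standard"},
}
TIER_ORDER = ["standard", "regulated", "restricted"]


def granted_tools(ctx: PolicyContext) -> set:
    """Least privilege: return only the tools this context may call."""
    tier = TIER_ORDER.index(ctx.risk_tier)
    return {
        name for name, rule in TOOLS.items()
        if ctx.jurisdiction in rule["jurisdictions"]
        and ctx.user_role in rule["roles"]
        and tier <= TIER_ORDER.index(rule["max_tier"])
    }


def evidence_location(ctx: PolicyContext) -> str:
    # Evidence is written in the jurisdiction where the agent acted.
    return f"evidence/{ctx.jurisdiction}/{ctx.business_unit}"
```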

Define “geofenced autonomy levels,” for example:

- Level 0: Assist only (suggestions, no actions)

- Level 1: Draft-and-route (makes artifacts; needs human approval)

- Level 2: Bounded execution (can only do things within strict limits, like giving small refunds)

- Level 3: Conditional autonomy (does things, but only when policy gates are open and monitoring is good)

The sovereign design principle: autonomy should degrade gracefully. If a jurisdiction blocks a dependency, your agent should fall back to a lower autonomy level instead of failing completely.
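Graceful degradation can be expressed as choosing the highest autonomy level whose dependencies are still available, capped by what the jurisdiction allows. The level names follow the list above; the dependency sets per level are assumptions for illustration.

```python
from enum import IntEnum
from typing import Optional


class Autonomy(IntEnum):
    ASSIST = 0           # suggestions only
    DRAFT_AND_ROUTE = 1  # produces artifacts; a human approves
    BOUNDED = 2          # acts within strict limits
    CONDITIONAL = 3      # acts when policy gates are open and monitoring is healthy


# Hypothetical: dependencies each level needs in order to operate.
REQUIRES = {
    Autonomy.ASSIST: {"inference"},
    Autonomy.DRAFT_AND_ROUTE: {"inference", "workflow"},
    Autonomy.BOUNDED: {"inference", "workflow", "payments_api"},
    Autonomy.CONDITIONAL: {"inference", "workflow", "payments_api", "monitoring"},
}


def effective_level(ceiling: Autonomy, available: set) -> Optional[Autonomy]:
    """Highest level at or below the jurisdiction's ceiling whose dependencies are up."""
    for level in sorted(Autonomy, reverse=True):
        if level <= ceiling and REQUIRES[level] <= available:
            return level
    return None  # not even assist mode is possible: fail closed
```

If a jurisdiction blocks the payments integration, an agent running at `CONDITIONAL` drops to `DRAFT_AND_ROUTE` and keeps producing work for human approval instead of going dark.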

10) Risk, resilience, and scenario planning: ready for the board, not driven by fear

Sovereignty isn’t just compliance; it’s resilience in a global system that is fragmenting.

Things you should actively plan for:

- Data localization rules get stricter (new categories must stay local).

- Export controls restrict access to advanced chips and models, or to cross-border support.

- Provider access bans (a cloud or AI service becomes unavailable in a given region).

- Cross-border transfer methods change (the legal basis changes, and audits become more common).

A board-ready posture lets you answer:

- What breaks first?

- What keeps going?

- How quickly can we change things?

- What do we have as proof?

A useful plan for resilience:

- Design for regional independence for “restricted” workloads like local inference, local logs, local identity integration, and local incident response.

- Provider exit drills: test restoring important AI services in a different zone (or provider) with clear RTO/RPO.

- AI dependency mapping: models, vector stores, evaluation services, secrets and key stores, and observability pipelines.

And don’t bury this in architecture decks. Turn it into three to five business-impacting scenarios and test them quarterly.
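A dependency map answers board questions such as “What breaks first?” once you can query it. This sketch assumes a hypothetical map of AI services to their direct dependencies and walks it in reverse (breadth-first) to find everything affected, directly or transitively, when one dependency becomes unavailable in a region.

```python
from collections import deque

# Hypothetical dependency map: service -> its direct dependencies.
DEPENDS_ON = {
    "credit_assistant": ["llm_provider_a", "vector_store", "eval_service"],
    "support_agent": ["llm_provider_b", "vector_store", "key_store"],
    "vector_store": ["key_store"],
    "eval_service": ["llm_provider_a"],
    "observability": [],
}


def impacted_by(failed: str) -> set:
    """All services that directly or transitively depend on a failed dependency."""
    # Invert the graph: dependency -> services that use it.
    users = {}
    for service, deps in DEPENDS_ON.items():
        for dep in deps:
            users.setdefault(dep, []).append(service)
    impacted, queue = set(), deque([failed])
    while queue:
        for user in users.get(queue.popleft(), []):
            if user not in impacted:
                impacted.add(user)
                queue.append(user)
    return impacted
```

The result of a query like `impacted_by("key_store")` is a natural scope for a provider exit drill: these are the services whose RTO/RPO you need to test.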

11) Leadership story: talking about sovereignty without making people afraid

Executives don’t want to hear about regions; they want a plan that keeps growth going.

Frame sovereignty as something that makes things possible:

- Trust: showing that you have control lowers the risk of reputational and regulatory problems.

- Market access: sovereign capabilities open up regulated markets and public-sector opportunities.

- Resilience: fewer geopolitical single points of failure.

- Speed: controls based on policy cut down on rework and “compliance theater.”

A story that works:

“We are building configurable sovereignty, so we can enforce local control where required while keeping a single enterprise platform for innovation.” This reduces compliance risk and makes operations run more smoothly.

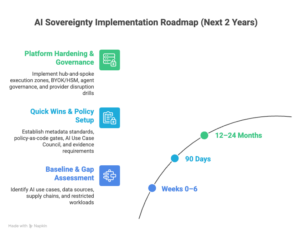

12) The next two years: a practical roadmap

Baseline and gap assessment (weeks 0–6)

- Inventory AI use cases, the data classes they touch, and the jurisdictions they operate in.

- Map AI supply chains: data sources, model providers, inference endpoints, logs, and tool integrations.

- Identify “restricted” workloads: those with high regulatory exposure, safety-critical decisions, or strategic data.

90-day moves (fast, high risk reduction)

- Set up standards for enterprise classification and metadata for AI inputs, outputs, and training data.

- Set up policy-as-code gates for tool permissions, locality, and retention (begin with your top five use cases).

- Establish the AI Use Case Council and the sovereignty reference patterns.

- Require evidence by default: logging, model cards, and evaluation reports must be kept in the region for regulated workloads.

Moves that take 12 to 24 months (hardening the platform and operating model)

- Implement hub-and-spoke or federated execution zones aligned to the sovereignty tiers.

- Mature key control (BYOK/HSM patterns) and adopt confidential computing for sensitive workloads where “data-in-use” exposure matters.

- Create agent governance by setting geofenced levels of autonomy, tool policies, runtime enforcement, and constant monitoring.

- Run provider disruption drills quarterly and report the results to the board.

Things to avoid (they look good but don’t work in real life)

- “One sovereign stack for each country”

Why it feels right: maximum local control.

Why it doesn’t work: it slows iteration, multiplies security gaps, and guarantees inconsistent audit evidence. You become slower and riskier.

- “Residency-only compliance”

Why it feels right: easy to check off the procurement box.

Why it doesn’t work: residency doesn’t address operator access, jurisdictional compulsion risk, or model lifecycle governance. It creates false confidence until an incident forces uncomfortable questions.

- “Agents everywhere, rules later”

Why it feels right: quick wins in productivity.

Why it doesn’t work: agents turn data access into action. Without jurisdiction-aware policy gating and evidence capture, you create unauditable automation that regulators and boards won’t tolerate.

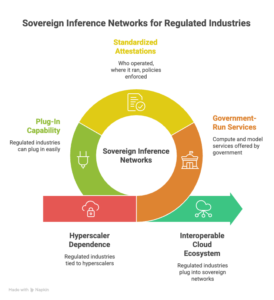

Sovereign inference networks are a trend to watch over the next three to five years.

Sovereign inference networks could be a plausible next step: pools of compute and model services operated nationally or regionally that offer standardized attestations (who operated it, where it ran, what policies were enforced). This would let regulated industries “plug in” without committing to a single hyperscaler. It sounds unlikely today because the economics and interoperability are hard. Early signs to look for include more sovereign cloud footprints, standardized confidential-compute attestations, and policy-driven workload portability becoming a common procurement requirement. The rise of dedicated sovereign cloud structures in Europe is a significant early sign.

One thing that could slow progress is the sovereignty tax on talent and complexity.

The biggest problem is not technology; it’s operational complexity. Sovereign-by-design needs strong platform engineering, strict metadata practices, and cross-functional governance. Many businesses underestimate how much talent and process maturity this requires, so they either slide into “compliance theater” or stall delivery. The answer is to make sovereignty a standard product (platform capability plus operating model) instead of a list of exceptions.

What leaders need to stop doing and what they need to start doing now

Stop thinking of sovereignty as a late-stage legal review or a clause in a contract. Within the next 12 months, you should commit to building configurable sovereignty. This means using classification-driven controls, enforcing policy-as-code, creating regional execution zones for regulated workloads, and having a board-ready resilience posture that assumes churn.

Don’t optimize, re-architect:

- Re-architect control planes (identity, keys, policy, evidence) so you can localize without fragmenting.

- Change the way AI lifecycle governance works so that model behavior and auditability are the same in all places.

- Rebuild agent runtimes so that they are policy-aware, geofenced, and able to degrade gracefully.

Within 90 days, publish your Sovereign AI blueprint. This should include sovereignty tiers, approved reference architectures, policy gates, and decision forums that keep innovation going while keeping control clear.
