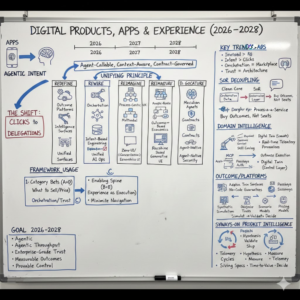

Digital Products, Apps & Experience (2026–2028): Part 1

Digital products are entering a structural reset, not a feature cycle.

For 15 years, “digital” meant apps, screens, workflows, and seats. Value was delivered through navigation, trained users, and UI-driven process completion. That era is ending because the primary consumer of software is no longer a human—it’s an agent acting on human intent, inside governed boundaries, across systems. When the unit of interaction shifts from clicks to delegations, everything changes: product surfaces, architecture, data semantics, security, pricing, and even what “adoption” means.

Why this is an inflection point now (and not later)

Over the last 24–36 months, four things have become true at the same time:

- Reasoning has become a default capability (even if imperfect). The question is no longer “can the model answer?” but “can the enterprise safely let it act?”

- Interface gravity is moving upstream: users increasingly start in chat, voice, meeting notes, or collaboration streams—and expect the product to execute, not just display.

- Differentiation is shifting from model choice to proprietary context: domain meaning, governed process, and trusted data are the competitive moat.

- Cost has become variable and operational: tokens, inference, orchestration, and monitoring are turning product COGS into a managed system—pushing CFOs into product architecture decisions.

Where enterprises are misreading the moment

Most organizations are still responding with “AI features” bolted onto 2018-era product assumptions. The most common misreads:

- Treating agents as UI assistants instead of a new execution layer. This leads to copilots that demo well and underperform in production.

- Over-indexing on model capability while under-investing in ontology, data contracts, and context supply chains—then blaming “hallucinations” for what is actually meaning drift.

- Assuming governance is a policy problem, not an architectural one. In agentic systems, trust is engineered through contracts, permissions, provenance, and runtime control—policy alone doesn’t scale.

- Keeping seat-based product thinking while value migrates to outcomes and digital labor unit economics. Procurement will follow economics.

What the 2026–2028 winners will do differently

They will redesign digital products around five moves, the pillars labeled A through E below, with one unifying principle:

Make the product agent-callable, context-aware, and contract-governed—so outcomes can be delivered with minimal human navigation.

- A — Redefine What You Sell: product categories shift from apps to outcome platforms, agentic services, and intelligence surfaces.

- B — Rewire How You Design & Build: orchestration meshes, agent-ready data, intent-based engineering, and unified AI ops become core platforms.

- C — Reimagine the Experience: UX becomes process-centric, multimodal, self-explaining, and designed for “human judgment at the right layer.”

- D — Recapture How You Compete & Price: discovery, distribution, and pricing move to machine-readable markets and outcome-based economics.

- E — Govern What You Deploy: contracts, guardian agents, provenance, and agent-native security become non-negotiable infrastructure.

How to use this framework

Treat the Final 24 as an integrated strategy stack, not a menu:

- Pick 2–3 “Category Bets” (A + D): what you will sell and how you will price/distribute it.

- Build the enabling platform spine (B + E): orchestration, data meaning, runtime control, and operational discipline.

- Redesign experience as execution (C): minimize navigation, maximize guided action, and make judgment auditable.

The goal for 2026–2028 is not “AI adoption.” It is agentic throughput with enterprise-grade trust: faster cycle times, lower friction, measurable outcomes, and provable control.

A — Redefine What You Sell

Trend #1: The Collapse of the App — The Agentic Web

From 2026 through 2028, the most important “channel shift” in digital products won’t be mobile → web or web → super-app. It will be human navigation → agent delegation. Users will increasingly express intent in a conversational or collaboration layer—and expect the product to execute outcomes, not guide clicks. Products that don’t expose agent-callable, governed capability surfaces will be progressively bypassed by orchestration layers that can complete the job elsewhere.

The winners will treat the “product surface” as two-tier: human experience for judgment and agent experience for execution—with strong contracts, telemetry, and control.

The shift is from designing screens and workflows to designing callable outcomes. In the app era, you sold UI, modules, and navigation. In the agentic era, you sell reliable execution: discrete intents that can be invoked, composed, verified, and audited.

Three forces converge here:

1) Interaction moves upstream—intent becomes the entry point

Work starts in chat, voice, email, meeting notes, and collaboration streams. The first ask is no longer “where is that feature?” but “handle this.” The product must respond with actions, not instructions.

2) Orchestration becomes the new marketplace

The system that can route intent across tools—and complete the first 3–5 steps reliably—becomes the default interface. If your product can’t be called as a skill, it becomes a backend system at best.

3) Trust becomes architectural, not UX

Delegation requires permissioning, policy checks, reversibility, audit trails, and escalation design. “Great responses” are irrelevant if the system can’t prove safe execution under pressure.

Business impact

- Lower friction, higher throughput: fewer clicks, fewer tickets, less training; routine work becomes delegated execution.

- Faster change: capabilities ship as “skills” (intents) without reworking end-to-end UI flows each time.

- New risk surface: prompt injection, tool misuse, and agent hijacking shift security from the perimeter to runtime control planes.

- New differentiation: domain truth + execution reliability (SLOs, auditability, reversibility) becomes the moat—not prettier screens.

CXO CTAs

- Define your “top 25 intents”: the short list of outcomes customers and employees actually want (approve, resolve, reconcile, quote, renew). Make them the spine of the product roadmap.

- Build agent-callable capability surfaces: machine-readable skill definitions with stable inputs/outputs, constraints, and error semantics—treat them like contracts, not endpoints.

- Separate interaction from execution: one governed execution layer invoked by UI, chat, APIs, and other agents—same capability, many entry points.

- Engineer delegation controls: permission manifests, policy gates, reversibility horizons, confidence thresholds, and escalation to named authority for consequential actions.

- Instrument outcome telemetry: completion, reversals, escalations, time-to-value, and agent error rates—manage them as product health metrics.
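
The capability-surface and delegation-control items above can be sketched in a few lines. This is a minimal illustration, not a reference design: the `SkillContract` fields, the `gate` function, the `approve_invoice` skill, and every threshold are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    ESCALATE = "escalate"
    DENY = "deny"


@dataclass(frozen=True)
class SkillContract:
    """Hypothetical machine-readable definition of one agent-callable intent."""
    name: str
    required_inputs: tuple      # stable input names the caller must supply
    required_permission: str    # entry the caller's permission manifest must hold
    min_confidence: float       # below this, a human decides
    max_auto_amount: float      # above this, the action counts as consequential
    reversible_hours: int       # reversibility horizon
    escalate_to: str            # named authority for consequential actions


def gate(contract, inputs, permissions, confidence):
    """Return (Decision, reason) for one proposed invocation of a skill."""
    missing = [key for key in contract.required_inputs if key not in inputs]
    if missing:
        return Decision.DENY, "missing inputs: " + ", ".join(missing)
    if contract.required_permission not in permissions:
        return Decision.DENY, "caller lacks " + contract.required_permission
    if confidence < contract.min_confidence:
        return Decision.ESCALATE, "low confidence, route to " + contract.escalate_to
    if inputs.get("amount", 0) > contract.max_auto_amount:
        return Decision.ESCALATE, "consequential amount, route to " + contract.escalate_to
    return Decision.EXECUTE, f"auto-approved, reversible for {contract.reversible_hours}h"


# Illustrative contract; all values are assumptions made for this sketch.
APPROVE_INVOICE = SkillContract(
    name="approve_invoice",
    required_inputs=("invoice_id", "amount"),
    required_permission="finance.approve",
    min_confidence=0.9,
    max_auto_amount=5000.0,
    reversible_hours=24,
    escalate_to="finance-controller",
)

decision, reason = gate(
    APPROVE_INVOICE,
    {"invoice_id": "INV-1", "amount": 1200.0},
    {"finance.approve"},
    confidence=0.97,
)
print(decision.value, "-", reason)
```

Because the gate sits in the execution layer, a UI button, a chat request, and another agent would all pass through the same checks, which is the point of separating interaction from execution.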

Trend #2: SoR Decoupling & Process-as-a-Service

From 2026 through 2028, Systems of Record (SoR) won’t disappear—but they will stop being where work happens. ERP/HCM/CRM platforms increasingly become governed compliance ledgers with a “clean core” posture, while workflow, intelligence, and experience shift upward into an orchestration layer. (SAP)

In parallel, repeatable professional and operational work gets packaged as productized, supervised workflows with measurable service levels. Enterprises will buy outcomes more than dashboards or seats. (McKinsey & Company)

The shift is from “buying systems” to buying measurable processes delivered as services. SoR becomes the place to record and govern. Orchestration becomes the place to compose and execute.

Three forces converge here:

1) “Clean core” pushes innovation outside the ledger

SoR vendors and enterprise architects are explicitly steering toward keeping the core stable and pushing extensions/workflows outside it—because heavy customization creates upgrade drag and technical debt. (SAP)

2) Decoupling layers become the new enterprise integration tier

Enterprises are building (or buying) decoupling tiers that aggregate SoR data and services for digital experiences. Gartner’s “digital integration hub” framing captures the pattern: protect SoRs from direct experience load while enabling fast front-end and process iteration. (AppyThings API Library)

3) Services are being re-priced around outcomes and performance

Services markets are moving toward performance and shared-risk models, and software business models are shifting toward outcome-based pricing—especially in AI-enabled delivery where value is measurable per unit of work. (Forrester)

Business impact

- Lower cost of change: move workflow variation and policy logic out of SoR customization cycles; reduce upgrade friction. (SAP)

- Faster operating model evolution: process changes ship in orchestration layers, not in ERP projects. (AppyThings API Library)

- Clearer control & audit: SoR enforces record integrity; orchestration enforces runtime controls, escalation, and traceability. (AppyThings API Library)

- Outcome monetization: product + services converge into “process offers” priced by delivered results, not consumption proxies. (McKinsey & Company)

CXO CTAs

- Declare the boundary: formally define what must live in SoR (record integrity, compliance controls) vs orchestration (workflow, intelligence, experience). (SAP)

- Stand up a “process product catalog”: pick 10–20 high-cost repeatable processes and define them as outcome services (inputs, guardrails, escalation, definition of done). (Forrester)

- Contract on SLOs for work: time-to-complete, exception rate, reversals, compliance breaches—make service levels explicit and measurable. (Forrester)

- Re-platform telemetry for outcomes: instrument end-to-end process completion and quality, not just system uptime. (McKinsey & Company)

- Re-negotiate vendor assumptions: insist on portability of workflows/logic and clean separation between ledger and experience to protect your change velocity. (SAP)
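
To make "contract on SLOs for work" concrete, here is a small sketch of how a process offer might be checked against its service levels. The `ProcessRun` and `ProcessSLO` shapes, the metric names, and the thresholds are assumptions for illustration only.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class ProcessRun:
    minutes_to_complete: float
    had_exception: bool
    was_reversed: bool


@dataclass(frozen=True)
class ProcessSLO:
    max_median_minutes: float
    max_exception_rate: float
    max_reversal_rate: float


def evaluate_slo(runs, slo):
    """Return {metric: (observed_value, slo_met)} for a non-empty list of runs."""
    if not runs:
        raise ValueError("at least one run is required")
    total = len(runs)
    observed_median = median(r.minutes_to_complete for r in runs)
    exception_rate = sum(r.had_exception for r in runs) / total
    reversal_rate = sum(r.was_reversed for r in runs) / total
    return {
        "median_minutes": (observed_median, observed_median <= slo.max_median_minutes),
        "exception_rate": (exception_rate, exception_rate <= slo.max_exception_rate),
        "reversal_rate": (reversal_rate, reversal_rate <= slo.max_reversal_rate),
    }


# Illustrative data: four runs of a hypothetical "invoice reconciliation" process.
runs = [
    ProcessRun(10, False, False),
    ProcessRun(20, True, False),
    ProcessRun(30, False, False),
    ProcessRun(40, False, False),
]
slo = ProcessSLO(max_median_minutes=30, max_exception_rate=0.2, max_reversal_rate=0.05)

for metric, (value, met) in evaluate_slo(runs, slo).items():
    print(f"{metric}: {value} ({'met' if met else 'breached'})")
```

The same report can feed both the vendor contract and the internal telemetry dashboard, so "definition of done" and "service level" are computed from one source.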

Trend #3: Domain Intelligence & Agent-Callable Product Design

From 2026 through 2028, the defensible moat in digital products won’t be “which model you use.” As major analysts and strategy firms now state plainly, advantage shifts toward domain-specific intelligence and proprietary context—because generic models often underperform on specialized tasks, compliance, and industry nuance. (Gartner)

In parallel, products must be redesigned for agent consumption: not just human-friendly UX, but machine-invokable capability surfaces—stable “skills/intents” with constraints, predictable semantics, and governance. Open standards like the Model Context Protocol (MCP) are accelerating this by normalizing how agents connect to tools and enterprise context. (Anthropic)

The winners will productize domain truth into callable execution—so agents can safely transact, not merely retrieve.

The shift is from “feature differentiation” to “domain truth + callable outcomes.” In agent-mediated markets, being invisible to agents increasingly means being invisible to demand.

Three forces converge here:

1) Models commoditize; domain-specific intelligence rises

Gartner’s 2026 trend framing highlights domain-specific language models as a path to higher accuracy, reliability, and compliance for specialized enterprise work—and positions context as a critical differentiator for successful agent deployments. (Gartner)

2) Proprietary context becomes the strategic asset

McKinsey’s software frontier view is blunt: competitive advantage shifts from features to proprietary data access and control—the ingredient that drives better outcomes and supports premium economics. (McKinsey & Company)

3) Standardized “callability” turns products into agent-invokable capabilities

MCP’s premise—secure, two-way connections between AI tools and external data/tools—pushes the market toward capability surfaces that agents can discover and invoke consistently, across ecosystems. (Anthropic)

Business impact

- Differentiation: “domain truth” (rules, constraints, semantics, quality) becomes more valuable than UI breadth. (Gartner)

- Distribution: agent-mediated discovery favors products with reliable, machine-invokable skills and clear constraints. (Anthropic)

- Risk reduction: domain constraints + governed execution reduce costly reversals, exceptions, and compliance failures. (Gartner)

- Faster evolution: shipping new value as new skills/intents can outpace full UI/workflow redesign cycles.

CXO CTAs

- Codify “domain truth” as a product contract: explicit ontology, policies, and constraints—testable and versioned, not tribal knowledge. (Gartner)

- Publish an agent-callable capability map: top intents, required inputs, permitted actions, constraints, and error semantics—treated as stable interfaces. (Model Context Protocol)

- Invest in context supply chains: provenance of sources, freshness, semantic alignment, and cost controls—managed with the rigor of latency and security. (Gartner)

- Engineer safe execution as default: permission gates, reversibility horizons, escalation paths, and auditability embedded per skill. (Model Context Protocol)

- Defend the moat you can own: proprietary telemetry + domain datasets + feedback loops that compound model performance and outcome reliability over time. (McKinsey & Company)
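
One way to read "domain truth as a product contract" is as a versioned rule set that any agent-proposed action must pass before execution. The sketch below assumes a hypothetical quoting domain; the rule IDs, limits, and field names are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str
    check: Callable[[dict], bool]  # True when the proposed action satisfies the rule


@dataclass(frozen=True)
class DomainContract:
    version: str
    rules: tuple

    def violations(self, action: dict) -> list:
        """Return the IDs of every rule the proposed action breaks."""
        return [rule.rule_id for rule in self.rules if not rule.check(action)]


# Illustrative rules; the real constraints would come from the business domain.
QUOTE_CONTRACT = DomainContract(
    version="1.2.0",
    rules=(
        Rule("Q-001", "Discount may not exceed 20%", lambda a: a["discount_pct"] <= 20),
        Rule("Q-002", "Currency must be supported", lambda a: a["currency"] in {"USD", "EUR"}),
        Rule("Q-003", "Quantity must be positive", lambda a: a["quantity"] > 0),
    ),
)

proposed = {"discount_pct": 25, "currency": "USD", "quantity": 3}
print(QUOTE_CONTRACT.version, QUOTE_CONTRACT.violations(proposed))
```

Keeping the rules in code, with a version string, means they can be tested in CI and cited in an audit trail instead of living in people's heads.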

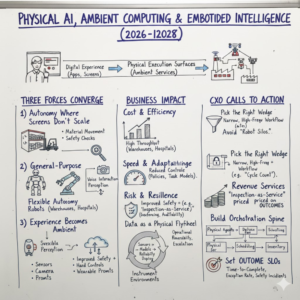

Trend #4: Physical AI, Ambient Computing & Embodied Intelligence

From 2026 through 2028, AI meaningfully exits the screen. What looks like “robots” on the surface is actually a deeper product shift: software becomes operational in the physical world—sensing, deciding, and acting through machines, spaces, and wearables. Gartner now explicitly calls out Physical AI as a strategic trend powering robots, drones, and smart equipment for measurable operational impact. (Gartner)

The winners won’t treat this as a robotics initiative. They’ll treat it as a new product category: ambient, embodied services with outcome guarantees, safety constraints, and continuously improving performance.

The shift is from “digital experience” to “physical execution surfaces.” Physical AI is the embodiment of AI into systems that perceive the environment, reason under constraints, and perform actions in the real world—factories, warehouses, hospitals, retail floors, and field operations. NVIDIA’s framing positions this as a breakthrough moment for robots and autonomous systems operating in real environments. (NVIDIA Blog)

Three forces converge here:

1) Operations demand autonomy where screens don’t scale

The biggest value pools are in environments where the UI is the wrong interface: material movement, inspection, safety checks, maintenance, and frontline execution. The World Economic Forum describes “physical AI” as emerging from hardware + AI + vision breakthroughs, enabling robotic systems capable of perception, reasoning, and autonomous action. (World Economic Forum)

2) General-purpose robots shift from demos to deployment programs

McKinsey’s industrial research points to embodied AI enabling robots to adapt behavior in real time from sensor input—moving beyond fixed automation toward more flexible autonomy across settings like warehouses, factories, hospitals, and fields. (McKinsey & Company) Recent corporate signals also show timelines becoming concrete (e.g., announced humanoid deployments in manufacturing environments later this decade). (Reuters)

3) The “experience surface” becomes ambient and distributed

Experience isn’t only an app. It’s the space: camera-based perception, voice interaction, wearable prompts, and autonomous action coordinated through an orchestration layer. WEF’s autonomous systems view frames this as the next frontier—AI plus sensors and connectivity performing complex tasks in unstructured environments, with material implications for productivity and safety. (World Economic Forum)

Business impact

- Cost & efficiency: step-changes in throughput for repetitive physical work (move/inspect/verify/maintain), especially where labor is scarce or turnover is high. (World Economic Forum)

- Speed & adaptability: operations can be reconfigured by updating policies, constraints, and task models—less retooling, more software-driven change. (McKinsey & Company)

- Risk & resilience: improved safety outcomes for high-risk tasks, but new failure modes require hard controls (geofencing, kill-switches, auditability, and incident playbooks). (World Economic Forum)

- Revenue & differentiation: new categories of “ambient services” emerge (inspection-as-a-service, inventory accuracy-as-a-service, safety monitoring-as-a-service) priced on outcomes rather than devices or seats.

CXO CTAs

- Pick the right wedge: start with a narrow, high-frequency physical workflow (inspection, cycle count, parts movement, safety rounds) where success is measurable and constraints are clear. (McKinsey & Company)

- Design “safety + supervision” as product architecture: define operational envelopes, reversibility horizons, escalation rules, and human-on-the-loop control surfaces before scaling autonomy. (World Economic Forum)

- Treat data as a physical flywheel: instrument environments (sensors, vision, logs) so every deployment improves models, task libraries, and reliability over time. (McKinsey & Company)

- Build the orchestration spine: physical agents must be coordinated with digital systems of record, scheduling, inventory, and compliance—avoid “robot silos.” (World Economic Forum)

- Set outcome SLOs, not pilot goals: time-to-complete, exception rate, safety incidents, rework, and downtime impact—manage like a service, not a gadget rollout. (McKinsey & Company)

Trend #5: Generative, Adaptive & Zero-UI Product Surfaces

From 2026 through 2028, the “wireframe-first” era breaks. Interfaces stop being mostly static artifacts and become generated, adaptive surfaces assembled in real time from role, intent, policy, and live process state. NN/g’s definition of generative UI captures the core move: AI dynamically generates interfaces to fit the user’s needs and context. (Nielsen Norman Group)

In parallel, Zero-UI becomes the default for routine actions: if no human judgment is required, the “best interface” is increasingly no interface—execution happens in the background with proof, reversibility, and auditability. (ptgmedia.pearsoncmg.com)

The winners will treat UI not as a screen system, but as an outcome surface: generated when needed, minimized when not, and always governed.

The shift is from “designing screens” to “designing outcome surfaces.” Static UI libraries don’t scale to agentic execution, personalization, and rapid process change. Dynamic UI does—if it’s constrained by strong product semantics and governance.

Three forces converge here:

1) Generative UI turns UX into a runtime system

Generative UI is moving from concept to practice: AI can generate interactive UI elements on the fly to support a user’s intent in the moment—shifting UX from static layouts to dynamic, context-specific experiences. (Nielsen Norman Group)

2) “Zero-UI” expands as agents execute routine work

When the work is repeatable and low-risk, the most usable flow is often no flow: the system executes, summarizes what happened, and asks for approval only when thresholds are crossed—aligning with long-standing design thinking that screens should be reduced when they don’t add value. (ptgmedia.pearsoncmg.com)

3) Trust and authentication become conversion architecture

As interaction shifts to intent and delegation, frictionless high-assurance auth becomes part of experience design (not a security afterthought). Passkeys—phishing-resistant, cryptographic credentials—are a concrete example of security improving UX while reducing attack surface. (FIDO Alliance)

Business impact

- Higher completion, lower cognitive load: adaptive surfaces reduce step count and training overhead by presenting only what matters now. (Nielsen Norman Group)

- Faster product evolution: teams ship new outcomes without redesigning every workflow path; UI becomes assembled from stable “intent + policy + state.” (arXiv)

- Lower support cost: fewer “where do I click” issues; more “the system did it” with transparent reasoning and receipts. (ptgmedia.pearsoncmg.com)

- Reduced security exposure: modern auth patterns like passkeys cut phishing risk while improving sign-in success and speed. (FIDO Alliance)

CXO CTAs

- Define “Zero-UI eligible” work: identify the top routine actions where no human judgment is required; automate them with receipts, reversibility, and audit trails. (ptgmedia.pearsoncmg.com)

- Treat UX as a policy-constrained runtime: mandate that generated UI is derived from governed semantics (roles, permissions, process state), not ad-hoc prompting. (arXiv)

- Standardize an “Outcome Surface” pattern: every experience should answer: what changed, why, who approved, can it be undone, and what’s the next best action. (Nielsen Norman Group)

- Make authentication part of the journey design: move high-trust actions to phishing-resistant flows (e.g., passkeys) and design risk-calibrated step-ups for consequential actions. (FIDO Alliance)

- Create guardrails for generated UX: test suites for safety, accessibility, and compliance; regression controls for “UI drift” the same way you manage model drift. (arXiv)
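
The Zero-UI and "Outcome Surface" ideas above can be sketched as a routing decision plus a receipt. This is an illustrative shape under assumed thresholds; `route`, `Receipt`, and the default limits are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Receipt:
    """The five questions an outcome surface should answer."""
    what_changed: str
    why: str
    approved_by: str
    can_be_undone: bool
    next_best_action: str


def route(risk_score, amount, *, risk_threshold=0.3, amount_threshold=500.0):
    """Return 'zero_ui' for routine work, 'ask_approval' once a threshold is crossed."""
    if risk_score > risk_threshold or amount > amount_threshold:
        return "ask_approval"
    return "zero_ui"


# Illustrative routine action: a small subscription renewal.
if route(risk_score=0.1, amount=120.0) == "zero_ui":
    receipt = Receipt(
        what_changed="Renewed subscription SUB-42 for 12 months",
        why="Auto-renew policy matched and usage is above the renewal floor",
        approved_by="policy:auto-renew-v3",
        can_be_undone=True,
        next_best_action="Review seat count before next billing cycle",
    )
    print(receipt)
```

The thresholds are the governed part: they belong with roles, permissions, and process state rather than in a prompt, so that what gets executed silently is a policy decision and not a model's guess.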

Trend #6: Outcome Platforms, Digital Twins & AI-Augmented Professional Services

From 2026 through 2028, the center of gravity shifts from point products to end-to-end outcome platforms—where the offer is not a feature set, but a measurable business result delivered through software + orchestration + supervised AI services. Digital twins become the validation layer: simulate, stress-test, and optimize before execution, then keep learning from real-time telemetry. McKinsey’s work on digital twins repeatedly frames them as decision accelerators that enable “what-if” simulation across factories and supply chains and can materially improve performance when done end-to-end. (McKinsey & Company)

In professional services, AI doesn’t just “assist consultants.” It restructures delivery: repeatable work becomes supervised agentic workflows with SLOs, and humans focus on exceptions, judgment, and accountability. This is the practical bridge between software economics and services economics: outcomes at scale.

The shift is from “selling tools” to “operating outcomes.” The next platform category is a hybrid: product + operations + continuous optimization.

Three forces converge here:

1) Digital twins move from visualization to operational control

Digital twins are increasingly positioned as real-time virtual representations used to simulate and optimize complex systems (factory, supply chain, infrastructure). The value comes when the twin is tied to live data and becomes the decision layer—not a one-off model. (McKinsey & Company)

2) Simulation becomes the new “QA” for business change

Before you change a plant layout, alter a logistics policy, or re-parameterize workforce scheduling, you simulate it. WEF has highlighted digital twins as a way for organizations to experiment safely, learn faster, and apply improvements back to the real world. (World Economic Forum)

3) Professional services get re-encoded into governed workflows

Accenture’s “digital twin done right” framing (and subsequent twin-reality work) emphasizes combining AI, data, automation, and models—treating the twin as a means to reshape end-to-end delivery, not an end in itself. This matches the broader market direction: services become productized into repeatable workflows with clear performance outcomes. (Accenture)

Business impact

- Cost & efficiency: fewer real-world mistakes via pre-deployment simulation; reduced commissioning and rework; better asset utilization. (McKinsey & Company)

- Speed & adaptability: faster “policy-to-production” cycles because changes can be tested and rolled out with confidence. (World Economic Forum)

- Risk & resilience: safer change management; clearer audit trails from simulated decision rationale to executed outcomes. (World Economic Forum)

- Revenue & differentiation: platform offers priced on outcomes (throughput, downtime avoided, yield improved, claim resolved) and backed by continuous optimization—harder to displace than a dashboard product.

CXO CTAs

- Choose outcome units first: define the measurable business unit (minutes of downtime avoided, % yield lift, days-to-close reduced) before choosing tooling.

- Make the twin a control layer, not a visualization: require live data linkage, scenario testing, and decision traceability as non-negotiables. (McKinsey & Company)

- Productize services into supervised workflows: encode repeatable service work as governed steps + escalation + SLOs; humans own exceptions and accountability. (Accenture)

- Contract on performance, not activity: move from “hours and seats” to measurable, telemetry-backed outcomes (and define reversibility and dispute mechanisms).

- Build a continuous improvement loop: every execution updates the model/twin assumptions; every exception becomes a design input.

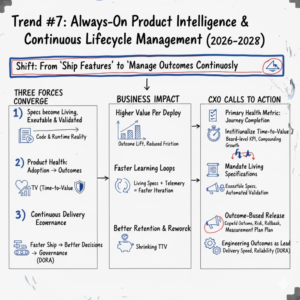

Trend #7: Always-On Product Intelligence & Continuous Lifecycle Management

From 2026 through 2028, “roadmaps” stop being promises and become living hypotheses—continuously updated by telemetry, outcome attainment, and real-world friction signals. High-performing teams will treat product intent, behavior, and quality as one continuously managed system: living specifications synchronized with code and runtime reality, not static documents that drift.

This trend is the product analogue of what modern engineering has already learned: measure outcomes, shorten feedback loops, and treat reliability as a feature. DORA’s research-backed metrics framing is a strong proxy for the mindset shift: performance is managed through measurable outcomes and continuous improvement, not activity proxies. (DORA)

The shift is from “ship features” to “manage outcomes continuously.” In an agentic world, the product isn’t “done” at release. It’s a living system that must continuously optimize: effectiveness, safety, cost-to-serve, and trust.

Three forces converge here:

1) Specs become living, executable, and continuously validated

“Living documentation” and executable specifications (from BDD) formalize a pragmatic idea: what the system does should be verifiable and remain aligned with behavior as the system changes. This approach makes drift visible and correctable rather than discovered in production by customers. (serenity-bdd.github.io)

2) Product health shifts from adoption to outcome completion and time-to-value

The most strategic product metrics are moving closer to the user’s realized value, not product activity. Time-to-Value (TTV) is increasingly treated as a core indicator because it measures when users actually reach meaningful benefit—not when they click around. (productled.org)

3) Continuous delivery economics force continuous product governance

When the delivery machine can ship frequently, the limiting factor becomes decision quality: what to ship, to whom, under what constraints, with what expected outcome and rollback path. Teams that operationalize this via measurable delivery and reliability outcomes outperform those managing by roadmap theater. (DORA)

Business impact

- Higher value per deploy: releases are evaluated on outcome lift and reduced friction, not volume of tickets closed or features launched. (DORA)

- Faster learning loops: living specs + telemetry reduce time between “we think” and “we know,” accelerating iteration with less regression risk. (serenity-bdd.github.io)

- Lower support and rework: earlier detection of behavioral drift reduces downstream incidents, customer confusion, and expensive remediation. (DORA)

- Better retention economics: shrinking time-to-value improves the likelihood users stick long enough to realize recurring value. (Amplitude)

CXO CTAs

- Replace “feature adoption” with “journey completion” as the primary health metric: define the 10–20 critical journeys and measure completion rate, time-to-complete, reversals, and escalation frequency.

- Institutionalize Time-to-Value: make TTV a board-level product KPI for priority journeys; treat reductions as compounding growth and cost-to-serve wins. (productled.org)

- Mandate living specifications for high-impact processes: tie key product behaviors to executable specs and automated validation so intent stays aligned with code. (serenity-bdd.github.io)

- Adopt outcome-based release governance: every major change declares expected outcome, risk envelope, rollback/reversibility, and measurement plan—before shipping.

- Use engineering outcome metrics as leading indicators: delivery speed and reliability metrics (e.g., DORA-style) should be reviewed alongside product outcomes to spot systemic bottlenecks early. (DORA)
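
As a rough illustration of "journey completion" and Time-to-Value as health metrics, the sketch below computes both from raw journey timestamps. The input shape (pairs of start time and value-reached time) and the function name are assumptions; a real pipeline would read these from product telemetry.

```python
from datetime import datetime
from statistics import median


def journey_health(journeys):
    """journeys: list of (started_at, value_reached_at or None) datetime pairs."""
    if not journeys:
        raise ValueError("at least one journey is required")
    ttv_hours = [
        (reached - started).total_seconds() / 3600
        for started, reached in journeys
        if reached is not None
    ]
    return {
        "completion_rate": len(ttv_hours) / len(journeys),
        "median_ttv_hours": median(ttv_hours) if ttv_hours else None,
    }


# Illustrative data: four users starting the same onboarding journey.
start = datetime(2026, 1, 1, 9, 0)
journeys = [
    (start, datetime(2026, 1, 1, 11, 0)),  # reached value after 2 hours
    (start, datetime(2026, 1, 2, 9, 0)),   # reached value after 24 hours
    (start, None),                         # never reached value
    (start, datetime(2026, 1, 1, 13, 0)),  # reached value after 4 hours
]
print(journey_health(journeys))
```

Using the median rather than the mean keeps one slow outlier from masking how most users actually experience the journey; that choice is a design preference here, not a requirement.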

Trend #8: Synthetic Market Intelligence — Product & Insights Hypothesis Engine

From 2026 through 2028, product teams stop treating “launch” as the primary learning mechanism. They shift from Build-Launch-Learn to Simulate-Validate-Decide: synthetic customer cohorts, scenario simulations, and pricing sensitivity models become a first-class product capability—used to test hypotheses before a single sprint is planned.

This is not “fake research.” It’s a new decision layer: when uncertainty is high and iteration costs are high, the winning move is to compress learning cycles with simulation—then validate selectively with real users and real-world signals. NN/g’s guidance on “synthetic users” is a pragmatic marker: these techniques are useful, but must be treated as directional and governed to avoid false confidence. (Nielsen Norman Group)

The shift is from market research as episodic discovery to market intelligence as a continuous hypothesis engine. In agentic product strategy, the scarcest resource is not ideas—it’s high-quality decisions under uncertainty.

Three forces converge here:

1) Synthetic cohorts reduce time-to-confidence in early decisions

Generative AI enables simulated personas/cohorts and scenario exploration that can rapidly stress-test positioning, messaging, onboarding flows, and edge cases. This doesn’t replace user research—but it changes sequencing: simulation first, then targeted real validation. (Nielsen Norman Group)

2) Synthetic data becomes a mainstream enabler for sparse, sensitive, or high-risk domains

Forrester explicitly frames synthetic data as a catalyst for AI development and highlights the difficulty of balancing fidelity, privacy, and bias—meaning governance and measurement become part of product discipline, not an afterthought. (Forrester)

3) Scenario planning becomes product-native, not strategy-only

Forrester also positions GenAI as a practical accelerator for long-term scenario planning—using generated scenarios and predictions as an interface for analysts and executives. In product terms, this becomes “continuous strategy telemetry”: what happens to adoption, churn, conversion, cost-to-serve, and trust under different market conditions. (Forrester)

Business impact

- Fewer expensive misbuilds: earlier signal on whether a concept fails on comprehension, value perception, or trust—before engineering effort is committed. (Nielsen Norman Group)

- Faster product cycles: hypothesis generation and prioritization compress from weeks to days; real-user validation becomes more surgical and higher ROI. (arXiv)

- Better pricing and packaging: scenario simulation improves sensitivity analysis and outcome-unit design earlier in GTM planning (especially under variable AI COGS). (Forrester)

- Improved governance and defensibility: using synthetic data responsibly can reduce exposure in regulated contexts—but only if bias/fidelity controls are explicit. (Forrester)

CXO CTAs

- Create a “Hypothesis Engine” operating cadence: every major bet declares assumptions (persona, willingness-to-pay, adoption path, risk) and is run through simulation + targeted real validation. (Forrester)

- Treat synthetic insights as directional, not dispositive: require triangulation with real telemetry, interviews, experiments, and domain experts; ban “synthetic-only” go decisions for high-stakes bets. (Nielsen Norman Group)

- Stand up synthetic data governance: fidelity thresholds, bias checks, privacy controls, documentation, and approval gates—especially when synthetic data influences product decisions. (Forrester)

- Instrument “decision quality” as a product capability: track which assumptions were wrong, how fast they were corrected, and the cost avoided—make learning a measurable system. (McKinsey & Company)

- Use simulation for pricing + cost-to-serve together: model willingness-to-pay alongside expected inference/orchestration cost envelopes to avoid margin surprises later. (Forrester)
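As a rough illustration of modeling price and cost-to-serve together, the sketch below compares gross margin per outcome unit across two usage scenarios. Every name and number in it (`Scenario`, the prices, token counts, and cost rates) is a hypothetical planning input, not a figure from the sources cited above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """One pricing scenario; all fields are hypothetical planning inputs."""
    name: str
    price_per_outcome: float    # price charged per outcome unit
    tokens_per_outcome: int     # expected tokens consumed per outcome
    cost_per_1k_tokens: float   # blended inference cost per 1,000 tokens
    orchestration_cost: float   # per-outcome overhead (routing, tools, monitoring)


def cost_to_serve(s: Scenario) -> float:
    """Variable cost of delivering one outcome unit."""
    inference = s.tokens_per_outcome / 1000 * s.cost_per_1k_tokens
    return inference + s.orchestration_cost


def gross_margin(s: Scenario) -> float:
    """Gross margin as a fraction of price for one outcome unit."""
    return (s.price_per_outcome - cost_to_serve(s)) / s.price_per_outcome


scenarios = [
    Scenario("base", 2.00, 40_000, 0.01, 0.10),
    Scenario("heavy-usage", 2.00, 120_000, 0.01, 0.10),
]

for s in scenarios:
    print(f"{s.name}: cost={cost_to_serve(s):.2f} margin={gross_margin(s):.0%}")
```

With these made-up inputs, tripling token consumption at a fixed price cuts margin from about 75% to about 35%, which is the kind of surprise the simulation is meant to surface before launch.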

Trend #9: New Emerging Product Categories — Personal AI, Enterprise AI, World Knowledge AI & Synthetic Metaverse AI

From 2026 through 2028, “AI features” won’t be the story. Entire new product categories will become normal purchase motions—because agents shift software from tools you use to systems that know, decide, and act. Gartner’s strategic predictions point to AI agents intermediating a large share of B2B buying by 2028, which is effectively a distribution reset: products compete to become the machine-preferred source of truth and execution. (Gartner)

The winners will stop thinking in terms of apps and start designing AI-native products with compounding context, defensible domain truth, and governed autonomy.

The shift is from “software as interface” to “software as an operating entity.” These categories emerge because three things become possible at once: persistent context, agentic action, and machine-mediated markets.

Three forces converge here:

1) Personal AI becomes a compounding asset, not a convenience feature

WEF’s “digital entities” framing makes the direction clear: assistants, “digital twins/doppelgangers,” and AI-driven entities evolve into persistent counterparts that support decisions and actions over time. The key product logic is compounding: the longer the system knows you (within consent boundaries), the more valuable it becomes—and the harder it is to replace. (World Economic Forum)

2) Enterprise AI products shift from copilots to function replacement

Gartner expects a rapid expansion of task-specific AI agents embedded across enterprise applications by 2026, signaling that “agentic capability” becomes a default expectation inside core workflows—not an add-on. (Gartner) McKinsey’s “superagency” and State of AI work reinforces the same point: enterprises are already moving from experimentation toward scaled, function-level deployment where agents plan and execute multi-step work, not just generate text. (McKinsey & Company)

3) World Knowledge AI and Synthetic Metaverse AI become new intelligence layers

Two adjacent categories mature:

- World Knowledge AI: continuously updated, cross-source reasoning for regulatory intelligence, scientific discovery, market truth, and risk sensing—beyond search and beyond static BI. (This becomes especially valuable as machine-mediated buying and decisioning accelerates.) (Gartner)

- Synthetic Metaverse AI: purpose-built simulated worlds for training, planning, safety testing, and discovery. WEF explicitly links generative AI with dynamic virtual environments; academic work also frames the metaverse as an innovation platform where generative AI scales content and interaction. (World Economic Forum)

Business impact

- New moats form: compounding context + trust + governance becomes harder to copy than UI or generic model access. (World Economic Forum)

- Distribution rewires: agent-intermediated procurement favors machine-readable, verifiable capabilities and “domain truth” suppliers. (Gartner)

- Operating model shift: enterprise AI products become measurable “digital labor” capacity at the function level (speed, quality, compliance), not a productivity layer. (McKinsey & Company)

- Acceleration via simulation: synthetic environments enable faster validation of training, policy, and safety behaviors before deploying into real operations. (World Economic Forum)

CXO CTAs

- Pick your category bet explicitly: are you building Personal AI, Enterprise AI function replacement, World Knowledge AI, or Synthetic Worlds? Each implies different data, governance, and GTM. (World Economic Forum)

- Design “compounding context” with consent boundaries: treat memory, preference, and user models as governed assets (what’s stored, why, retention, portability, deletion). (World Economic Forum)

- Package enterprise value as outcomes: define function-level outcome units (claims resolved, renewals saved, exceptions cleared) and make agents accountable to measurable SLOs. (Gartner)

- Become machine-preferred: publish stable, testable, machine-consumable capability contracts and provenance so agents can safely trust and transact with you. (Gartner)

- Use synthetic worlds as a safety and scale lever: train, test, and benchmark behaviors in simulation—then validate selectively in production with strong monitoring and rollback. (World Economic Forum)

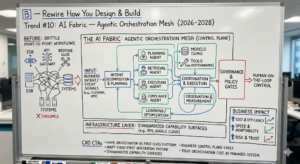

B — Rewire How You Design & Build

Trend #10: AI Fabric — Agentic Orchestration Mesh

From 2026 through 2028, the enterprise “integration layer” gets rewritten. The old center (API gateways + ESBs + brittle point-to-point workflows) won’t disappear, but it will be subsumed by an orchestration mesh that coordinates multiple agents, multiple models, tools, and policies across the business. Gartner’s 2026 framing explicitly elevates multi-agent systems and AI application orchestration as a strategic architecture layer—not a niche pattern. (Gartner)

The winners will treat orchestration as a control plane: how intent is decomposed, executed, governed, observed, and paid for.

The shift is from “integrating systems” to “orchestrating work.” Orchestration becomes the tier where the enterprise decides: which agent acts, which tool is used, what data is allowed, what must be approved, and how outcomes are measured.

Three forces converge here:

1) Multi-agent coordination becomes the new default for complex work

Single-agent “do everything” designs fail under real enterprise complexity. Multi-agent systems modularize work: planning, retrieval, verification, execution, and compliance checks can be separated and governed. Gartner is already positioning multi-agent systems as central to scalable automation. (Gartner)

2) Pub-sub event backbones become the nervous system

Agentic enterprises are event-driven: signals from customers, operations, devices, and systems trigger autonomous workflows. Event-driven architecture (EDA) and publish/subscribe patterns are increasingly described as the backbone for scalable, real-time enterprise integration—exactly what orchestration needs to be responsive and resilient. (Google Cloud)
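To make the publish/subscribe idea concrete, here is a minimal in-process sketch. It is only an illustration of the pattern: the `EventBus` class and the topic name are hypothetical, and a real deployment would sit on a managed broker with durable delivery rather than a Python dictionary.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class EventBus:
    """Toy pub/sub bus: topics map to a list of subscriber callbacks."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver the event to every subscriber; return how many received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


bus = EventBus()
log: List[str] = []

# Two independent agents react to the same signal without knowing about each other.
bus.subscribe("shipment.delayed", lambda e: log.append(f"rebook {e['shipment_id']}"))
bus.subscribe("shipment.delayed", lambda e: log.append(f"notify {e['shipment_id']}"))

delivered = bus.publish("shipment.delayed", {"shipment_id": "S-100"})
print(delivered, log)
```

The producer never calls the agents directly, so new subscribers can be added without touching the system that emits the signal.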

3) Connector standards normalize “tool access” as infrastructure

As agent ecosystems mature, standardization accelerates. MCP is widely described as a standardized integration layer enabling agents to safely access tools and external context—complementing orchestration frameworks rather than replacing them. This changes the economics: less bespoke glue, more reusable capability surfaces. (IBM)

Business impact

- Cost & efficiency: orchestration reduces duplicate workflow builds and brittle integrations; capabilities become reusable building blocks. (IBM)

- Speed & adaptability: new processes are composed by routing intent across skills and events, not by re-platforming apps. (Gartner)

- Risk, trust & resilience: governance shifts left and right—policy gates, human-on-the-loop controls, and audit trails become runtime requirements, not documentation. Deloitte’s outlook points to human-on-the-loop orchestration as the practical enterprise direction. (Deloitte)

- Platform power: whoever owns orchestration increasingly owns how work is routed, measured, and monetized—becoming the gravitational center of the internal platform economy.

CXO CTAs

- Name orchestration as a first-class platform: assign clear ownership (architecture + product + ops), with KPIs tied to cycle time, reliability, and policy compliance. (Deloitte)

- Adopt an event-first integration posture: treat pub/sub as the default for cross-domain process signals; reserve synchronous APIs for bounded, latency-critical transactions. (Google Cloud)

- Standardize “capability surfaces” as skills/intents: make every major enterprise capability discoverable, callable, constrained, and observable—before scaling agents. (IBM)

- Engineer the control plane early: policy evaluation, permission manifests, escalation paths, reversibility, and provenance must be native to orchestration—retrofits fail under incident pressure. (Deloitte)

- Treat orchestration cost as a managed system: build FinOps-for-agents discipline (routing, caching, tool choice, model choice) so variable AI COGS doesn’t surprise margins.

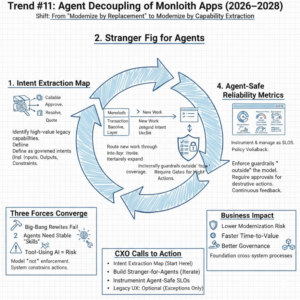

Trend #11: Agent Decoupling of Monolith Apps

From 2026 through 2028, most enterprises won’t “rewrite the monolith.” They’ll decouple it for agents: expose legacy capabilities as discrete, governed, agent-callable intents while keeping the core system stable as a system of record. This is the Strangler Fig pattern adapted for the agentic era—incremental extraction of capabilities without a risky big-bang rewrite. (martinfowler.com)

The winners will treat legacy apps as data-of-record + transaction integrity, while intelligence and workflow migrate upward into orchestration—one capability at a time.

The shift is from “modernize by replacement” to “modernize by capability extraction.” Agent decoupling turns a monolith into a portfolio of callable capabilities with clear contracts, constraints, and observability.

Three forces converge here:

1) Big-bang rewrites remain a failure pattern—incremental displacement wins

Modernization patterns like Strangler Fig exist because they reduce risk: value can be delivered steadily, progress can be monitored, and you avoid overbuilding features that rewrite programs tend to create. (martinfowler.com)

2) Agents require stable “skills,” not fragile UI paths

Agents can’t depend on brittle screen flows and implicit business rules. They need explicit intents (“create supplier,” “approve credit exception,” “rebook shipment”) with deterministic validation, permissioning, and predictable error semantics—otherwise autonomy amplifies failure. (architect.salesforce.com)

3) Tool-using AI expands the attack surface—governed decoupling is safer than direct exposure

As soon as agents can call tools, you inherit prompt-injection risks and unintended action execution. OWASP’s guidance makes the practical implication clear: the model must not be the enforcement layer—your system must constrain tool access and validate actions. (OWASP Cheat Sheet Series)

Business impact

- Lower modernization risk: incremental extraction reduces downtime risk and avoids “rewrite shock” to operations. (Microsoft Learn)

- Faster time-to-value: you can expose the 20% of capabilities that drive 80% of outcomes first—without waiting for a full transformation. (martinfowler.com)

- Better governance: each extracted intent becomes a controllable surface with permissions, policy gates, and audit trails—far safer than letting agents “click around” legacy UX. (OWASP Cheat Sheet Series)

- Foundation for orchestration: once intents exist, the orchestration mesh can compose cross-system processes reliably. (Gartner)

CXO CTAs

- Start with an “Intent Extraction Map”: identify the highest-value legacy capabilities and define them as callable intents with inputs/outputs, constraints, and error semantics.

- Adopt Strangler-for-Agents execution: route new work through the intent layer while the monolith remains the transaction backbone; expand coverage iteratively. (Microsoft Learn)

- Build a deterministic control layer: enforce allowlists, validation, and permissions outside the model; require approvals for destructive or high-impact actions. (OWASP Cheat Sheet Series)

- Instrument “agent-safe reliability” metrics: success rate per intent, reversal rate, escalation rate, latency, and policy violations—managed like SLOs.

- Treat legacy UX as optional, not primary: keep screens for exceptions and human judgment; push routine execution through governed intents.
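One way to picture a deterministic control layer around extracted intents is sketched below. It assumes a hypothetical in-memory registry; the intent names echo the examples earlier in this section, while the validation rules and the 50,000 limit are invented for illustration. The point is that allowlisting, validation, and approval checks run in ordinary code outside the model.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional


@dataclass(frozen=True)
class Intent:
    name: str
    validate: Callable[[Dict[str, Any]], bool]  # deterministic input check
    requires_approval: bool = False             # high-impact actions need a human


def _valid_credit_exception(p: Dict[str, Any]) -> bool:
    amount = p.get("amount")
    return isinstance(amount, (int, float)) and 0 < amount <= 50_000  # hypothetical limit


# Allowlist: anything not registered here cannot be called by an agent.
REGISTRY: Dict[str, Intent] = {
    "rebook_shipment": Intent(
        "rebook_shipment",
        lambda p: isinstance(p.get("shipment_id"), str),
    ),
    "approve_credit_exception": Intent(
        "approve_credit_exception",
        _valid_credit_exception,
        requires_approval=True,
    ),
}


def authorize(intent_name: str, params: Dict[str, Any],
              approved_by: Optional[str] = None) -> str:
    """Decide whether an agent's requested action may proceed."""
    intent = REGISTRY.get(intent_name)
    if intent is None:
        return "rejected: intent not on allowlist"
    if not intent.validate(params):
        return "rejected: invalid parameters"
    if intent.requires_approval and approved_by is None:
        return "escalated: human approval required"
    return "allowed"


print(authorize("rebook_shipment", {"shipment_id": "S-100"}))
print(authorize("approve_credit_exception", {"amount": 12_000}))
print(authorize("drop_database", {}))
```

Each outcome string maps naturally onto the per-intent metrics listed above (success, escalation, and policy-violation rates).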

Trend #12: Intent-Based & Declarative Programming

From 2026 through 2028, the dominant engineering shift won’t be a new language—it will be a new contract between humans and machines: developers increasingly specify what must be achieved (intent, constraints, policies, quality bars), and AI systems determine how to implement it. This maps to the broader move analysts describe as “AI-native development platforms,” where development becomes more model-assisted, policy-constrained, and automation-heavy. (Gartner)

The winners will treat prompts, plans, and constraints as versioned engineering artifacts, governed in CI/CD, tested continuously, and monitored in production—because “intent” becomes part of the executable system.

The shift is from coding procedures to declaring outcomes with guardrails. Declarative systems succeed when the “what” is precise, testable, and enforceable—and the runtime can choose the best “how” safely.

Three forces converge here:

1) AI-native development makes “how” cheaper than “what”

As AI assistance increases throughput, the bottleneck shifts to: clarifying intent, defining constraints, and making correctness measurable. Strategy firms are now explicit that realizing value requires operating model change, not just tool adoption. (McKinsey & Company)

2) Prompts and intents become first-class engineering artifacts

Teams are converging on a hard-earned lesson: ad-hoc prompt changes create silent regressions. The emerging best practice is to version, test, and monitor prompts like code—because they are production logic. (blog.neosage.io)

3) Declarative patterns are the safest way to govern agentic execution

When AI is taking actions (not just generating text), declarative intent + policy constraints reduce ambiguity and make automation auditable. The industry narrative is increasingly: declare desired outcomes and let the runtime decide execution under governance. (jit.pro)

Business impact

- Speed with control: faster delivery without losing governance—because “intent” is explicit and testable, not tribal knowledge. (McKinsey & Company)

- Lower defect and drift risk: versioned prompts/intent specs reduce “behavior drift” that otherwise shows up as production surprises. (blog.neosage.io)

- Better reuse: intent definitions and constraints become reusable building blocks across teams and products. (jit.pro)

- Clearer accountability: humans own intent, constraints, and approval policies; machines own implementation and execution—improving auditability and decision traceability.

CXO CTAs

- Mandate “intent specs” for critical work: every high-impact capability must have a declared outcome, constraints, acceptance tests, and reversibility plan—before code generation. (McKinsey & Company)

- Operationalize a prompt/intent lifecycle: versioning, test suites, staged rollout, regression detection, and rollback for prompts and agent plans—same rigor as application code. (blog.neosage.io)

- Shift QA from UI paths to outcome properties: test what must always be true (compliance, invariants, safety constraints), not only scripted flows. (jit.pro)

- Create “policy-as-code” guardrails: ensure AI-generated implementations cannot bypass authorization, data classification, or risk thresholds—enforced at runtime.

- Measure engineering by value per deploy: treat throughput as secondary to outcome quality, stability, and business impact.
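A prompt/intent lifecycle can start very small. The sketch below treats an intent spec as a versioned artifact and blocks content changes that arrive without a version bump. The `IntentSpec` structure and the status strings are assumptions made for illustration, not a standard format.

```python
import hashlib
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class IntentSpec:
    """Hypothetical versioned artifact: prompt text plus declared constraints."""
    name: str
    version: str
    prompt: str
    constraints: Tuple[str, ...]

    def fingerprint(self) -> str:
        """Content hash over everything that affects runtime behavior."""
        payload = "\n".join([self.name, self.prompt, *self.constraints])
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def check_change(old: IntentSpec, new: IntentSpec) -> str:
    """CI-style gate: silent edits are blocked, versioned edits trigger tests."""
    if old.fingerprint() == new.fingerprint():
        return "unchanged"
    if old.version == new.version:
        return "blocked: content changed without a version bump"
    return "changed: run regression suite before rollout"


v1 = IntentSpec("refund", "1.0.0", "Issue refunds under policy.", ("max_amount<=100",))
silent_edit = IntentSpec("refund", "1.0.0", "Issue refunds generously.", ("max_amount<=100",))
v1_1 = IntentSpec("refund", "1.1.0", "Issue refunds generously.", ("max_amount<=100",))

print(check_change(v1, silent_edit))
print(check_change(v1, v1_1))
```

Hashing the constraints along with the prompt means a loosened guardrail is caught the same way as a reworded instruction.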

Trend #13: Engineering Intelligence Layer

From 2026 through 2028, engineering leadership stops running on lagging indicators (velocity, story points, “utilization”) and moves to an always-on engineering intelligence layer: real-time signals that connect delivery throughput, reliability, developer experience, technical debt risk, and—crucially—value per deploy.

The trigger is simple: as AI amplifies output, activity metrics become noisier. The winners will measure what matters: outcome delivery, stability, and the health of the socio-technical system. DORA’s evolution toward a more explicit throughput/instability model is a clear marker of the direction. (DORA)

The shift is from “tracking delivery” to “governing the engineering system.” Engineering intelligence becomes a control surface: detect drift, prevent regressions, and direct investment (platform, quality, debt, tooling, skills) based on live signals—not anecdotes.

Three forces converge here:

1) DORA-style outcomes become the baseline, not the finish line

DORA research emphasizes measurable performance outcomes and has updated its model over time (including how it frames stability/throughput). This supports a broader leadership shift: treat delivery + operations performance as a managed system with explicit trade-offs and leading indicators. (DORA)
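For readers who want to see what throughput and instability signals look like in practice, here is a minimal sketch that derives three DORA-style numbers from deployment records. The `Deploy` record shape and the sample data are hypothetical; real pipelines would pull these fields from CI/CD and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Dict, List


@dataclass(frozen=True)
class Deploy:
    committed_at: datetime   # when the change was committed
    deployed_at: datetime    # when it reached production
    caused_incident: bool    # whether it was later linked to a failure


def summarize(deploys: List[Deploy], window_days: int) -> Dict[str, float]:
    """Throughput (frequency, lead time) and instability (failure rate)."""
    if not deploys:
        raise ValueError("no deploys in window")
    lead_hours = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deploys
    ]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": sum(d.caused_incident for d in deploys) / len(deploys),
    }


start = datetime(2026, 1, 5, 9, 0)
sample = [
    Deploy(start, start + timedelta(hours=2), False),
    Deploy(start, start + timedelta(hours=4), False),
    Deploy(start, start + timedelta(hours=6), True),
    Deploy(start, start + timedelta(hours=8), False),
]

print(summarize(sample, window_days=2))
```

Reporting the failure rate next to the throughput numbers keeps the trade-off visible instead of rewarding speed alone.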

2) Productivity measurement matures beyond “activity”

The SPACE framework makes the point bluntly: developer productivity is multi-dimensional (satisfaction, performance, activity, communication/collaboration, efficiency/flow). Leaders who optimize a single proxy metric reliably create dysfunction. (queue.acm.org)

3) AI changes what “good” looks like—and raises new risks

As AI increases code generation and change volume, the risk shifts toward: review quality, architectural coherence, security regressions, dependency sprawl, and prompt/agent drift. You need telemetry that measures not just speed, but confidence, maintainability, and resilience—in near real time. (This aligns with the broader “AI-native development platforms” trend framing.) (Gartner)

Business impact

- Cost & efficiency: investment shifts from “more output” to removing systemic bottlenecks (build pipelines, flaky tests, unclear ownership, platform friction), improving ROI per engineering dollar. (Microsoft Developer)

- Speed & adaptability: teams sustain higher change rates with less instability because early warning signals surface where quality is degrading before incidents spike. (DORA)

- Risk, trust & resilience: engineering intelligence makes reliability a first-class product characteristic that is measured, governed, and improved continuously. (DORA)

- Revenue & differentiation: higher “value per deploy” compounds: faster iteration on what customers actually complete, fewer regressions, and lower support burden.

CXO CTAs

- Define a single engineering leadership surface: unify DORA-style outcomes + SPACE dimensions + technical debt risk into one executive view; stop running separate dashboards that encourage local optimization. (DORA)

- Replace “velocity” with “value per deploy”: for priority journeys, require every significant release to declare intended outcome lift and measure it post-deploy (completion, time-to-value, reversals, escalations).

- Instrument developer experience as a control variable: treat friction (environment setup time, CI latency, flaky tests, cognitive load) as measurable and fundable—not “team complaints.” (Microsoft Developer)

- Track AI-era quality signals explicitly: PR review depth, test coverage quality (not quantity), security findings, dependency risk, incident correlation, and prompt/agent drift—tie them to release gates. (Gartner)

- Create an “engineering debt portfolio”: quantify debt like financial risk (probability × impact) and manage it continuously, not as a quarterly cleanup ritual.
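The "probability × impact" framing for engineering debt can be written down directly. The sketch below ranks debt items by expected exposure; the item names, probabilities, and cost figures are invented for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DebtItem:
    name: str
    probability: float  # estimated chance of causing an incident this period (0..1)
    impact: float       # estimated cost if it does, in currency units

    @property
    def exposure(self) -> float:
        """Expected loss: probability x impact."""
        return self.probability * self.impact


def rank(items: List[DebtItem]) -> List[DebtItem]:
    """Highest expected loss first."""
    return sorted(items, key=lambda d: d.exposure, reverse=True)


portfolio = [
    DebtItem("flaky integration tests", 0.9, 10_000),
    DebtItem("unpatched auth library", 0.1, 200_000),
    DebtItem("single-owner billing module", 0.5, 100_000),
]

for item in rank(portfolio):
    print(f"{item.name}: {item.exposure:,.0f}")
```

Note how the most frequent annoyance (flaky tests) ranks last once impact is priced in, which is the argument for managing debt as a portfolio rather than by complaint volume.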

Trend #14: The Agentic Engineering Team

From 2026 through 2028, “AI in engineering” stops being a productivity add-on and becomes a team topology change. The practical end-state is smaller human cores (principal architects, domain owners, risk stewards) directing a larger layer of virtual contributors (coding, testing, refactoring, documentation, incident triage)—with humans shifting from doing tasks to governing outcomes. McKinsey’s research on AI in software delivery consistently points to significant productivity upside, but also stresses that value depends on operating model and governance changes—not just tool rollout.

The winners will redesign how work is specified, reviewed, assured, and shipped—because AI increases throughput faster than traditional quality systems can absorb.

The shift is from “teams that write code” to “teams that run an agentic delivery system.” Engineering becomes a supervised production line for software change: high volume, policy-constrained, continuously verified.

Three forces converge here:

1) Continuous delivery becomes continuous agentic delivery

DORA’s metrics and research reinforce a core point: the best performers ship frequently and maintain stability—meaning performance is a system property, not a hero story. As AI increases change volume, the team must become better at system-level control (tests, gates, observability, rollback) or instability explodes.

2) “What to build” becomes the bottleneck, not “how to code it”

As coding gets cheaper, requirements precision, acceptance criteria, risk classification, and architectural intent become the scarce assets. This mirrors the broader productivity measurement guidance in SPACE: optimizing for activity alone creates dysfunction; you need multi-dimensional governance of outcomes and flow.

3) New failure modes force new disciplines

AI-assisted engineering introduces distinct risks: silent regressions, dependency sprawl, inconsistent architecture, insecure patterns, and “agent drift” in generated changes. OWASP’s guidance on LLM risks highlights why you cannot rely on model behavior for safety—controls must exist outside the model.

Business impact

- Cost & efficiency: fewer humans can deliver more change—but only if the assurance system scales (tests, review automation, policy gates, platform support).

- Speed & adaptability: teams can iterate faster on outcomes, not just ship more code; change lead time compresses when intent → implementation becomes semi-automated.

- Risk, trust & resilience: without new controls, higher throughput increases incident rate; with controls, you get higher change velocity and stability.

- Talent model: value concentrates in principal engineers who can govern architecture, constraints, and quality—while routine implementation becomes commoditized.

CXO CTAs

- Redesign roles, not just tools: establish clear ownership for (a) intent/specs, (b) architecture decisions, (c) quality gates, (d) operational readiness, and (e) agent governance.

- Create “virtual contributor” controls: define what agents can change, approval thresholds, mandatory tests, security checks, and rollback rules—enforced in CI/CD, not by policy documents.

- Standardize agentic SDLC disciplines: agentic QA, agentic DevOps/SRE, agentic data engineering—each with playbooks, measurable SLOs, and auditability.

- Measure the right productivity: adopt a multi-dimensional measurement approach (quality + flow + satisfaction), and stop incentivizing raw output.

- Fund the platform spine: invest in internal developer platforms, golden paths, and automated assurance so higher throughput doesn’t become higher chaos.
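"Enforced in CI/CD, not by policy documents" could look something like the gate below, which a pipeline would run on every agent-authored change. The policy values, path patterns, and check names are all hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List, Set

# Hypothetical policy for changes authored by virtual contributors.
POLICY = {
    "allowed_paths": ["src/*", "tests/*", "docs/*"],
    "protected_paths": ["src/auth/*"],
    "max_changed_lines": 400,
    "required_checks": {"unit_tests", "security_scan"},
}


def gate(changed_files: Iterable[str], changed_lines: int,
         passed_checks: Set[str]) -> List[str]:
    """Return the reasons an agent change cannot merge unattended (empty = ok)."""
    reasons: List[str] = []
    for path in changed_files:
        if any(fnmatchcase(path, pat) for pat in POLICY["protected_paths"]):
            reasons.append(f"{path}: protected path, human approval required")
        elif not any(fnmatchcase(path, pat) for pat in POLICY["allowed_paths"]):
            reasons.append(f"{path}: outside paths agents may change")
    if changed_lines > POLICY["max_changed_lines"]:
        reasons.append("change too large for unattended merge")
    missing = POLICY["required_checks"] - passed_checks
    if missing:
        reasons.append("missing checks: " + ", ".join(sorted(missing)))
    return reasons


print(gate(["src/app.py"], 50, {"unit_tests", "security_scan"}))
print(gate(["src/auth/login.py", "infra/main.tf"], 900, {"unit_tests"}))
```

An empty list means the change may proceed automatically; anything else routes to a human reviewer with the reasons attached.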

Trend #15: Enterprise AI Sovereignty & Citizen Agent Building

From 2026 through 2028, “enterprise AI strategy” becomes inseparable from sovereignty: where models run, where data is processed, who controls operations, and how risk is governed across jurisdictions. Gartner’s 2026 trends explicitly elevate geopatriation and confidential computing alongside AI platform shifts—signaling that location, control, and compliance are now architectural constraints, not procurement checkboxes. (Gartner)

At the same time, enterprises won’t scale agents only through central teams. They’ll scale through citizen agent building—but only inside governed zones that prevent shadow AI sprawl. Microsoft’s Copilot Studio governance guidance is explicit about zoning citizen development to manage risk and complexity. (Microsoft Learn)

The shift is from “AI adoption” to “governed autonomy at scale”—owned by the enterprise, not improvised by teams.

Three forces converge here:

1) Sovereignty moves from data residency to full-stack operational control

Sovereignty now means more than “data stays in-region.” It includes control of model routing, inference environments, access policy, auditability, and the ability to meet geopolitical/regulatory demands without stopping innovation. Gartner’s trend set (e.g., confidential computing, geopatriation) reflects this broadening definition. (Gartner)

2) Shadow AI is already a governance and security problem

Uncontrolled use of generative AI (“shadow AI”) is being widely reported as a real enterprise risk pattern—data leakage, IP exposure, and unapproved decision automation. The lesson: if approved options are slow or restricted, teams will route around them. (IT Pro)

3) Citizen-built agents become the next personalization wave—if zoned and governed

Enterprises need distributed creation, but not distributed chaos. “Zoned governance” (low-risk personal/team agents vs higher-risk business agents) is emerging as the operating model that allows scale with control. (Microsoft Learn) In parallel, platforms are moving toward “bring your own model” patterns, reflecting enterprise demand to keep model choice and control portable rather than locked to a single vendor stack. (UiPath)

Business impact

- Faster scale with fewer failures: governed citizen creation increases automation coverage without expanding central bottlenecks. (Microsoft Learn)

- Reduced risk exposure: zoning + controls reduces shadow AI leakage and unsafe autonomous actions. (IT Pro)

- Negotiating power and portability: BYOM/model-agnostic expectations shift leverage toward enterprises that can enforce portable contracts, not vendor-specific implementations. (UiPath)

- Operational resilience: sovereignty-aligned architecture reduces disruption from regulatory change, geopolitical constraints, and cross-border data limitations. (Gartner)

CXO CTAs

- Define your sovereignty stance in architecture terms: which workloads must be sovereign (data, models, keys, inference), what is shareable, and what “proof” is required (auditability, controls, isolation). (Gartner)

- Implement zoned agent governance: explicitly separate low-risk citizen agents from business-critical agents with escalating requirements (identity, approvals, logging, DLP, monitoring). (Microsoft Learn)

- Offer an approved enterprise alternative to shadow AI: make it easier to do the right thing than the risky thing—enterprise-grade access, safe defaults, and fast enablement. (IT Pro)

- Set a “BYOM-ready” standard: require portability of models and policy controls inside major platforms to avoid long-term lock-in as agent adoption accelerates. (UiPath)

- Treat governance as product infrastructure: agent inventory, permissions, telemetry, and incident playbooks—run like a production platform, not a policy deck. (Microsoft Adoption)
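Zoned governance with escalating requirements can be expressed as a small lookup. The sketch below is an assumption-heavy illustration: the zone names, the classification rule, and the control-to-zone mapping are invented here and are not taken from Microsoft's guidance or any other cited source.

```python
from typing import Dict, Iterable, Set

# Hypothetical zones with escalating control requirements.
ZONE_CONTROLS: Dict[str, Set[str]] = {
    "personal": {"identity", "logging"},
    "team": {"identity", "logging", "dlp"},
    "business_critical": {"identity", "logging", "dlp", "approvals", "monitoring"},
}


def classify(handles_sensitive_data: bool, takes_actions: bool, shared: bool) -> str:
    """Assign a zone from three coarse risk questions (illustrative rule only)."""
    if handles_sensitive_data or takes_actions:
        return "business_critical"
    return "team" if shared else "personal"


def missing_controls(zone: str, enabled: Iterable[str]) -> Set[str]:
    """Controls the agent still needs before it can be published in its zone."""
    return ZONE_CONTROLS[zone] - set(enabled)


zone = classify(handles_sensitive_data=False, takes_actions=True, shared=True)
print(zone, sorted(missing_controls(zone, ["identity", "logging", "dlp"])))
```

Keeping the mapping in one place makes the agent inventory auditable: every agent has a zone, and every zone has a checkable list of controls.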

Trend #16: Enterprise Ontology Engineering — The Knowledge Foundation

From 2026 through 2028, many “agent failures” won’t be model failures. They’ll be meaning failures: the enterprise can’t reliably tell an agent what “customer,” “contract,” “risk,” “approval,” or “done” actually means across functions and systems. This is why the semantic layer and knowledge-graph approaches are increasingly framed by leading analyst research as core enablers for trustworthy GenAI in enterprises. (Forrester)

The winners will treat ontology engineering as infrastructure: a governed “meaning layer” that makes agentic systems auditable, interoperable, and safe to scale.

The shift is from “data integration” to “meaning integration.” You can integrate systems and still have semantic chaos. Agents amplify that chaos because they operate at the level of concepts, relationships, and intent—not tables and screens.

Three forces converge here:

1) GenAI needs structured knowledge to be reliable in enterprise settings

Forrester’s work on knowledge graphs + GenAI highlights a growing conviction: structured enterprise knowledge (entities, relationships, domain facts) is essential to reduce hallucination risk and improve reasoning quality in real deployments. (Forrester)

2) “Agent callability” requires shared meaning to be safe

As agent ecosystems expand, you’re effectively publishing callable capabilities into a mesh. Without a common meaning layer, you get brittle automation: the same term triggers different actions in different contexts, and governance becomes unworkable.

3) Standards exist—enterprises just haven’t operationalized them

W3C standards such as OWL define formal ways to represent classes, relationships, and constraints—exactly the primitives you need to make enterprise meaning machine-checkable and consistent across systems and teams. (W3C)

Business impact

- Fewer agent errors that look like “hallucinations”: many failures are actually semantic mismatches; ontologies reduce ambiguity and enforce constraints. (Forrester)

- Faster scaling of automation: once meaning is standardized, new agents and workflows plug in with less bespoke mapping and fewer exceptions.

- Stronger governance and auditability: decisions can be traced through consistent definitions and relationships, improving defensibility under scrutiny. (This aligns with risk-governance directions like NIST’s AI RMF emphasis on context and trustworthiness.) (NIST Publications)

- Higher reuse across the enterprise: the meaning layer becomes a shared asset across analytics, automation, customer journeys, and compliance.

CXO CTAs

- Fund “meaning infrastructure” as a platform: treat ontology + semantic layer as a shared product, with roadmap, stewardship, and adoption KPIs (not a one-off data project). (Forrester)

- Start with 20–30 canonical concepts: define the enterprise-critical entities and relationships (customer, account, product, contract, incident, approval, obligation) and publish them as governed definitions with constraints.

- Tie ontologies to agent contracts: require that every high-impact agent skill references canonical concepts and validated relationships—no free-text semantics in consequential automation.

- Operationalize change control: ontologies must be versioned, tested for consistency, and rolled out like APIs—because changing meaning breaks systems. (W3C)

- Make “semantic drift” observable: instrument where terms are used inconsistently, where mappings break, and where exceptions cluster—then treat it like reliability debt.
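Tying agent skills to canonical concepts can be checked mechanically. The sketch below uses plain Python sets as a stand-in for an ontology; it is not OWL, and the concepts, relationship triples, and skill descriptors are hypothetical examples.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical canonical vocabulary: concepts and allowed relationship triples.
CONCEPTS: Set[str] = {"customer", "account", "contract", "approval"}
RELATIONS: Set[Tuple[str, str, str]] = {
    ("customer", "owns", "account"),
    ("contract", "requires", "approval"),
}


def validate_skill(skill: Dict) -> List[str]:
    """Return semantic errors for a skill descriptor (empty list = valid)."""
    errors: List[str] = []
    for concept in skill.get("concepts", []):
        if concept not in CONCEPTS:
            errors.append(f"unknown concept: {concept}")
    for triple in skill.get("relations", []):
        if tuple(triple) not in RELATIONS:
            errors.append(f"unvalidated relation: {' '.join(triple)}")
    return errors


good = {
    "name": "renew_contract",
    "concepts": ["contract", "approval"],
    "relations": [("contract", "requires", "approval")],
}
bad = {
    "name": "close_deal",
    "concepts": ["client"],  # synonym that was never made canonical
    "relations": [("client", "owns", "account")],
}

print(validate_skill(good))
print(validate_skill(bad))
```

Rejections from a check like this are also a cheap source of semantic-drift telemetry: each one records where a team's vocabulary diverged from the canonical set.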

Trend #17: Agent-Ready Data Architecture

From 2026 through 2028, “AI readiness” splits from “analytics readiness.” Dashboards can tolerate ambiguity; agents cannot. If data meaning is unclear, freshness is unknown, lineage is missing, or quality is unstable, agents don’t just misreport—they take wrong actions. Gartner’s D&A predictions are explicit that prioritizing semantics in AI-ready data materially improves GenAI accuracy and cost outcomes. (Gartner)

The winners will treat data as a governed contract system: meaning + quality + change control designed for autonomous consumption.

The shift is from “data pipelines” to a “data-as-contract runtime” for agents. Agent-ready means: machine-readable semantics, enforceable expectations, and reliable consumption without brittle human interpretation.

Three forces converge here:

1) Semantics becomes the AI performance multiplier

Enterprises that standardize meaning (metrics, entities, definitions) reduce ambiguity, improve retrieval quality, and lower downstream correction costs. Gartner’s prediction explicitly ties semantics in AI-ready data to large gains in model accuracy and cost reduction by 2027. (Gartner)

2) Data contracts move from “nice-to-have” to production safety

Data contracts are increasingly described as “APIs for data”—explicit agreements between producers and consumers about schema, semantics, quality, and terms of use. This matters because agents are consumers at scale; without contracts, breakage becomes constant. (Thoughtworks)

3) Standards and certification thinking emerge for autonomous consumption

As agent adoption grows, organizations are moving toward repeatable “readiness checks” (discoverability, understandability, governance, freshness, access controls) that resemble a lightweight certification model for whether a dataset/data product is safe for agentic use. (Salesforce)
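To make the "APIs for data" idea concrete, here is a minimal sketch of a contract that a producer publishes and a consumer (human or agent) checks before use. The `DataContract` class, its fields, and the `orders` example are assumptions for illustration. They do not follow the Data Contract Specification or any other published format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class DataContract:
    """Hypothetical contract: what a producer promises to every consumer."""
    name: str
    version: str
    owner: str
    fields: dict               # field name -> expected Python type
    max_staleness: timedelta   # freshness expectation


def validate_record(contract: DataContract, record: dict) -> list:
    """Return schema violations for one record (an empty list means compliant)."""
    violations = []
    for field_name, expected_type in contract.fields.items():
        if field_name not in record:
            violations.append(f"missing field: {field_name}")
        elif not isinstance(record[field_name], expected_type):
            violations.append(
                f"wrong type for {field_name}: expected {expected_type.__name__}"
            )
    return violations


def is_fresh(contract: DataContract, last_updated: datetime, now: datetime) -> bool:
    """True when the data is within the contract's freshness expectation."""
    return now - last_updated <= contract.max_staleness


# Illustrative contract for an imaginary "orders" data product.
ORDERS = DataContract(
    name="orders",
    version="1.2.0",
    owner="order-platform-team",
    fields={"order_id": str, "amount_cents": int, "currency": str},
    max_staleness=timedelta(hours=1),
)

now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
print(validate_record(ORDERS, {"order_id": "A1", "amount_cents": "19.99"}))
print(is_fresh(ORDERS, now - timedelta(hours=2), now))
```

The point of the sketch is that expectations are stated once by the producer and checked mechanically by every consumer, instead of being rediscovered after something breaks.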

Business impact

- Higher agent reliability: fewer action errors and fewer “hallucination” incidents caused by ambiguous or stale enterprise data. (Gartner)

- Lower operational cost: fewer downstream fixes, less manual reconciliation, fewer broken automations after schema changes. (Data Contract Specification)

- Faster onboarding of new agents/use cases: standardized semantics + contracts reduce bespoke mapping and rework. (opendatacontract.com)

- Better governance defensibility: clear lineage, ownership, and contractual expectations improve auditability when AI-driven decisions are challenged.

CXO CTAs

- Create an “Agent-Ready Data” standard: define the minimum bar (semantic definitions, ownership, freshness SLOs, lineage, access policies, quality tests, change notification). (Gartner)

- Mandate data contracts for critical domains: treat high-impact data products as versioned interfaces; breaking changes require governance and deprecation windows. (Data Contract Specification)

- Stand up a semantic layer program: prioritize the top business entities/metrics and make them the canonical meaning source for both humans and agents. (Gartner)

- Operationalize freshness and quality as SLOs: “last updated” and “quality score” become first-class runtime signals for agent decisioning. (Rishabh Software)

- Measure breakage as a board-relevant risk: track contract violations, schema drift incidents, and automation reversals attributable to data ambiguity—then fund fixes like reliability work.
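One way to picture the "minimum bar" and the freshness and quality signals above is a gate the agent runtime consults before acting. The checklist keys, the metadata shape, and the 0.95 quality threshold below are all assumptions chosen for illustration, not values from any cited source.

```python
# Checklist items mirror the minimum bar named above; the key names are hypothetical.
MINIMUM_BAR = (
    "semantic_definition",
    "owner",
    "freshness_slo_minutes",
    "lineage",
    "access_policy",
    "quality_tests",
    "change_notification",
)


def readiness_gaps(metadata: dict) -> list:
    """Return the minimum-bar items a dataset is missing (empty values count as gaps)."""
    return [item for item in MINIMUM_BAR if not metadata.get(item)]


def agent_may_act(metadata: dict, age_minutes: float, quality_score: float,
                  min_quality: float = 0.95) -> bool:
    """Allow action only if the dataset is agent-ready, fresh, and above the quality bar."""
    if readiness_gaps(metadata):
        return False
    if age_minutes > metadata["freshness_slo_minutes"]:
        return False
    return quality_score >= min_quality


# Illustrative metadata for an imaginary dataset.
CUSTOMER_360 = {
    "semantic_definition": "glossary/customer",
    "owner": "crm-data-team",
    "freshness_slo_minutes": 60,
    "lineage": "crm -> warehouse -> customer_360",
    "access_policy": "pii-restricted",
    "quality_tests": ["not_null(customer_id)", "unique(customer_id)"],
    "change_notification": "data-changes channel",
}

print(readiness_gaps({"owner": "crm-data-team"}))
print(agent_may_act(CUSTOMER_360, age_minutes=15, quality_score=0.99))
```

Each refusal from a gate like this is also a countable event, which is what makes breakage reportable as a risk metric.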

Trend #18: Long Context, Memory & Knowledge Architecture

From 2026 through 2028, one of the most consequential architecture decisions for agentic products will be how you build context: do you rely on retrieval (RAG), expand long-context usage, or engineer a hybrid memory stack? Google’s long-context guidance now explicitly frames 1M+ token windows as a practical development surface, not an experiment. (Google AI for Developers)

The winners will treat context as a supply chain: curated, fresh, permissioned, cost-governed, and observable—because context quality (and cost) becomes the primary determinant of agent reliability.

The shift is from “prompting a model” to “operating an enterprise memory system.” In agentic systems, the question isn’t “can the model answer?” It’s “did the system provide the right context, at the right time, with the right permissions, at the right cost?”

Three forces converge here:

1) Long-context windows change what’s architecturally possible

Models increasingly support very large context windows—up to ~1M tokens in certain offerings—making “read the whole corpus” workflows feasible for specific tasks like codebase reasoning and multi-document analysis. (Claude) This reduces chunking overhead, but it does not eliminate the need for good context engineering.

2) RAG doesn’t disappear; it becomes selective and policy-driven

Side-by-side comparisons in the ecosystem increasingly position long-context and RAG as complementary: long-context for bounded, high-fidelity tasks; retrieval for freshness, cost control, and targeted grounding. (Meilisearch) Enterprises will converge on hybrid designs: “long context for the working set, retrieval for the expanding universe.”

3) “Memory” becomes a product capability, not a feature

Long-term memory requires more than storing chat logs. Research on memory-augmented architectures shows structured approaches (selective retrieval + pruning) to maintain coherence and relevance over time. (arXiv) This pushes the enterprise toward explicit memory tiers: session memory, task memory, user memory (consent-bound), and organizational memory (policy-bound).
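The memory tiers described above could be sketched roughly as follows. This is a toy in-memory store, not the approach from the cited research: the `Tier`, `MemoryItem`, and `MemoryStore` names are hypothetical, and the retention windows are placeholders for what would be a policy decision.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Tier(Enum):
    SESSION = "session"
    TASK = "task"
    USER = "user"   # consent-bound
    ORG = "org"     # policy-bound


# Placeholder retention windows; real values are a policy decision.
RETENTION = {
    Tier.SESSION: timedelta(hours=1),
    Tier.TASK: timedelta(days=7),
    Tier.USER: timedelta(days=365),
    Tier.ORG: timedelta(days=3650),
}


@dataclass
class MemoryItem:
    tier: Tier
    text: str
    created_at: datetime
    consent: bool = False             # required for USER tier
    policy_id: Optional[str] = None   # required for ORG tier


class MemoryStore:
    def __init__(self):
        self.items = []
        self.audit_log = []  # (event, detail) pairs for later review

    def write(self, item: MemoryItem) -> None:
        """Store an item, refusing writes that lack consent or a policy reference."""
        if item.tier is Tier.USER and not item.consent:
            raise PermissionError("user memory requires consent")
        if item.tier is Tier.ORG and item.policy_id is None:
            raise PermissionError("org memory requires a policy reference")
        self.items.append(item)
        self.audit_log.append(("write", item.tier.value))

    def prune(self, now: datetime) -> int:
        """Delete items past their tier's retention window; return how many were removed."""
        kept = [i for i in self.items if now - i.created_at <= RETENTION[i.tier]]
        removed = len(self.items) - len(kept)
        self.items = kept
        self.audit_log.append(("prune", removed))
        return removed

    def recall(self, tier: Tier) -> list:
        """Return the stored texts for one tier."""
        return [i.text for i in self.items if i.tier is tier]
```

Keeping the tier on every item is the design choice that matters here: retention, consent, and audit rules can then be enforced at write and prune time rather than reconstructed afterwards.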

Business impact

- Higher reliability: fewer wrong actions caused by missing or stale context; improved coherence across multi-step workflows. (Google AI for Developers)

- Lower cost volatility: context strategy becomes a FinOps problem—1M-token prompts can be powerful but expensive; retrieval and caching become economic levers. (Meilisearch)

- Faster time-to-solution: long-context can eliminate orchestration overhead for certain analyses (e.g., “load everything and reason”), accelerating complex work. (Claude)

- Better governance: memory architectures make permission boundaries explicit (what is remembered, why, for how long, and who can access it).

CXO CTAs

- Make “context strategy” a named architecture decision: define where you use long-context, where you use retrieval, and where you require hybrid—by workload class and risk level. (Meilisearch)

- Engineer a memory tier model: session → task → user (consent) → org (policy) with clear retention, deletion, and audit rules. (arXiv)

- Operationalize context quality metrics: grounding rate, citation/attribution coverage, freshness, permission compliance, and “context defect” incident tags. (Google AI for Developers)

- Control cost with routing + caching: treat context assembly like performance engineering—cache stable context, retrieve only what changes, reserve full long-context for high-value moments. (Meilisearch)

- Treat memory as a trust surface: ensure sensitive memory is permissioned, encrypted where appropriate, and never implicitly expanded without policy.
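The routing and caching guidance above can be sketched as a small decision function plus a cache for stable context. The strategy names, the 1M-token default window, and the decision rules are assumptions for illustration. A real router would also weigh price, latency, and risk class.

```python
from functools import lru_cache


def choose_context_strategy(corpus_tokens: int, needs_fresh_data: bool,
                            high_value: bool, window_tokens: int = 1_000_000) -> str:
    """Pick a context strategy per request (illustrative rules only)."""
    if corpus_tokens > window_tokens:
        return "retrieval"       # corpus cannot fit in the window at all
    if needs_fresh_data:
        return "hybrid"          # long context for the working set, retrieval for changes
    if high_value:
        return "long_context"    # reserve full-window prompts for high-value moments
    return "retrieval"           # default to the cheaper path


@lru_cache(maxsize=256)
def stable_context(doc_id: str) -> str:
    """Stand-in for an expensive load of rarely changing context (policies, glossaries)."""
    return f"<contents of {doc_id}>"


def assemble_context(stable_ids: tuple, volatile_snippets: list) -> str:
    """Combine cached stable context with freshly retrieved snippets."""
    parts = [stable_context(doc_id) for doc_id in stable_ids]
    parts.extend(volatile_snippets)
    return "\n".join(parts)


print(choose_context_strategy(300_000, needs_fresh_data=False, high_value=True))
print(assemble_context(("policy-v3",), ["ticket 17 updated"]))
```

Only the volatile snippets are fetched on every call. The stable documents are served from the cache after the first load, which is where most of the cost saving would come from.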

Part 1 sets the foundation: from redefining digital products around intents and outcomes to building the data, memory, and orchestration layers that make agentic execution possible. In Part 2, we move from architecture to scale—covering AI operations, platform strategy, pricing, governance, security, compliance, and the leadership playbook required to turn agentic systems into enterprise-grade digital labor.
