The Enterprise Is Moving From Chat to Action

That is the real change behind the story of agentic AI in 2026.

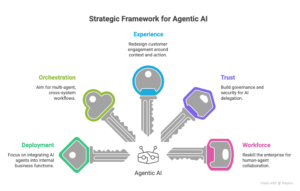

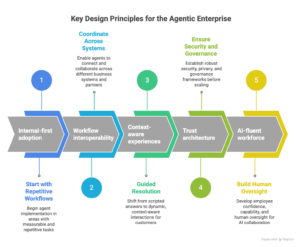

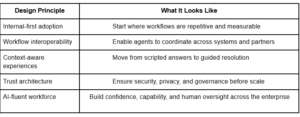

The Google Cloud article is not just saying that AI agents are the next step after chatbots. The deeper point is that businesses are moving away from systems that only answer questions and toward systems that can reason, plan, and act in business workflows with human oversight. It frames this change as a matter of competition, not just a technical curiosity, and lists five areas where leaders need to act: driving adoption, coordinating workflows, improving the customer experience, building trust, and getting the workforce ready.

What makes this moment important is not that agents are new. It is the change in the operating model.

Once AI starts to help with execution instead of just producing content, the business is no longer just using a tool. It is changing how work is done, how authority is shared, how trust is maintained, and how value is measured. That’s why the article is important. It frames agentic AI as a way to change a business, not just a way to improve a model.

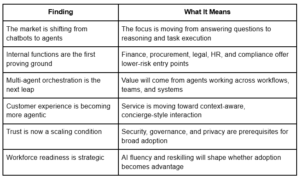

Key Takeaways

The article’s five-insight structure and focus on both business transformation and workforce readiness make these themes very clear.

The first business advantage will come from within the business, not from the outside.

One of the best things about the article is that it says that early agentic gains will come from internal line-of-business functions. It says that financial planning, procurement, contract management, legal, and HR are all good places to start because they all have repetitive tasks, lots of paperwork, and clear inefficiencies. The article says that these areas are the best places to build “agentic AI muscle” before moving on to use cases that are more public or customer-facing.

That is an important strategic signal.

Businesses often want AI transformation to start where it is most visible. But the most lasting changes happen where the workflow is structured, the value can be measured, and the risk can be managed. Internal operations are more than a safe entry point. They are where organizations learn what delegation, supervision, escalation, and trust look like in practice. That’s how adoption turns into capability.

What the article is really about

The article talks about internal automation as more than just making things run more smoothly. It sees it as getting the organization ready. Businesses get experience with deployment, make their teams more comfortable with AI, and lay the groundwork for bigger agentic changes by automating repetitive internal workflows first.

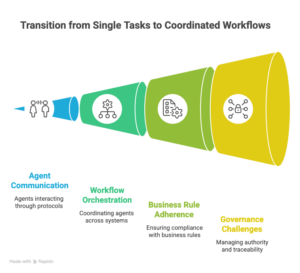

The big jump is not from one smart agent to another. It is from single tasks to coordinated workflows.

The second main point of the article is that the next wave of value will come from next-generation workflows in which agents work together. It mentions the Agent2Agent Protocol and the Agent Payments Protocol as ways to make it easier for agents to talk to each other and to enterprise systems, even when they come from different developers, frameworks, and organizations.

That matters because most businesses’ biggest problems don’t sit inside a single task. They sit between tasks.

They sit in handoffs, policy checks, supplier dependencies, approval chains, and fragmented systems. The article’s examples from manufacturing and supply networks make it clear that the promise of agentic AI is not just to speed up one step, but to coordinate action across multiple steps while following defined business rules.

Why this is important for strategy

Automating tasks makes people more productive. Workflow orchestration changes how much leverage you have in your business.

That’s a different kind of value. It means that agentic AI isn’t just about replacing human workers. It’s about making a new layer of coordination for the business. But that also makes governance harder, because when multiple agents work together across systems, authority, traceability, and exception management become very important.

The way customers experience service is changing from scripted to contextual.

Another important point in the article is that customer-facing AI is improving because it is becoming more contextual. The article contrasts the old way of automating customer service, which mostly used pre-programmed chatbots to handle simple requests, with the new way, where agents can remember preferences, understand more complicated requests, and guide users through more complex journeys. It uses retail and agentic commerce as examples of this change.

This is a big change.

For years, businesses worked to improve service by speeding up response times and lowering call volumes. The new standard is different. The article says that agents can help customers with more and more of their tasks from start to finish, such as finding products, comparing options, negotiating price or delivery terms, and even making purchases on the customer’s behalf. It also points to wider effects on travel, hospitality, and consumer goods.

What is different here

The design goal is no longer automation alone. It is guided resolution.

That means the strength of the context architecture behind the interaction (memory, preferences, business rules, policy boundaries, and decision support) will matter more and more to the customer experience. The interface might still look like a conversation. But the value now lies in usefulness, not in the conversation itself.

Trust is not a supporting layer. It is the condition for scale.

The fourth insight in the article is probably the most important. It says that while agents can make work easier and improve performance, they won’t be widely used until people trust them. It connects that trust to security, privacy, governance, and safe execution, and it warns that misuse or missing identity verification could create big risks, including a backlash against the brand.

This is where the piece goes from being just a positive trend piece to something more.

The article makes it clear that agents are not ready for safe enterprise deployment by default. The groundwork has to be laid first. Businesses need to make sure that the tools and platforms they use allow for responsible governance and safe access to company data. In other words, adoption isn’t just about what an agent can do. It’s about whether the system around it makes that capability safe enough to delegate.

Trust in the agentic enterprise means that:

- enterprise systems are safe to access,

- agents are governed in how they act,

- enterprise and customer data is kept private,

- and delegated actions are kept within limits and can be seen.

That is the real constraint on scaling. Without trust, agentic AI stays a pilot. With trust, it becomes operational infrastructure. This is my reading of the article’s argument about adoption and governance.
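To make "kept within limits and can be seen" concrete, here is a minimal sketch in Python. It is not from the article and does not describe any real product. The class names, action names, and spend ceiling are all hypothetical, chosen only to show the shape of the idea: each delegated action is checked against a policy, and each decision is logged, including refusals.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DelegationPolicy:
    """Hypothetical limits a business might place on one agent."""
    allowed_actions: set[str]
    max_amount: float  # assumed per-action spend ceiling


@dataclass
class AuditedAgentGate:
    """Checks each requested action against the policy and records the outcome."""
    policy: DelegationPolicy
    audit_log: list[dict] = field(default_factory=list)

    def request(self, agent_id: str, action: str, amount: float = 0.0) -> str:
        if action not in self.policy.allowed_actions:
            decision = "denied"
        elif amount > self.policy.max_amount:
            decision = "escalated"  # over the ceiling: hand off to a human reviewer
        else:
            decision = "approved"
        # Log every request, including refusals, so delegated actions stay visible.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "action": action,
            "amount": amount,
            "decision": decision,
        })
        return decision


# Illustrative usage with made-up values.
gate = AuditedAgentGate(DelegationPolicy({"create_purchase_order"}, max_amount=5000.0))
print(gate.request("procurement-agent", "create_purchase_order", 1200.0))   # approved
print(gate.request("procurement-agent", "create_purchase_order", 25000.0))  # escalated
print(gate.request("procurement-agent", "delete_vendor"))                   # denied
```

The point of the sketch is the pairing: a limit without a log is not visible, and a log without a limit is not bounded. A real deployment would also need identity verification and data access controls, which this example leaves out.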

The workforce question is not secondary. It is strategic.

The fifth point made in the article is that people, not prompts, should always be the focus of AI projects. It says that as agents take care of boring tasks, businesses will value human skills like critical thinking, problem-solving, and making connections with others more. It also talks about how the traditional career pyramid is changing into a diamond shape, with more talented people moving into strategic roles.

That framing is important because it helps avoid one of the biggest mistakes in enterprise AI strategy: treating workforce adaptation as an HR afterthought.

The article makes it clear that AI fluency will be very important for success and that everyone in the company, from front-line workers to executives, needs to know how to use AI tools and models. It also calls for a culture that makes people feel safe using AI and for plans for employees to keep learning that let them gain real-world experience at their own pace.

What this means for leaders

In 2026, the AI edge will come from more than access to models.

It will depend on whether the organization can train its employees to use AI in a way that is safe, effective, and confident. When technology is ready but the workforce isn’t, adoption is uneven. Fluency in the workforce turns experimentation into a skill that the whole organization can use. This conclusion is based on the article’s focus on reskilling, upskilling, and AI fluency.

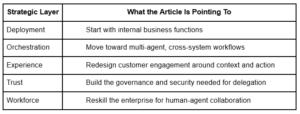

The article’s main point is that agentic AI is really a change in the way things work.

The article has five main points that are easy to see.

The deeper structure is more strategic.

That’s what makes the piece important to CXOs. It’s not just saying that agents are getting better. It says that how well organizations can redesign execution around them will become more and more important for their competitive edge. This synthesis is an inference based on the five clear themes in the article.

How the agentic enterprise will probably be made

Each of these principles is based on one of the article’s five insights.

The most important question in the boardroom

If AI agents are no longer just helping people think, but also helping businesses do things more and more, then the main question for leaders is this:

Are we still using AI to boost productivity, or are we redesigning the business to work responsibly with machines that do tasks for us?

That is what the article is really asking.

Not agent versus chatbot.

Not experimentation versus adoption.

But operating-model readiness versus competitive drift.

In 2026, the organizations that win won’t just be the ones that use AI more. They will be the ones who can put together a single system that includes internal deployment, workflow orchestration, trusted execution, and workforce fluency. That conclusion is a summary of the five main points made in the article.
